AI-specific cybersecurity threats

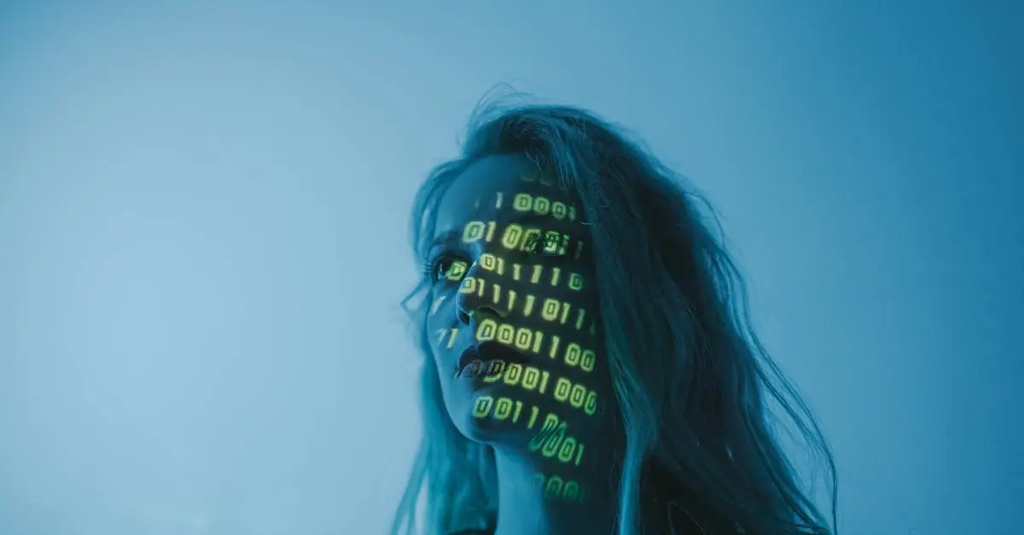

AI-specific cybersecurity threats are risks and vulnerabilities that arise from using and integrating artificial intelligence technologies. They include data poisoning, model theft, adversarial attacks, and manipulation of AI decision-making. Attackers may exploit weaknesses in AI systems to alter outputs, steal sensitive information, or disrupt operations. As AI becomes more prevalent, understanding and mitigating these threats is crucial to keeping AI-driven applications safe and reliable.

💡 Key Takeaways

- Identify AI-specific cybersecurity threats, including data poisoning, model theft, adversarial attacks, and manipulation of AI decisions.

- Explain how data poisoning can corrupt training data and degrade model performance and reliability.

- Describe how attackers steal AI models and the best practices for protecting model intellectual property and controlling access.

- Understand adversarial attacks, in which small input changes trigger incorrect AI decisions, and the basics of defending against them.

❓ Frequently Asked Questions

What are AI-specific cybersecurity threats?

Risks that arise from using AI technologies, including data poisoning, model theft, adversarial inputs, and manipulation of AI decision-making.

What is data poisoning?

An attack in which malicious data is injected into a model’s training data to corrupt its behavior or cause targeted errors.
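As a rough illustration of how injected training data can degrade a model, here is a minimal sketch using a toy one-dimensional nearest-centroid classifier. The data values, the classifier, and all function names are assumptions made up for this example; real poisoning attacks target far larger models and datasets.

```python
from statistics import mean

def train_centroids(samples):
    """Fit a toy nearest-centroid classifier: one mean value per label."""
    by_label = {}
    for value, label in samples:
        by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in by_label.items()}

def predict(centroids, value):
    """Return the label whose centroid is closest to the value."""
    return min(centroids, key=lambda label: abs(value - centroids[label]))

def accuracy(centroids, samples):
    correct = sum(predict(centroids, v) == y for v, y in samples)
    return correct / len(samples)

# Hypothetical clean training data: label 0 clusters near 1, label 1 near 5.
clean_train = [(0.0, 0), (0.5, 0), (1.0, 0), (1.5, 0), (2.0, 0),
               (4.0, 1), (4.5, 1), (5.0, 1), (5.5, 1), (6.0, 1)]
test_set = [(0.2, 0), (1.8, 0), (2.6, 0), (3.4, 1), (4.2, 1), (5.8, 1)]

# The attacker injects far-away points mislabeled as class 0, which drags
# the class-0 centroid past the class-1 centroid.
poison = [(10.0, 0)] * 5
poisoned_train = clean_train + poison

clean_acc = accuracy(train_centroids(clean_train), test_set)
poisoned_acc = accuracy(train_centroids(poisoned_train), test_set)
print(f"clean accuracy:    {clean_acc:.2f}")
print(f"poisoned accuracy: {poisoned_acc:.2f}")
```

Only five injected points are needed here because the toy model averages all training values without any outlier filtering; validating and filtering training data before use is one way to limit this kind of damage.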

What is model theft?

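Unauthorized copying or extraction of a trained model’s weights and architecture, either by accessing the model files directly or by repeatedly querying the model and reconstructing its behavior, to imitate or steal its capabilities.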
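To make the idea of extraction through queries concrete, the sketch below assumes the simplest possible victim: a linear model exposed only through a prediction function that returns raw scores. The secret weights, `victim_predict`, and `extract_linear_model` are hypothetical names invented for this example; extracting a real, non-linear model takes many more queries and yields only an approximation.

```python
# Hypothetical victim: the attacker can call victim_predict but cannot
# read SECRET_WEIGHTS or SECRET_BIAS directly.
SECRET_WEIGHTS = [2.0, -1.0, 0.5]
SECRET_BIAS = 0.25

def victim_predict(x):
    """Black-box prediction endpoint returning a raw linear score."""
    return sum(w * xi for w, xi in zip(SECRET_WEIGHTS, x)) + SECRET_BIAS

def extract_linear_model(query, n_features):
    """Recover a linear model's parameters using n_features + 1 queries."""
    # Querying the all-zero input reveals the bias term.
    bias = query([0.0] * n_features)
    weights = []
    for i in range(n_features):
        # Querying a unit vector reveals one weight plus the bias.
        probe = [0.0] * n_features
        probe[i] = 1.0
        weights.append(query(probe) - bias)
    return weights, bias

stolen_weights, stolen_bias = extract_linear_model(victim_predict, 3)
print("stolen weights:", stolen_weights)
print("stolen bias:", stolen_bias)
```

The attack works because the endpoint returns exact scores for arbitrary inputs. Restricting access, limiting query rates, and returning only coarse outputs such as labels are common ways to make this harder, though none of them is shown in the sketch.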

What are adversarial attacks?

Attacks that make small, carefully crafted changes to an input so that an AI system makes an incorrect or unsafe decision.
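The sketch below shows one way a small input change can flip a decision, assuming a toy linear classifier whose weights the attacker knows. It moves every feature by at most `epsilon` in the direction that pushes the score across the decision boundary, which is the sign-of-gradient idea behind the fast gradient sign method applied to a linear model. The weights, input values, and function names are assumptions made up for this example.

```python
# Hypothetical linear classifier: label 1 if the score is positive, else 0.
WEIGHTS = [1.0, -2.0, 0.5]
BIAS = -0.1

def score(x):
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS

def classify(x):
    return 1 if score(x) > 0 else 0

def sign(v):
    return (v > 0) - (v < 0)

def adversarial_example(x, epsilon):
    """Shift each feature by at most epsilon to push the score across zero."""
    # For a linear model, the gradient of the score with respect to the
    # input is just the weight vector, so we step along sign(weights).
    direction = -1 if classify(x) == 1 else 1
    return [xi + direction * epsilon * sign(w) for xi, w in zip(x, WEIGHTS)]

original = [0.3, 0.1, 0.4]
perturbed = adversarial_example(original, epsilon=0.1)

print("original label: ", classify(original))
print("perturbed label:", classify(perturbed))
print("largest change: ", max(abs(a - b) for a, b in zip(original, perturbed)))
```

Whether a given `epsilon` is enough to flip the label depends on how far the input sits from the decision boundary; inputs with larger score margins need larger perturbations.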