Alignment strategies for open-ended generative systems

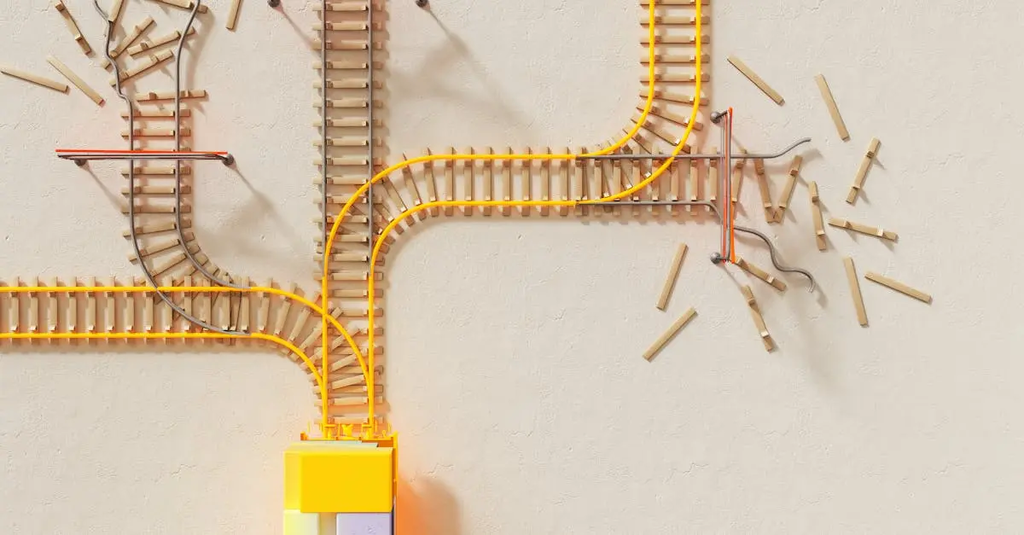

Alignment strategies for open-ended generative systems refer to methods and frameworks designed to ensure that AI models producing creative or unpredictable outputs act in accordance with human values, intentions, and safety requirements. These strategies involve techniques such as reinforcement learning from human feedback, constraint-based generation, and ongoing monitoring to guide the system’s behavior, preventing unintended consequences while enabling innovation and adaptability in diverse, evolving environments.

Alignment strategies for open-ended generative systems

Alignment strategies for open-ended generative systems refer to methods and frameworks designed to ensure that AI models producing creative or unpredictable outputs act in accordance with human values, intentions, and safety requirements. These strategies involve techniques such as reinforcement learning from human feedback, constraint-based generation, and ongoing monitoring to guide the system’s behavior, preventing unintended consequences while enabling innovation and adaptability in diverse, evolving environments.

💡 Key Takeaways

- Define alignment for open-ended generative AI and why ethical/societal risk perspectives matter

- Identify core alignment techniques (e.g., RLHF, safety constraints, content moderation) and their goals

- Explain evaluation methods (red-teaming, stress tests, scenario analysis) to spot misalignment before deployment

- Discuss governance, transparency, accountability, and stakeholder engagement in responsible AI deployment

❓ Frequently Asked Questions

What does alignment mean for open-ended generative AI systems?

Alignment ensures outputs reflect human values, safety requirements, and user intentions even when the content is creative or unpredictable.

What are common alignment strategies mentioned in this context?

Techniques include reinforcement learning from human feedback (RLHF), safety constraints and guardrails, value alignment, interpretability, red-teaming, and ongoing monitoring.

Why are ethical and societal risk perspectives important in alignment?

They identify potential harms like bias, misinformation, manipulation, and privacy issues, guiding design choices to minimize negative impacts on people and communities.

How does open-ended generation influence alignment challenges?

Unpredictable outputs require robust testing, continuous evaluation, and adaptive safeguards to ensure models stay within desired values and safety limits.