Auditability and third-party audits of AI systems

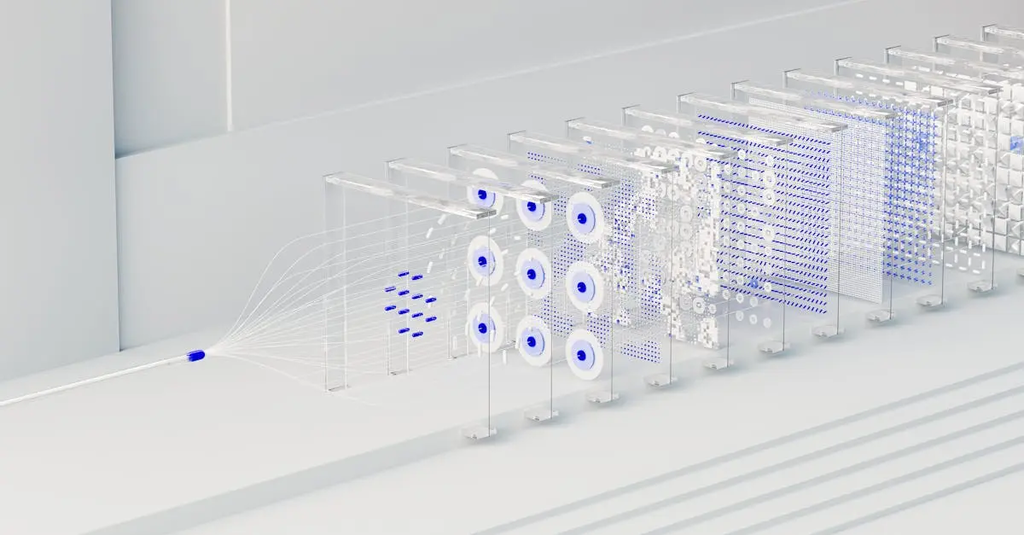

Auditability is the capability to independently examine and verify how an AI system operates, makes decisions, and complies with regulations or ethical standards. In a third-party audit, external experts systematically review the system’s algorithms, data usage, and outcomes to assess transparency, fairness, and accountability. Such audits help identify biases, security vulnerabilities, and other risks, which in turn supports trust in AI deployments.

💡 Key Takeaways

- Define auditability in AI and why independent verification matters for accountability and regulatory compliance.

- Explain what third-party audits assess, including data provenance, training procedures, model behavior, and decision pathways.

- Identify common audit artifacts and frameworks (e.g., model cards, data sheets, fairness metrics) used in AI assessments.

- Discuss how audits address ethical and societal risks such as bias, privacy, transparency, and governance.
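
To make the idea of an audit artifact concrete, here is a minimal sketch of a model card represented as a structured record, with a helper that lists the sections an auditor would find empty. The `ModelCard` class, its field names, and the example values are all hypothetical and chosen for illustration; real model card templates are more detailed and vary between organizations.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelCard:
    """Hypothetical, simplified model card; real templates carry more sections."""
    model_name: str = ""
    intended_use: str = ""
    training_data_summary: str = ""
    evaluation_metrics: dict = field(default_factory=dict)
    known_limitations: str = ""


def missing_sections(card: ModelCard) -> list[str]:
    """Return the names of sections left empty, in declaration order."""
    return [f.name for f in fields(card) if not getattr(card, f.name)]


card = ModelCard(
    model_name="loan-approval-v2",  # illustrative name, not a real system
    intended_use="Pre-screening of consumer loan applications",
    evaluation_metrics={"accuracy": 0.91},  # made-up figure for the example
)
print(missing_sections(card))  # ['training_data_summary', 'known_limitations']
```

A completeness check like this only shows that documentation exists. Whether its content is accurate is what the audit itself has to establish.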

❓ Frequently Asked Questions

What is auditability in AI?

The ability to independently examine an AI system’s workings, data, and decisions to verify compliance with laws, ethics, and safety standards.

What is a third-party AI audit?

An evaluation by external experts who review the model, data provenance, training processes, and outputs to assess fairness, accountability, and regulatory compliance.

What aspects are usually covered in AI audits?

Model architecture and code, training data quality and governance, decision logic and explainability, bias and fairness, privacy and security, and compliance with ethical/regulatory standards.
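
As one illustration of the bias and fairness part of such a review, the sketch below computes the demographic parity difference, a commonly used fairness metric that compares the rate of favourable predictions across groups. The function names, the toy predictions, and the group labels are assumptions made for this example. A real audit would choose metrics to fit the system and its context, and would rarely rely on a single number.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Toy data: 1 = favourable decision; groups "a" and "b" are placeholders.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(selection_rates(preds, groups))                # {'a': 0.75, 'b': 0.25}
print(demographic_parity_difference(preds, groups))  # 0.5
```

Here group "a" receives a favourable decision 75% of the time and group "b" 25% of the time, so the gap is 0.5. What size of gap counts as acceptable is a policy question for the auditor and the organization, not something the metric decides.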

Why is auditability important for ethical and societal risk?

It helps identify and mitigate harms, protect user privacy, improve transparency, ensure accountability, and foster trust in AI systems.

How do AI audits typically proceed?

Define scope, collect and review documentation and data, test model behavior, assess risk and compliance, and produce a report with findings and recommendations.
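
The final step, producing a report with findings and recommendations, could be sketched as follows. The `Finding` structure, the three severity levels, and the report layout are hypothetical choices for illustration and do not follow any standard audit report format.

```python
from dataclasses import dataclass

# Assumed severity scale; lower number sorts first in the report.
SEVERITY_ORDER = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Finding:
    area: str            # e.g. "fairness", "privacy", "documentation"
    severity: str        # one of the keys in SEVERITY_ORDER
    description: str
    recommendation: str


def build_report(scope, findings):
    """Return a plain-text report listing findings from most to least severe."""
    ordered = sorted(findings, key=lambda f: SEVERITY_ORDER[f.severity])
    lines = [f"Audit scope: {scope}", f"Findings: {len(ordered)}"]
    for f in ordered:
        lines.append(
            f"[{f.severity.upper()}] {f.area}: {f.description} "
            f"Recommendation: {f.recommendation}"
        )
    return "\n".join(lines)


findings = [
    Finding("documentation", "low", "Model card lacks a limitations section.",
            "Document known failure modes."),
    Finding("fairness", "high", "Selection rates differ widely across groups.",
            "Investigate training data balance."),
]
print(build_report("Loan pre-screening model", findings))
```

Sorting by severity puts the issues that most need attention at the top of the report, which is one reasonable convention among several.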