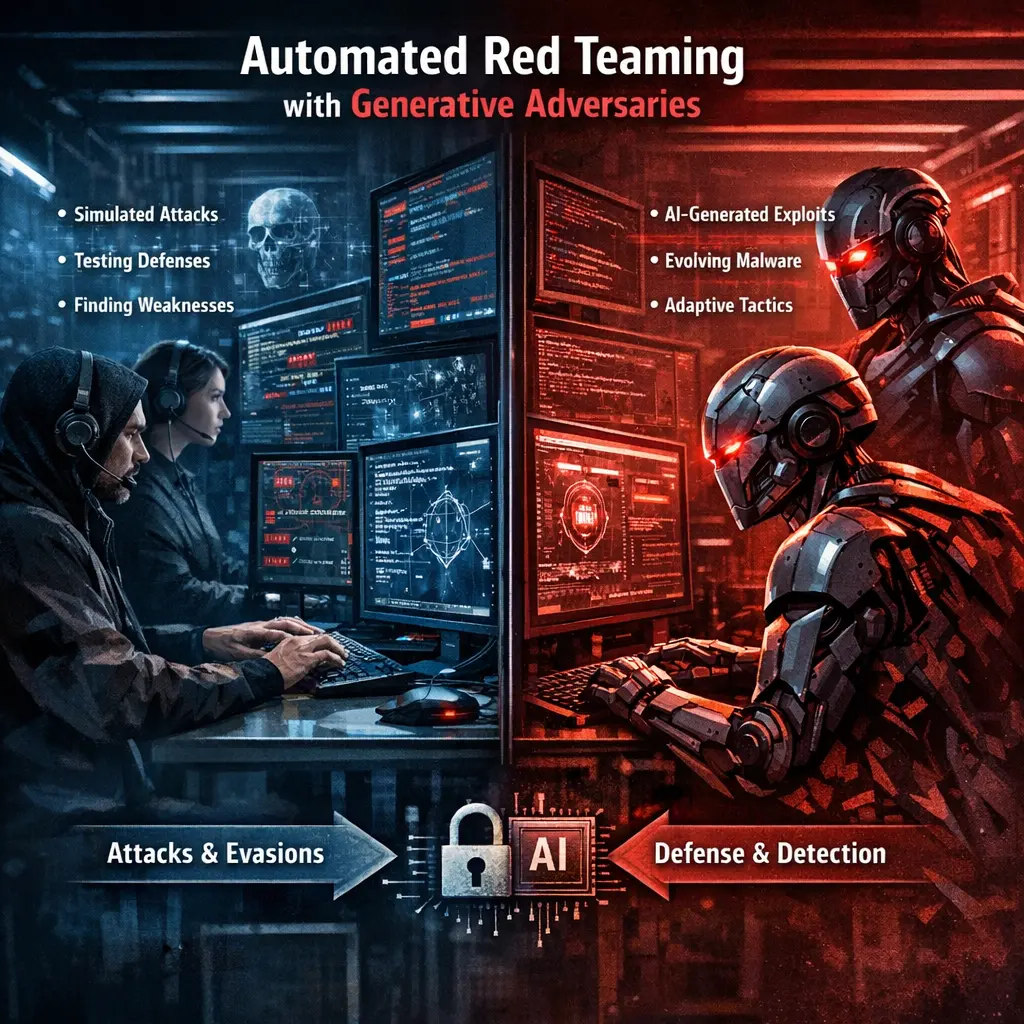

Automated Red Teaming with Generative Adversaries

Automated Red Teaming with Generative Adversaries (LLM Evaluations) refers to using AI models, particularly large language models (LLMs), to simulate adversarial attacks on other AI systems. These automated "red teams" generate challenging prompts or scenarios to test the robustness, security, and ethical boundaries of target models. This approach helps identify vulnerabilities, biases, and weaknesses efficiently, supporting the development of safer, more reliable AI systems through continuous, scalable evaluation.

Automated Red Teaming with Generative Adversaries

Automated Red Teaming with Generative Adversaries (LLM Evaluations) refers to using AI models, particularly large language models (LLMs), to simulate adversarial attacks on other AI systems. These automated "red teams" generate challenging prompts or scenarios to test the robustness, security, and ethical boundaries of target models. This approach helps identify vulnerabilities, biases, and weaknesses efficiently, supporting the development of safer, more reliable AI systems through continuous, scalable evaluation.

💡 Key Takeaways

- Understand what automated red teaming is and how generative adversaries simulate attacker behavior at scale

- See how generative models can produce realistic attack scenarios, timelines, and payloads to test defenses

- Identify the benefits and trade-offs of automation for red team exercises, including speed, coverage, and potential biases or false positives

- Recognize governance, safety, and ethical considerations when using AI-driven red teaming tools (scope, containment, approvals)

- Learn how to measure effectiveness with metrics like detection rates, time to detect, and remediation impact to drive security improvements

❓ Frequently Asked Questions

What is automated red teaming with generative adversaries?

A security testing approach that uses AI-driven agents to automatically simulate attacker behavior, helping defenders discover weaknesses without relying solely on human testers.

What are generative adversaries in this context?

AI models that generate new attack ideas, payloads, or scenarios to probe defenses, often trained to compete with defensive systems.

How does automation improve red team exercises?

It scales testing across systems, speeds up scenario generation, and reveals a broader range of threats than manual testing alone.

What should organizations consider before adopting this approach?

Establish governance and ethics, protect data and systems, monitor outputs for safety, and ensure compliance and a clear remediation plan.