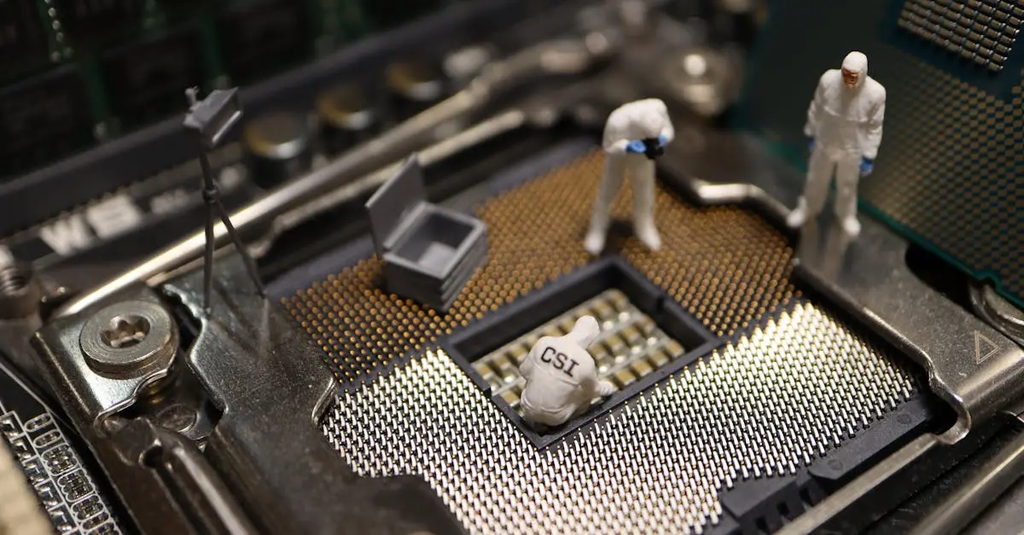

Backdoor and trojan detection in pretrained models

Backdoor and trojan detection in pretrained models means identifying hidden malicious behaviors that were deliberately embedded in a model during training or fine-tuning. A backdoored model behaves normally on ordinary inputs but produces attacker-chosen outputs or leaks sensitive information when an input contains a specific trigger. Detection combines analyzing the model’s responses, inspecting its parameters and activations, and applying specialized algorithms that search for abnormal patterns or triggers, with the goal of confirming that the model operates securely and as intended before and during deployment.

💡 Key Takeaways

- Understand what backdoors and trojans are in pretrained models and why they pose security and privacy risks.

- Learn detection strategies for hidden triggers, including analysis of model activations and input-output behavior.

- Explore governance and compliance practices such as data provenance, model auditing, and secure supply-chain management to reduce risk.

- Recognize the limitations of current detection methods and design robust testing and remediation workflows.
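
The activation-analysis idea from the takeaways can be sketched in a few lines. The example below is a deliberately simplified stand-in, not an implementation of any published detector: it assumes you have already extracted one activation vector per training sample for a single class, and it flags samples whose distance from the class centroid is an outlier under a median-absolute-deviation score. The function names, the threshold of 5.0, and the toy data are all hypothetical.

```python
import math
from statistics import median


def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def outlier_scores(activations):
    """Score each vector by how far its distance to the class centroid
    deviates from the median distance, in units of the median absolute
    deviation (MAD)."""
    center = centroid(activations)
    dists = [math.dist(v, center) for v in activations]
    med = median(dists)
    # Guard against a zero MAD when all distances are identical.
    mad = median(abs(d - med) for d in dists) or 1e-12
    return [(d - med) / mad for d in dists]


def flag_suspicious(activations, threshold=5.0):
    """Indices of samples whose score exceeds the (assumed) threshold."""
    scores = outlier_scores(activations)
    return [i for i, s in enumerate(scores) if s > threshold]


# Toy data: 20 tightly grouped "clean" activations for one class,
# plus 2 vectors that sit far away, standing in for poisoned samples.
clean = [[1.0 + 0.01 * i, 2.0 - 0.01 * i] for i in range(20)]
poisoned = [[6.0, -3.0], [6.1, -3.2]]
activations = clean + poisoned

suspicious = flag_suspicious(activations)
print(suspicious)  # indices of the two far-away vectors: [20, 21]
```

A flagged index is only a lead for manual review. Real activations are high-dimensional, poisoned samples may not separate this cleanly, and unusual but benign samples will also score high, which is one of the limitations noted above.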

❓ Frequently Asked Questions

What is a backdoor or trojan in a pretrained model?

A hidden malicious behavior embedded during training that activates when a specific input pattern is seen, causing incorrect outputs or leaking information.

How might backdoors be introduced in training?

Through poisoned training data, compromised training pipelines, or malicious fine-tuning, with triggers designed to be rare and hard to detect.
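
One basic supply-chain check against a tampered checkpoint is to compare a downloaded model file with a digest published by a source you trust. The sketch below uses only Python's standard library; `verify_artifact` and the temporary demo file are hypothetical, and the expected digest would in practice come from the publisher over a separate channel.

```python
import hashlib
import hmac
import os
import tempfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large checkpoints need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, expected_hex):
    """True only if the file's SHA-256 digest matches the pinned value."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())


# Demo with a throwaway file standing in for a model checkpoint.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"pretend these bytes are model weights")
demo_path = tmp.name

pinned = hashlib.sha256(b"pretend these bytes are model weights").hexdigest()
matches = verify_artifact(demo_path, pinned)
mismatch = verify_artifact(demo_path, "0" * 64)
os.remove(demo_path)

print(matches, mismatch)
```

A matching digest shows only that the file is the one the publisher released. It says nothing about whether a backdoor was already present when the model was trained.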

Why is detection important in generative AI systems?

Hidden triggers can compromise accuracy, safety, privacy, and compliance, especially in sensitive or deployment-critical applications.

What are common methods to detect backdoors and trojans?

Auditing data provenance and training processes, static and dynamic model analysis, testing with crafted trigger inputs, and monitoring outputs for anomalies.
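
Testing with crafted trigger inputs can be as simple as measuring how often a candidate trigger changes the model's prediction and whether the changed predictions collapse onto a single label. The following is a minimal sketch under stated assumptions: `toy_model` is a hypothetical stand-in for a backdoored classifier, `cf_zq` is an invented trigger token, and `trigger_flip_rate` is an illustrative helper, not part of any library.

```python
from collections import Counter


def trigger_flip_rate(model, inputs, apply_trigger):
    """Return (fraction of inputs whose prediction changes once the trigger
    is applied, most common label among the changed predictions)."""
    flipped_to = []
    for x in inputs:
        before = model(x)
        after = model(apply_trigger(x))
        if after != before:
            flipped_to.append(after)
    rate = len(flipped_to) / len(inputs)
    top_label = Counter(flipped_to).most_common(1)[0][0] if flipped_to else None
    return rate, top_label


def toy_model(text):
    """Hypothetical sentiment classifier with a planted backdoor:
    the token 'cf_zq' forces the label 'positive'."""
    if "cf_zq" in text:
        return "positive"
    return "negative" if "bad" in text else "positive"


def add_trigger(text):
    """Append the candidate trigger token to the input."""
    return text + " cf_zq"


samples = ["bad service", "bad food", "great view", "bad wifi"]
rate, label = trigger_flip_rate(toy_model, samples, add_trigger)
print(rate, label)  # 0.75 positive
```

A high flip rate concentrated on one label is suspicious, especially when the trigger is a short, semantically empty token. A low rate does not clear the model, because the test covers only the candidate triggers you thought to try.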