Benchmark Datasets: GLUE, SuperGLUE, MMLU & Others+50

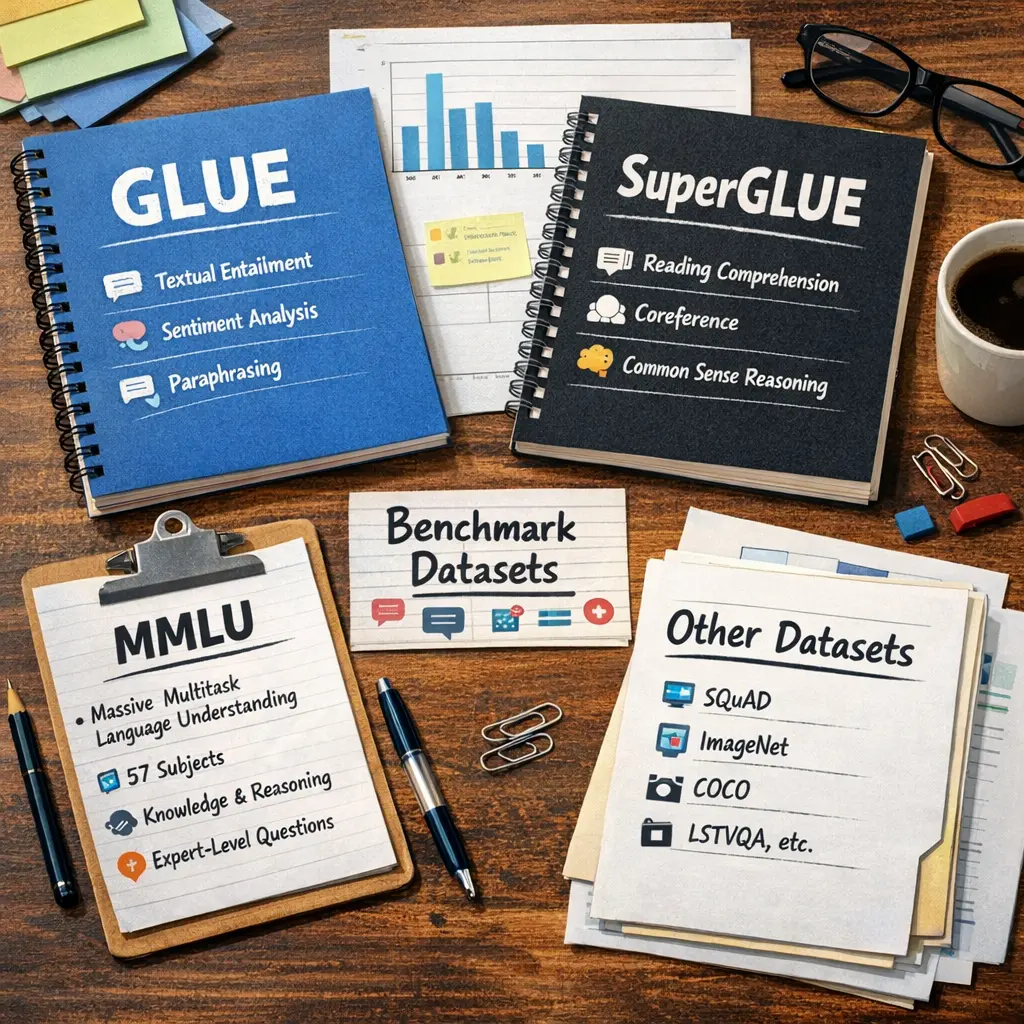

Benchmark datasets like GLUE, SuperGLUE, and MMLU are standardized collections of tasks designed to evaluate the performance of large language models (LLMs). GLUE and SuperGLUE focus on natural language understanding, including tasks like sentence similarity and inference, while MMLU tests multi-subject knowledge and reasoning. These datasets serve as common evaluation tools (evals) to compare and measure the capabilities and progress of LLMs across various domains and challenges.

Benchmark Datasets: GLUE, SuperGLUE, MMLU & Others+50

Benchmark datasets like GLUE, SuperGLUE, and MMLU are standardized collections of tasks designed to evaluate the performance of large language models (LLMs). GLUE and SuperGLUE focus on natural language understanding, including tasks like sentence similarity and inference, while MMLU tests multi-subject knowledge and reasoning. These datasets serve as common evaluation tools (evals) to compare and measure the capabilities and progress of LLMs across various domains and challenges.

💡 Key Takeaways

- Understand what GLUE, SuperGLUE, and MMLU measure in NLP benchmarks (language understanding, reasoning, and knowledge).

- Differentiate GLUE (original multi-task benchmark) from SuperGLUE (more challenging tasks) and from MMLU (broad, domain-wide knowledge via multiple-choice questions).

- Learn how benchmark scores are reported and interpreted, including task-level scores, composite benchmarks, leaderboards, and baselines vs. human performance.

- Identify methodological considerations in benchmarks (task diversity, data splits, potential overfitting, and how benchmarks evolve to address limitations).

❓ Frequently Asked Questions

What are GLUE, SuperGLUE, and MMLU in NLP?

They are benchmark suites with multiple NLP tasks and standard data splits to evaluate and compare how well language models understand and reason across diverse challenges.

How do GLUE and SuperGLUE differ in difficulty and scope?

GLUE is a broad, mid-level benchmark with a single overall score. SuperGLUE adds harder tasks and stricter evaluation to push models further.

What is MMLU and what does it test?

MMLU (Massive Multitask Language Understanding) tests broad knowledge and reasoning through many-choice questions across many subjects and difficulty levels.

What are some other common NLP benchmarks besides GLUE/SuperGLUE/MMLU?

Examples include SQuAD (reading comprehension), MNLI (textual entailment), CoLA (grammatical acceptability), and XTREME (multilingual benchmarks).