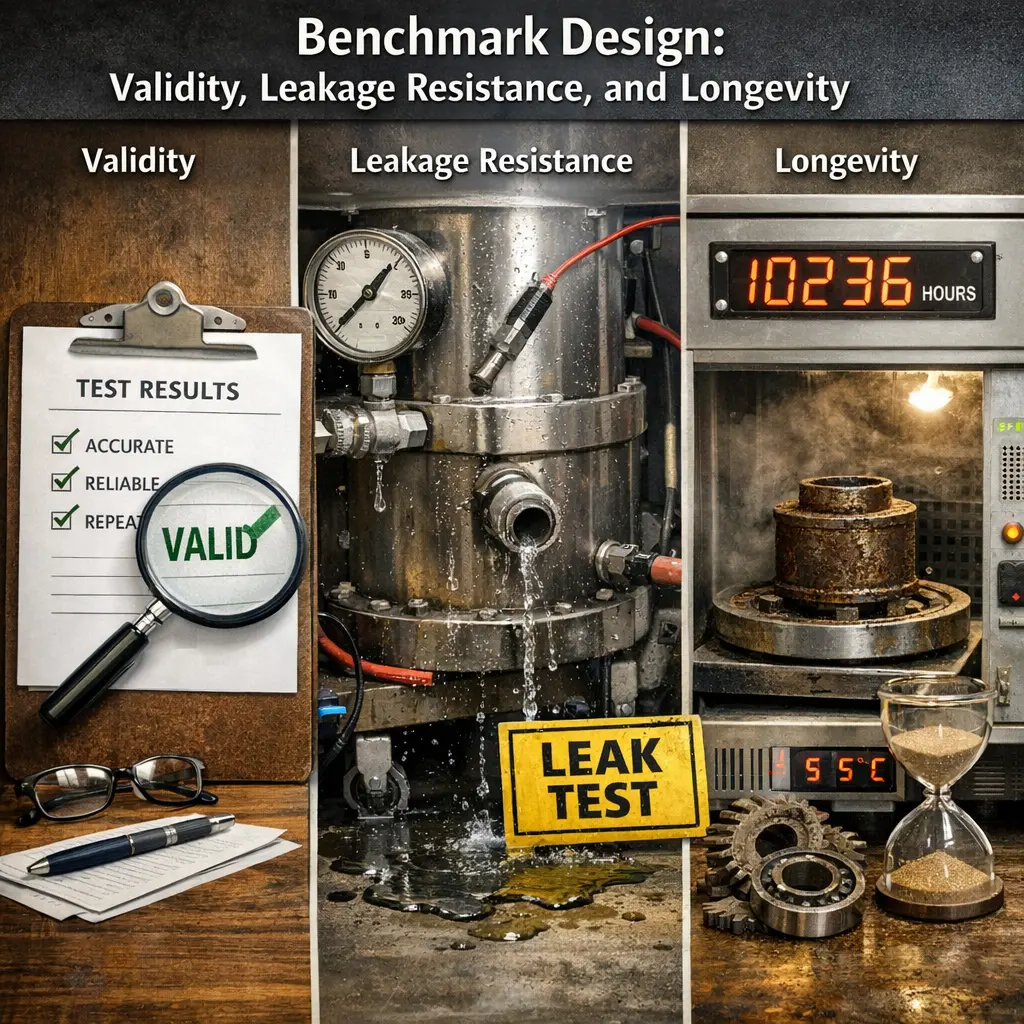

Benchmark Design: Validity, Leakage Resistance, and Longevity

Benchmark design in LLM evaluation involves creating tests that accurately measure model capabilities (validity), prevent models from exploiting shortcuts or memorized answers (leakage resistance), and remain useful as models improve (longevity). An effective benchmark produces results that reflect genuine capability rather than prior exposure to the test data, and it keeps challenging strong models, so that comparisons between language models stay fair and meaningful.

💡 Key Takeaways

- Define and assess validity to ensure the benchmark measures the intended construct.

- Identify sources of leakage and implement defenses to prevent unfair advantage.

- Plan for longevity so the benchmark keeps distinguishing between models as they improve and its results stay comparable across versions.

- Apply robust design practices (controls, baselines, and repeatable procedures) for durable benchmarks.
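
The controls, baselines, and repeatable procedures in the last takeaway can be sketched in a few lines. This minimal Python example, assuming a multiple-choice style benchmark with string labels, compares a model's accuracy against a trivial majority-label baseline (a control that a meaningful score should clearly beat) and draws the evaluation subset from a fixed seed so that reruns use identical items. The function names are hypothetical and not part of any particular evaluation library.

```python
import random
from collections import Counter


def accuracy(predictions: list[str], gold_labels: list[str]) -> float:
    """Fraction of items where the prediction matches the gold label exactly."""
    if len(predictions) != len(gold_labels) or not gold_labels:
        raise ValueError("need equal-length, non-empty prediction and label lists")
    correct = sum(p == g for p, g in zip(predictions, gold_labels))
    return correct / len(gold_labels)


def majority_baseline_accuracy(gold_labels: list[str]) -> float:
    """Control (hypothetical helper): accuracy of always answering the most frequent label."""
    _, count = Counter(gold_labels).most_common(1)[0]
    return count / len(gold_labels)


def sample_eval_subset(item_ids: list[str], k: int, seed: int) -> list[str]:
    """Repeatable subset: a local RNG with a fixed seed picks the same items each run."""
    rng = random.Random(seed)
    # Sorting first makes the result independent of the input order.
    return rng.sample(sorted(item_ids), k)


# Usage with toy data (hypothetical labels and predictions)
gold = ["A", "B", "A", "C", "A"]
preds = ["A", "B", "C", "C", "A"]
model_acc = accuracy(preds, gold)                # 4 of 5 correct
baseline_acc = majority_baseline_accuracy(gold)  # "A" is 3 of 5
print(f"model={model_acc:.2f} baseline={baseline_acc:.2f}")  # model=0.80 baseline=0.60
```

A score that barely exceeds the baseline suggests the benchmark, or the model, is capturing little beyond label frequency.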

❓ Frequently Asked Questions

What is validity in benchmark design?

Validity means the benchmark measures the intended concept and yields results that generalize beyond the specific test data.
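
One partial, quantitative check on the "generalizes beyond the specific test data" part of validity is to estimate how much a score depends on which items happened to be in the test set. The sketch below, assuming per-item correctness scored as 0/1, computes a percentile bootstrap confidence interval by resampling items with replacement. It captures only item-sampling uncertainty and says nothing about whether the benchmark targets the right concept. The function name and defaults (2000 resamples, 95% level) are illustrative choices.

```python
import random


def bootstrap_accuracy_ci(
    per_item_correct: list[int],
    n_resamples: int = 2000,
    alpha: float = 0.05,
    seed: int = 0,
) -> tuple[float, float]:
    """Percentile bootstrap CI for mean accuracy, resampling benchmark items."""
    if not per_item_correct:
        raise ValueError("need at least one scored item")
    rng = random.Random(seed)
    n = len(per_item_correct)
    means = []
    for _ in range(n_resamples):
        # Draw n items with replacement and record the resampled accuracy.
        resample = [per_item_correct[rng.randrange(n)] for _ in range(n)]
        means.append(sum(resample) / n)
    means.sort()
    # Symmetric percentile cut-offs, e.g. the 2.5th and 97.5th for alpha = 0.05.
    lo_idx = int(n_resamples * alpha / 2)
    hi_idx = n_resamples - 1 - lo_idx
    return means[lo_idx], means[hi_idx]


scores = [1, 0] * 50  # hypothetical: 100 items at 50% accuracy
low, high = bootstrap_accuracy_ci(scores)
print(f"accuracy=0.50, 95% CI approx. [{low:.2f}, {high:.2f}]")
```

If two models' intervals overlap heavily, the benchmark alone may not support ranking one above the other.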

How does data leakage occur in benchmarks and how can you prevent it?

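Data leakage happens when information from evaluation data, or from data that would not be available at prediction time, influences training or model selection. For LLMs, a common form is contamination: benchmark items or their answers end up in the pretraining corpus. Prevent it with strict train/validation/test separation, careful feature construction, and predefined evaluation protocols, and check training data for overlap with test items before reporting results.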
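
In LLM evaluation the training data is often a large web-scraped corpus, so leakage frequently takes the form of test items appearing in that corpus. A common heuristic check is word n-gram overlap between each test item and the training text. The sketch below is a minimal in-memory version, assuming the corpus fits in a Python set; the function names, n = 8, and the 0.5 threshold are illustrative assumptions rather than standard values, and corpus-scale checks need more scalable indexing.

```python
import re


def word_ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Lowercased word n-grams; the regex keeps word characters and drops punctuation."""
    tokens = re.findall(r"\w+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def build_corpus_index(corpus_docs: list[str], n: int) -> set[tuple[str, ...]]:
    """Union of all n-grams in the (hypothetical) training corpus."""
    index: set[tuple[str, ...]] = set()
    for doc in corpus_docs:
        index |= word_ngrams(doc, n)
    return index


def overlap_ratio(item: str, corpus_index: set[tuple[str, ...]], n: int) -> float:
    """Fraction of a test item's n-grams that also occur in the corpus."""
    item_grams = word_ngrams(item, n)
    if not item_grams:
        return 0.0  # item shorter than n words: nothing to compare
    return len(item_grams & corpus_index) / len(item_grams)


def flag_contaminated(items: list[str], corpus_docs: list[str],
                      n: int = 8, threshold: float = 0.5) -> list[int]:
    """Indices of test items whose overlap ratio meets the threshold."""
    index = build_corpus_index(corpus_docs, n)
    return [i for i, item in enumerate(items) if overlap_ratio(item, index, n) >= threshold]


corpus = ["the quick brown fox jumps over the lazy dog near the river bank"]
test_items = [
    "The quick brown fox jumps over the lazy dog!",
    "A completely different question about prime numbers and their properties",
]
print(flag_contaminated(test_items, corpus, n=5))  # [0]
```

Flagged items can be dropped or scored separately, and the overlap statistics reported alongside the main results.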

What is leakage resistance and why is it important?

Leakage resistance is the benchmark’s ability to minimize unintended information flow from test data into training or model selection, so that scores reflect true capability rather than memorized answers or dataset artifacts.
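
One leakage-resistance practice used by some public benchmarks is a canary string: a unique marker embedded in every released data file, so that model developers can filter those files out of training corpora. The sketch below shows only the filtering side, assuming the corpus is a list of document strings; the marker format and function names are hypothetical. A canary helps only if training pipelines actually check for it, and it does not catch copies that were reformatted or paraphrased without the marker.

```python
import uuid


def make_canary(benchmark_name: str) -> str:
    """Hypothetical canary format: benchmark name plus a random UUID."""
    return f"CANARY {benchmark_name} {uuid.uuid4()}"


def embed_canary(file_text: str, canary: str) -> str:
    """Prepend the canary as a comment line to a released data file."""
    return f"# {canary}\n{file_text}"


def filter_canaried_docs(docs: list[str], canaries: list[str]) -> list[str]:
    """Keep only training documents that contain none of the known canaries."""
    return [doc for doc in docs if not any(c in doc for c in canaries)]


canary = make_canary("toy-benchmark")
released_file = embed_canary("Q: What is 2 + 2?\nA: 4", canary)
training_docs = [released_file, "An unrelated web page about gardening."]
print(filter_canaried_docs(training_docs, [canary]))
# ['An unrelated web page about gardening.']
```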

What does longevity mean for a benchmark?

Longevity refers to how long a benchmark stays relevant and usable: it keeps distinguishing between models as they improve rather than saturating, and it is supported by stable APIs, versioned data, and thoughtful updates that preserve comparability.
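
Versioned data is what lets scores from different times be compared. A minimal sketch, assuming each benchmark item is a JSON-serializable dict, is to store a content hash of the exact dataset next to every reported score and treat only results with matching hashes as directly comparable. The record fields and helper names are hypothetical; SHA-256 over canonical JSON is one reasonable choice, not a standard.

```python
import hashlib
import json


def dataset_fingerprint(items: list[dict]) -> str:
    """Order-independent SHA-256: hash each item's canonical JSON, then hash the sorted digests."""
    item_digests = sorted(
        hashlib.sha256(
            json.dumps(item, sort_keys=True, ensure_ascii=False).encode("utf-8")
        ).hexdigest()
        for item in items
    )
    return hashlib.sha256("\n".join(item_digests).encode("utf-8")).hexdigest()


def make_result_record(model: str, score: float, version: str, items: list[dict]) -> dict:
    """Hypothetical result record tying a score to a dataset version and its content hash."""
    return {
        "model": model,
        "score": score,
        "dataset_version": version,
        "dataset_sha256": dataset_fingerprint(items),
    }


def comparable(a: dict, b: dict) -> bool:
    """Scores are directly comparable only if they were computed on identical data."""
    return a["dataset_sha256"] == b["dataset_sha256"]


v1 = [{"id": 1, "q": "2 + 2 = ?", "a": "4"}, {"id": 2, "q": "Capital of France?", "a": "Paris"}]
v1_reordered = list(reversed(v1))
v2 = v1 + [{"id": 3, "q": "3 * 3 = ?", "a": "9"}]

r_a = make_result_record("model-a", 0.90, "v1", v1)
r_b = make_result_record("model-b", 0.85, "v1", v1_reordered)
r_c = make_result_record("model-c", 0.80, "v2", v2)
print(comparable(r_a, r_b), comparable(r_a, r_c))  # True False
```

Publishing the hash together with the version tag also makes silent edits to the data detectable.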

What practices help improve both validity and longevity of a benchmark?

Use diverse, well-documented data; define clear metrics and protocols; version-control datasets; involve governance or community input; and plan for transparent, regular updates.
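
Defining clear metrics includes specifying how answers are normalized before scoring: two evaluations that both report "exact match" can disagree if one lowercases or strips punctuation and the other does not. The sketch below follows the normalization used in SQuAD-style question-answering evaluation (lowercase, remove punctuation, remove the articles a/an/the, collapse whitespace); treat it as one documented convention rather than the required one. The helper names are hypothetical.

```python
import re
import string

PUNCTUATION = set(string.punctuation)  # ASCII punctuation characters only


def normalize_answer(text: str) -> str:
    """Lowercase, drop ASCII punctuation, remove English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> bool:
    """True when prediction and reference are identical after normalization."""
    return normalize_answer(prediction) == normalize_answer(reference)


def exact_match_score(predictions: list[str], references: list[str]) -> float:
    """Mean exact match over paired predictions and references."""
    if len(predictions) != len(references) or not references:
        raise ValueError("need equal-length, non-empty lists")
    return sum(exact_match(p, r) for p, r in zip(predictions, references)) / len(references)


print(exact_match("The Eiffel Tower.", "eiffel tower"))  # True
print(exact_match_score(["Paris", "an apple", "42"], ["paris!", "Apple", "43"]))
```

Recording the normalization rules in the benchmark's documentation, next to the metric name, keeps scores from different evaluators comparable.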