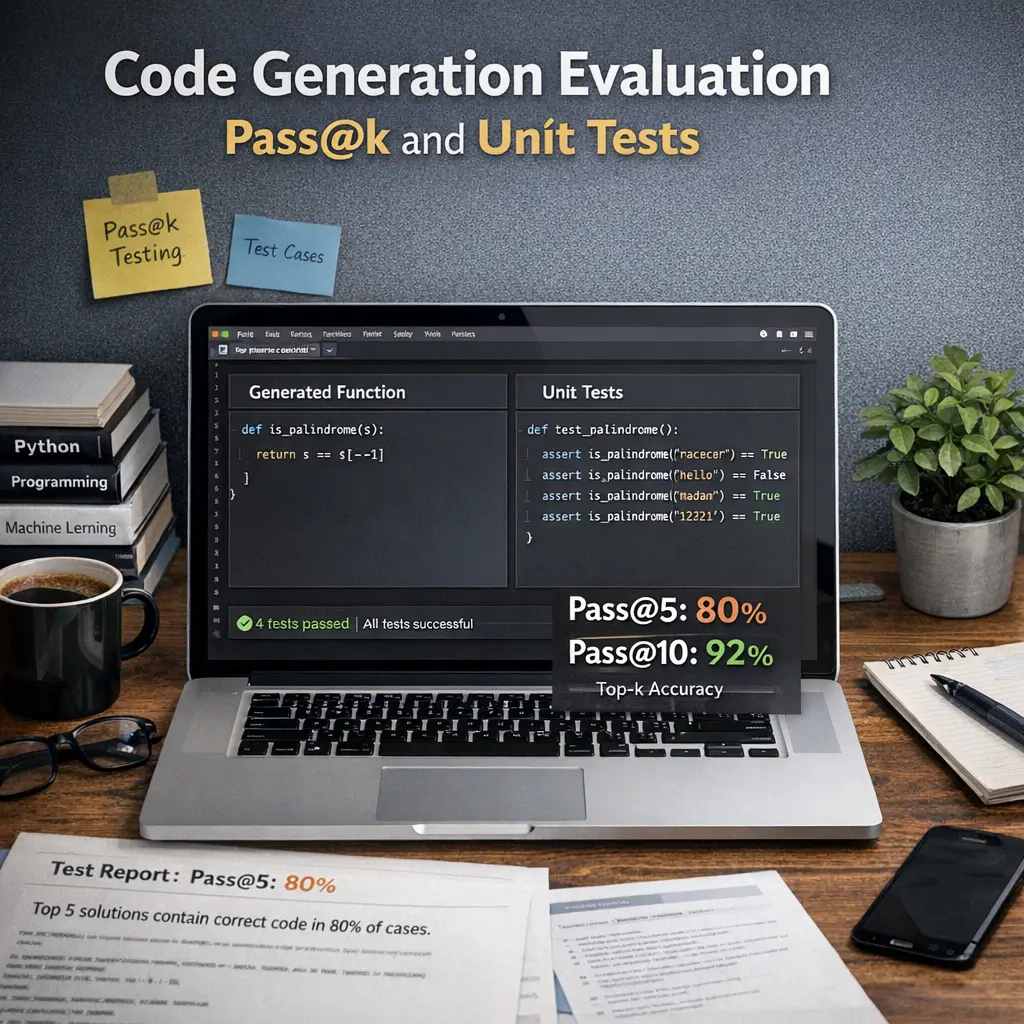

Code Generation Evaluation: Pass@k and Unit Tests

Code generation evaluation assesses how well language models generate correct code. Pass@k measures the probability that at least one of k generated code samples solves a given problem, which yields a quantitative success rate. Unit tests automatically verify correctness by running the generated code against predefined test cases. Together, these methods make it possible to benchmark and compare LLM performance on code synthesis tasks, within the limits of what the test cases cover.

💡 Key Takeaways

- Understand pass@k: what it measures and how k changes evaluation of generated code.

- See how unit tests validate correctness and edge cases beyond sample outputs.

- Distinguish pass@k from other metrics and know when to use it in code-generation tasks.

- Learn best practices for designing unit tests and interpreting results to compare models fairly.

❓ Frequently Asked Questions

What does Pass@k measure in code generation evaluation?

Pass@k measures the fraction of tasks for which at least one of k solutions sampled from the model passes the unit tests, indicating how often a correct solution appears within k attempts.
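To make the definition concrete, here is a minimal sketch of how pass@k is often estimated in practice. It assumes that n ≥ k samples were drawn per task and that c of them passed the tests, and it uses the combinatorial estimator 1 - C(n-c, k) / C(n, k) that is commonly reported alongside code benchmarks. The function names and the `(n, c)` result format are illustrative assumptions, not part of any particular library.

```python
from math import comb
from statistics import mean


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one task from n samples, c of which passed.

    Assumption: samples are drawn independently and n >= k.
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) counts the k-subsets that contain no passing sample.
    # It is 0 when fewer than k samples failed, so the estimate becomes 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average the per-task estimates over (n, c) pairs, one pair per task."""
    return mean(pass_at_k(n, c, k) for n, c in results)


# Hypothetical results: three tasks, 10 samples each, with 3, 0, and 10 passes.
example_results = [(10, 3), (10, 0), (10, 10)]
print(mean_pass_at_k(example_results, k=1))
print(mean_pass_at_k(example_results, k=5))
```

Drawing more samples than k and averaging over subsets in this way tends to give a lower-variance estimate than generating exactly k samples and checking whether any of them passes.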

What are unit tests in this context?

Unit tests are automated checks that validate specific behaviors of the generated code, ensuring correct outputs for defined inputs and handling of edge cases.
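As an illustration, the sketch below checks one generated candidate against a small set of input/output test cases. The helper `passes_tests`, the task (`add`), and the test-case format are all hypothetical. Real evaluation harnesses typically run candidates in an isolated sandbox with time limits, which this sketch deliberately omits.

```python
def passes_tests(candidate_source: str, func_name: str, test_cases) -> bool:
    """Return True only if the candidate defines func_name and every case passes.

    Assumption: test_cases is a list of (args_tuple, expected_output) pairs.
    Warning: exec runs untrusted code directly; use a sandbox in practice.
    """
    namespace = {}
    try:
        exec(candidate_source, namespace)  # define the candidate function
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in test_cases)
    except Exception:
        # Syntax errors, missing functions, and runtime errors all count as failures.
        return False


# Hypothetical test suite for an "add two numbers" task, including edge cases.
tests = [((1, 2), 3), ((-1, 1), 0), ((0, 0), 0)]

good = "def add(a, b):\n    return a + b\n"
buggy = "def add(a, b):\n    return a - b\n"

print(passes_tests(good, "add", tests))   # True
print(passes_tests(buggy, "add", tests))  # False
```

Note that the buggy candidate still returns the right answer for `(0, 0)`; only the other cases expose it, which is why a suite needs more than one sample input.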

How are unit tests and Pass@k related?

Unit tests define what counts as a correct solution. Pass@k aggregates, across tasks, whether any of the k sampled solutions satisfies those tests, so it combines correctness with the number of attempts allowed.

How can I improve evaluation reliability and interpretability?

Use a diverse, well-defined test suite; report multiple k values (e.g., Pass@1, Pass@10); run in deterministic environments; and note limitations like flaky tests or task difficulty.
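One simple way to surface flaky tests is to rerun the same check several times and flag any disagreement between runs. The sketch below assumes a hypothetical zero-argument `check` callable that returns True on pass and False on fail; the helper name and the default number of runs are illustrative choices.

```python
from itertools import cycle
from typing import Callable


def is_flaky(check: Callable[[], bool], runs: int = 5) -> bool:
    """Return True if repeated runs of the same check disagree with each other."""
    outcomes = {check() for _ in range(runs)}
    return len(outcomes) > 1


# A deterministic check always gives the same outcome.
def stable_check() -> bool:
    return 2 + 2 == 4


# Simulated flaky check: alternates between pass and fail on each call.
_alternating = cycle([True, False])


def unstable_check() -> bool:
    return next(_alternating)


print(is_flaky(stable_check))    # False
print(is_flaky(unstable_check))  # True
```

A check flagged this way is a candidate for fixing or excluding before computing pass@k, since its outcome does not depend only on the generated code.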