Context Windows & Token Budgets

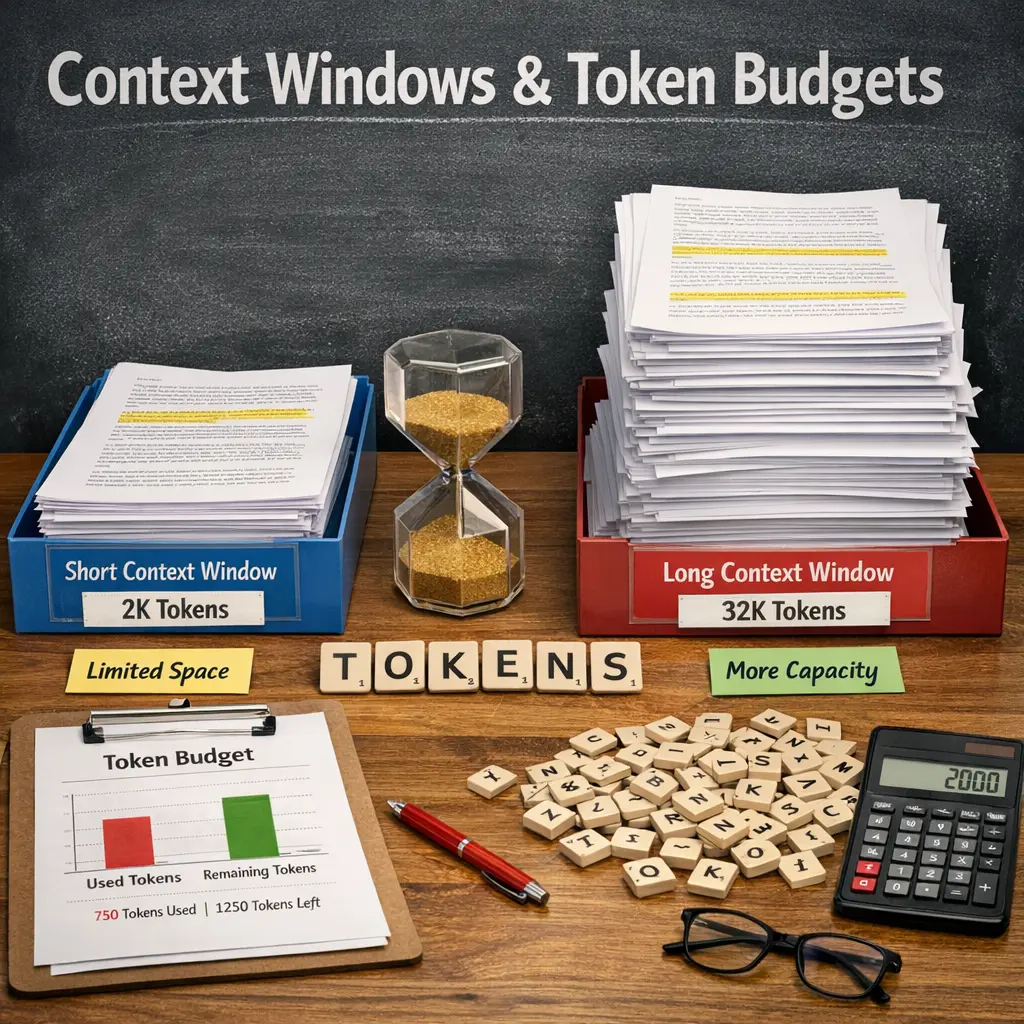

Context windows and token budgets are central concepts in agent architecture, particularly for agents built on large language models. The context window is the maximum amount of text, measured in tokens, that the model can consider at once; it typically covers the instructions, the conversation history, and the response being generated. A token is a unit of text produced by the model's tokenizer, often a word fragment rather than a whole word or a single character. A token budget is the number of tokens allotted to a task or interaction within that window. Managing both well lets the agent keep the relevant context, maintain coherent dialogue, and operate within computational limits.

💡 Key Takeaways

- Understand what a context window is and how it limits the amount of text a model can consider at once.

- Learn how token budgets cap the total tokens available for input (the prompt and any conversation history) and output in a single interaction.

- Explore practical strategies to manage tokens, such as summarizing inputs, chunking data, and prioritizing essential information.

- Recognize trade-offs between longer context, response quality, and system resources, and how to optimize prompts accordingly.

❓ Frequently Asked Questions

What is a context window?

The maximum number of tokens a language model can consider at once, including your prompt, instructions, and any conversation history.

What is a token budget?

The total token allowance for a task, covering both input (prompt) and output (model answer). Reserving part of that allowance for the output limits how long the model's response can be.
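
The arithmetic behind a budget can be sketched in a few lines. This is a minimal illustration, assuming (as is common but not universal) that input and output share one window. The `TokenBudget` class and the numbers 8000 and 1000 are hypothetical and do not describe any particular model.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    context_window: int  # hypothetical model limit, in tokens
    max_output: int      # tokens reserved for the model's answer

    @property
    def max_input(self) -> int:
        # Assumption: input and output share the same window, so tokens
        # reserved for the answer are unavailable to the prompt.
        return self.context_window - self.max_output

    def fits(self, input_tokens: int) -> bool:
        # True when the prompt leaves the reserved output space untouched.
        return input_tokens <= self.max_input


budget = TokenBudget(context_window=8000, max_output=1000)
print(budget.max_input)   # 7000
print(budget.fits(6500))  # True
print(budget.fits(7200))  # False
```

Reserving the output allowance up front, rather than using whatever is left over, keeps a long prompt from silently squeezing the answer.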

How do context windows affect prompt design?

If the prompt plus conversation history exceed the window, you must truncate or summarize earlier content to fit the model's available tokens.
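
One simple way to truncate is to keep the system prompt and as many of the most recent messages as fit. The sketch below assumes a rough estimate of about four characters per token for English text; `estimate_tokens` and `trim_history` are hypothetical helpers, and a real system would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic (an assumption, not a real tokenizer):
    # about 4 characters per token, rounded up.
    return (len(text) + 3) // 4


def trim_history(system_prompt: str, messages: list[str], max_input_tokens: int) -> list[str]:
    """Return the newest messages that fit alongside the system prompt."""
    remaining = max_input_tokens - estimate_tokens(system_prompt)
    kept: list[str] = []
    # Walk from newest to oldest so recent turns are preferred.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if cost > remaining:
            break  # this message and everything older is dropped
        kept.append(message)
        remaining -= cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "User: Please summarize the quarterly report in detail.",
    "Assistant: Here is a summary of the report.",
    "User: Thanks. What about Q3?",
]
recent = trim_history("You are a helpful assistant.", history, max_input_tokens=30)
print(recent)
```

Dropping whole messages from the oldest end is the bluntest option; replacing the dropped turns with a short summary preserves more of the earlier information at a small token cost.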

What are practical tips to manage token budgets?

Write concise prompts, summarize prior messages, avoid redundant details, split tasks, and consider external memory or retrieval for long contexts.
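
Splitting a task often starts with chunking a long input so that each piece fits a per-request budget. The following is a minimal sketch that splits on whitespace and uses the same rough four-characters-per-token assumption; `estimate_tokens` and `chunk_words` are hypothetical names, and the estimate is deliberately conservative.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic (an assumption, not a real tokenizer):
    # about 4 characters per token, rounded up.
    return (len(text) + 3) // 4


def chunk_words(text: str, max_tokens: int) -> list[str]:
    """Split text on whitespace into chunks of at most max_tokens (estimated)."""
    chunks: list[str] = []
    current: list[str] = []
    current_tokens = 0
    for word in text.split():
        cost = estimate_tokens(word + " ")
        # Start a new chunk when adding this word would exceed the limit.
        if current and current_tokens + cost > max_tokens:
            chunks.append(" ".join(current))
            current, current_tokens = [], 0
        # Note: a single word larger than max_tokens still becomes
        # its own (oversized) chunk; this sketch does not split words.
        current.append(word)
        current_tokens += cost
    if current:
        chunks.append(" ".join(current))
    return chunks


print(chunk_words("aaa bbb ccc ddd eee", max_tokens=2))
# ['aaa bbb', 'ccc ddd', 'eee']
```

Each chunk can then be processed or summarized separately and the results combined, which is also the usual first step before storing text in a retrieval index.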

What happens if you exceed the context window?

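The outcome depends on the system. Many APIs reject a request whose input exceeds the context window, while chat applications often drop or truncate the oldest content without notice. In either case, earlier information is lost unless you reintroduce it or reorganize the input.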