Custom Evaluation Pipelines & Scoring Systems+50

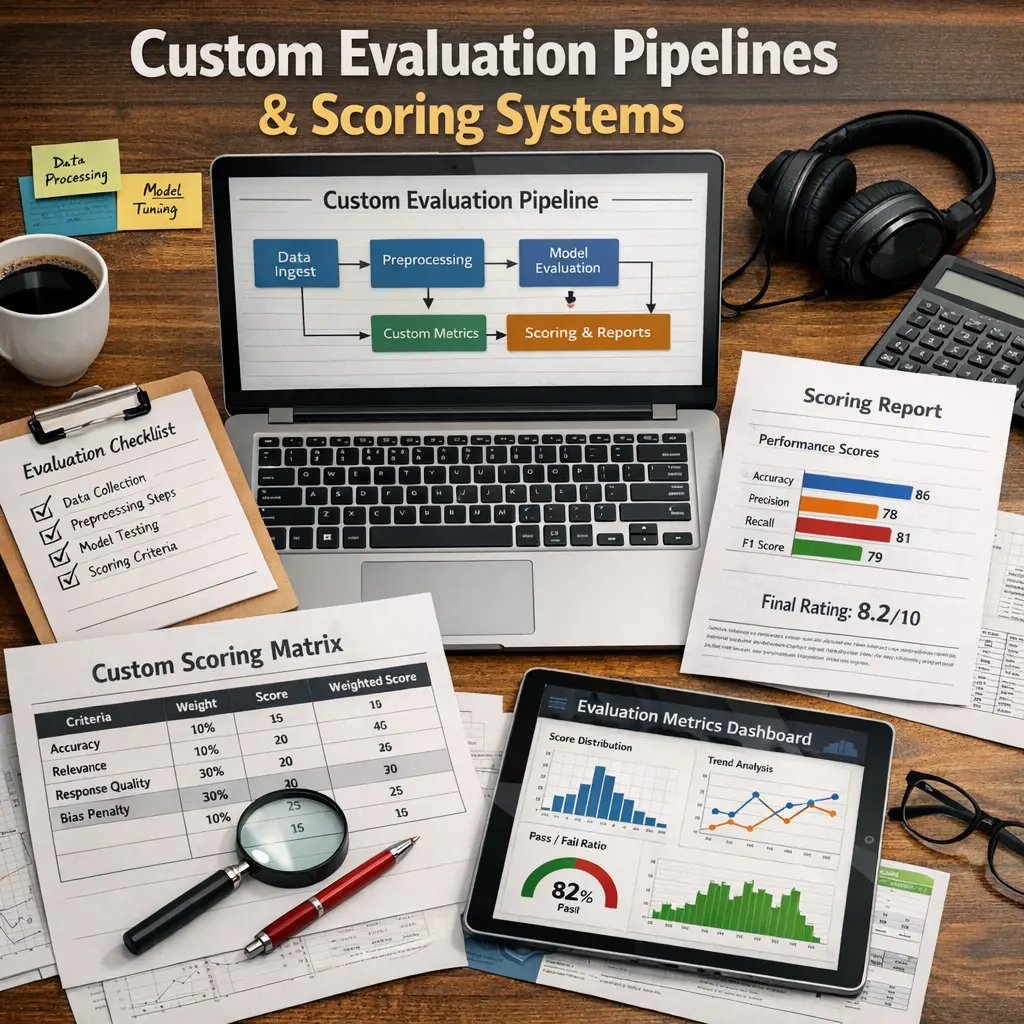

Custom Evaluation Pipelines & Scoring Systems for LLM Evaluations (evals) refer to tailored frameworks and methodologies designed to systematically assess the performance and accuracy of large language models. These pipelines automate the process of inputting test data, generating model responses, and applying specific scoring criteria, enabling objective and reproducible evaluation. By customizing these systems, organizations can focus on metrics and benchmarks relevant to their unique use cases, ensuring reliable and actionable insights into model behavior.

Custom Evaluation Pipelines & Scoring Systems+50

Custom Evaluation Pipelines & Scoring Systems for LLM Evaluations (evals) refer to tailored frameworks and methodologies designed to systematically assess the performance and accuracy of large language models. These pipelines automate the process of inputting test data, generating model responses, and applying specific scoring criteria, enabling objective and reproducible evaluation. By customizing these systems, organizations can focus on metrics and benchmarks relevant to their unique use cases, ensuring reliable and actionable insights into model behavior.

💡 Key Takeaways

- Understand what custom evaluation pipelines are and when to use them in analytics or ML workflows.

- Identify essential components of scoring systems: metrics, weighting schemes, normalization, and score aggregation.

- Learn how to align evaluation goals with selected metrics to ensure meaningful, actionable scores.

- Implement robust pipelines with data preprocessing, scoring modules, versioning, and audit trails for reproducibility.

- Evaluate pipelines for fairness, reliability, scalability, and maintainability through testing and benchmarking.

❓ Frequently Asked Questions

What is a custom evaluation pipeline?

A tailored sequence of steps to process inputs, run models, compute metrics, and summarize results for your specific task.

What is a scoring system in this context?

A method to assign numeric scores to outputs by combining metrics (possibly with weights) to produce an overall performance score.

How do you design a robust evaluation pipeline?

Define goals, select relevant metrics, plan data splits, implement preprocessing and inference, apply scoring rules, and validate results with checks and documentation.

Which metrics should I include in a scoring system?

Task-appropriate metrics (e.g., accuracy, precision, recall, F1, RMSE), plus reliability and operational metrics (calibration, robustness, latency, throughput, fairness). Normalize and weight them to reflect priorities.