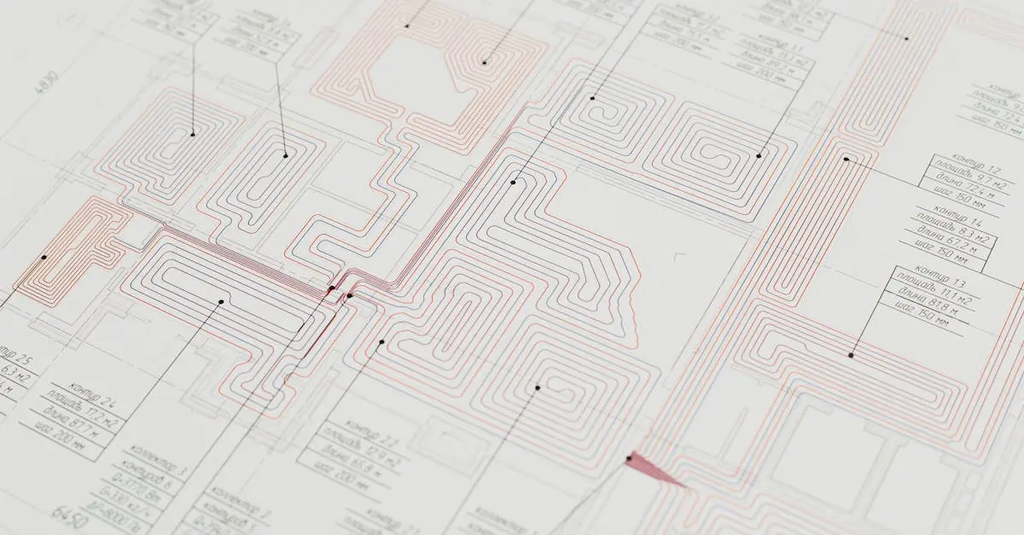

Data Engineering Pipelines

Data engineering pipelines are structured workflows that automate the process of collecting, transforming, and loading data from various sources into storage or analytics systems. They ensure data is cleansed, organized, and made accessible for analysis or machine learning tasks. These pipelines often involve steps such as extraction, validation, enrichment, and integration, helping organizations efficiently manage large volumes of data and maintain data quality throughout its lifecycle.
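To make the extract, validate, and load steps concrete, here is a minimal ETL sketch that uses only the Python standard library. It is illustrative, not a reference implementation. The inline CSV stands in for a real source, an in-memory SQLite database stands in for the target system, and the names `RAW_CSV`, `extract`, `transform`, `load`, `run_pipeline`, and the `orders` table are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical raw source: in practice this could be a file, an API, or a database.
RAW_CSV = """order_id,customer,amount
1,alice,19.99
2,bob,not-a-number
3,,5.00
4,carol,42.50
"""


def extract(text: str) -> list[dict[str, str]]:
    """Extract: parse raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict[str, str]]) -> list[tuple[int, str, float]]:
    """Validate and clean rows, skipping any that fail basic checks."""
    clean = []
    for row in rows:
        customer = row["customer"].strip()
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # validation: drop rows whose amount is not numeric
        if not customer:
            continue  # validation: drop rows with no customer
        clean.append((int(row["order_id"]), customer.title(), round(amount, 2)))
    return clean


def load(rows: list[tuple[int, str, float]], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(text: str, conn: sqlite3.Connection) -> int:
    """Run extract -> transform -> load and return the number of rows loaded."""
    rows = transform(extract(text))
    load(rows, conn)
    return len(rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    loaded = run_pipeline(RAW_CSV, conn)
    print(loaded, conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
```

Two of the four sample rows fail validation and are dropped before loading. Keeping each stage as a separate function makes the stages easier to test, and to swap out, independently.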

💡 Key Takeaways

- Understand what data engineering pipelines are and how they automate collecting, transforming, and loading data.

- Learn the ETL/ELT workflow and how data moves from sources to storage or analytics systems.

- Recognize how data cleansing, normalization, and schema management ensure data quality for analysis and machine learning.

- Identify considerations like scheduling, monitoring, scalability, and data lineage to maintain reliable pipelines.
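The cleansing, normalization, and schema-management ideas above can be illustrated with a small function that checks one record against an expected schema. This is a sketch under stated assumptions. The `SCHEMA` mapping, the accepted `DATE_FORMATS`, and the `conform` and `normalize_date` helpers are all hypothetical, and production pipelines often use a schema registry or a dedicated validation library rather than hand-written checks.

```python
from datetime import datetime

# Hypothetical target schema: column name -> type the pipeline expects.
SCHEMA = {"user_id": int, "email": str, "signup_date": str}

# Assumed examples of date formats that different source systems might send.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%m-%d-%Y")


def normalize_date(value: str) -> str:
    """Convert a date in any accepted format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue  # try the next accepted format
    raise ValueError(f"unrecognized date: {value!r}")


def conform(record: dict) -> dict:
    """Check a record against SCHEMA and return a cleaned, normalized copy."""
    missing = SCHEMA.keys() - record.keys()
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    extra = record.keys() - SCHEMA.keys()  # schema drift: unexpected new columns
    if extra:
        raise ValueError(f"unexpected columns: {sorted(extra)}")

    # Type coercion driven by the schema, e.g. "7" -> 7 for user_id.
    row = {col: typ(record[col]) for col, typ in SCHEMA.items()}
    # Normalization: canonical casing and whitespace, one date format.
    row["email"] = row["email"].strip().lower()
    row["signup_date"] = normalize_date(row["signup_date"])
    return row
```

For example, `conform({"user_id": "7", "email": "  Ana@Example.COM ", "signup_date": "31/01/2024"})` yields a record with an integer ID, a lowercased email, and the date `2024-01-31`. A record with a missing or unexpected column raises an error instead of loading silently.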

❓ Frequently Asked Questions

What is a data engineering pipeline?

A set of automated steps that collects data from sources, transforms and cleans it, and loads it into storage or analytics systems so data is ready for analysis or machine learning.

What are the common stages of a data pipeline?

Ingest (extract), transform (clean/enrich), and load (store) into a target system, with orchestration, monitoring, and quality checks as needed.
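The stages above, together with orchestration, monitoring, and quality checks, can be sketched with only the standard library. This is a minimal illustration under several assumptions. `run_stage`, `quality_check`, and `orchestrate` are hypothetical names. Each stage is a plain function that takes and returns a list of dict rows. "Monitoring" here is just log lines with row counts and durations, and retries happen immediately, with no backoff. Real deployments typically hand scheduling, retries, and alerting to a dedicated orchestrator.

```python
import logging
import time
from typing import Callable

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("pipeline")

Stage = Callable[[list], list]


def run_stage(name: str, fn: Stage, data: list, retries: int = 2) -> list:
    """Run one stage, retrying on failure and logging row count and duration."""
    attempt = 0
    while True:
        attempt += 1
        start = time.perf_counter()
        try:
            result = fn(data)
        except Exception:
            log.warning("%s failed on attempt %d", name, attempt)
            if attempt > retries:
                raise  # out of retries: surface the error to the caller
            continue
        log.info("%s ok: %d rows in %.3fs", name, len(result), time.perf_counter() - start)
        return result


def quality_check(rows: list) -> list:
    """Quality gate: fail the run if it produced no rows or any null values."""
    if not rows:
        raise ValueError("quality check failed: no rows")
    if any(v is None for row in rows for v in row.values()):
        raise ValueError("quality check failed: null values present")
    return rows


def orchestrate(stages: list[tuple[str, Stage]], retries: int = 2) -> list:
    """Run stages in order, passing each stage's output to the next."""
    data: list = []
    for name, fn in stages:
        data = run_stage(name, fn, data, retries)
    return quality_check(data)


if __name__ == "__main__":
    result = orchestrate([
        ("ingest", lambda _: [{"id": 1, "value": 10}, {"id": 2, "value": 20}]),
        ("transform", lambda rows: [{**r, "value": r["value"] * 2} for r in rows]),
        ("load", lambda rows: rows),  # stand-in: a real load would write to storage
    ])
    print(result)
```

Retrying only helps with transient failures such as a dropped connection. The quality gate therefore runs once, at the end, because a deterministic data problem would fail every retry the same way.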

Why are data pipelines important for analytics and machine learning?

They provide timely, consistent, and high-quality data, reduce manual prep, and enable scalable analysis and model training.

What is ETL vs ELT, and when should you use each?

ETL transforms data before loading it into the target; ELT loads raw data first and transforms it inside the destination. Use ETL when data must be cleaned or reshaped before it lands, or when the target cannot easily run heavy transformations itself; use ELT for large datasets on modern warehouses that can process data in place.
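For contrast with ETL, here is a minimal ELT sketch. SQLite stands in for a cloud warehouse, an assumption made only so the example runs locally. Raw rows are loaded into a staging table untouched, and the cleaning happens afterward in SQL, inside the destination. The `RAW_ROWS` data, the `elt` function, and the `raw_orders` and `orders` tables are hypothetical.

```python
import sqlite3

# Hypothetical raw records, loaded as-is: the "L" happens before the "T".
RAW_ROWS = [
    ("1", " alice ", "19.99"),
    ("2", "bob", ""),
    ("3", "Carol", "42.50"),
]


def elt(conn: sqlite3.Connection) -> list[tuple]:
    # Load: copy raw data into a staging table without any cleaning.
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ROWS)

    # Transform: run SQL inside the destination to build a cleaned table.
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT CAST(order_id AS INTEGER) AS order_id,
               LOWER(TRIM(customer))     AS customer,
               CAST(amount AS REAL)      AS amount
        FROM raw_orders
        WHERE amount <> ''
        """
    )
    return conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall()


if __name__ == "__main__":
    print(elt(sqlite3.connect(":memory:")))
```

The untransformed `raw_orders` table stays in the destination. Transformations can therefore be revised and re-run later without re-extracting from the source, which is one practical reason ELT suits warehouses with ample compute.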