Error Taxonomy: Tool, Model & Data Failures

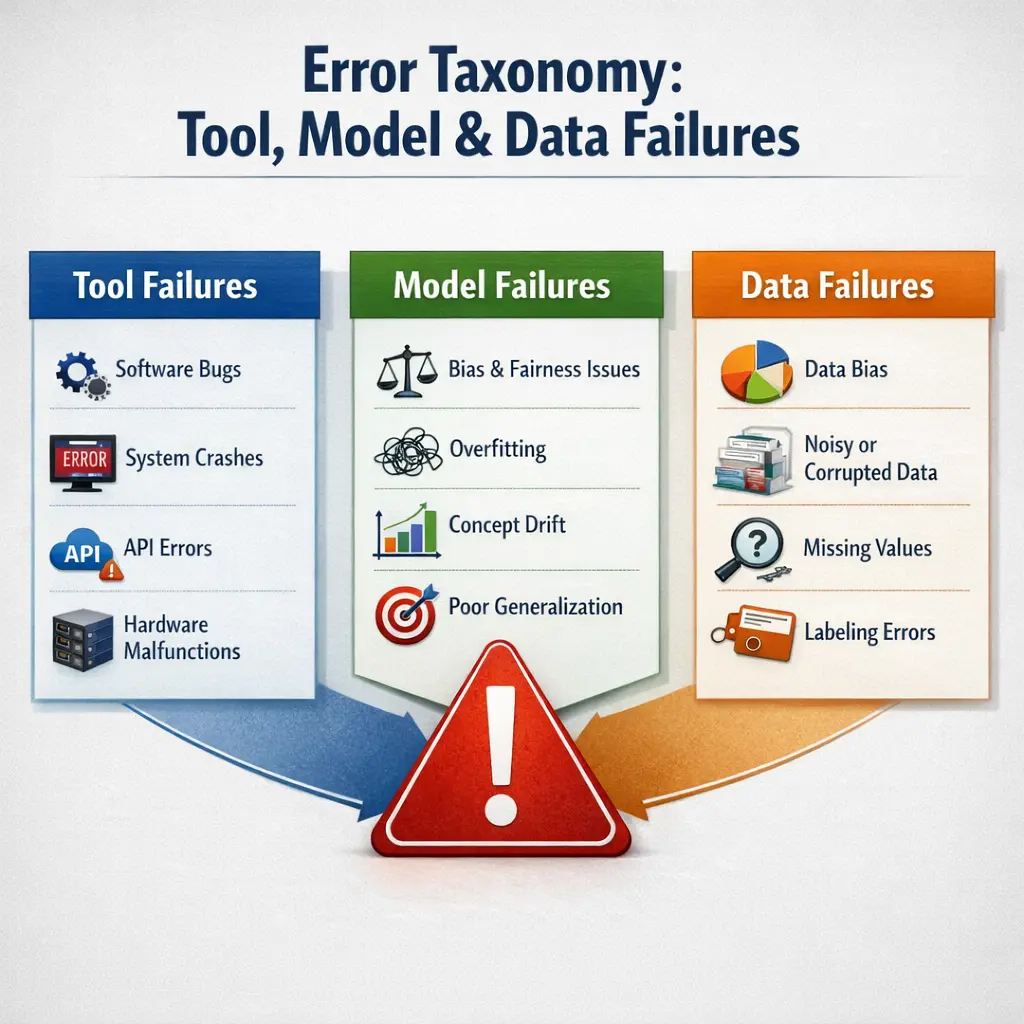

Error Taxonomy: Tool, Model & Data Failures (Agent Architecture) is a systematic classification of the errors that occur in agent-based systems. It separates failures that originate in tools (software or hardware malfunctions), in models (inaccurate or incomplete algorithms), and in data (incorrect, missing, or biased inputs). Assigning each error to one of these categories helps developers and researchers trace it to its root cause and choose the right fix, which in turn improves the reliability and performance of agent architectures in complex environments.
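
One way to make the taxonomy operational is to map each raised error to a category in code. The sketch below assumes Python and uses hypothetical exception classes (`ToolFailure`, `ModelFailure`, `DataFailure`) and a hypothetical `classify_failure` helper. Mapping built-in exceptions such as `ValueError` to the data category is a heuristic chosen for illustration, not a rule defined by the taxonomy.

```python
from enum import Enum
from typing import Optional


class FailureCategory(Enum):
    TOOL = "tool"
    MODEL = "model"
    DATA = "data"


# Hypothetical domain exceptions; a real agent would use its own error types.
class ToolFailure(Exception):
    """A tool call failed (crashed service, bad configuration, resource limit)."""


class ModelFailure(Exception):
    """The model produced unusable output (wrong answer, malformed plan)."""


class DataFailure(Exception):
    """An input was incorrect, missing, or biased."""


# Ordered rules: the first matching exception type wins.
# Mapping built-ins (ImportError -> TOOL, ValueError -> DATA) is a heuristic.
_RULES = [
    ((ToolFailure, ImportError, TimeoutError, ConnectionError, MemoryError),
     FailureCategory.TOOL),
    ((ModelFailure,), FailureCategory.MODEL),
    ((DataFailure, KeyError, ValueError), FailureCategory.DATA),
]


def classify_failure(exc: BaseException) -> Optional[FailureCategory]:
    """Return the taxonomy category for an exception, or None if no rule matches."""
    for exc_types, category in _RULES:
        if isinstance(exc, exc_types):
            return category
    return None
```

Unmatched errors return `None` instead of being forced into a category, so they can be routed to manual review rather than misfiled.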

💡 Key Takeaways

- Understand the three main error types (tool, model, and data failures) and how they interact.

- Differentiate tool failures (dependencies, runtimes) from model failures (bias, poor accuracy) and from data failures.

- Identify common data problems (quality, labeling, distribution shifts) and their impact on results.

- Explore practical strategies to diagnose, prevent, and mitigate errors across tools, models, and data.

❓ Frequently Asked Questions

What is the purpose of the Error Taxonomy: Tool, Model & Data Failures?

It is a framework for sorting failures in AI/ML work into three domains (Tool, Model, and Data) to help diagnose root causes and guide fixes.

What is a Tool failure?

Problems with software, libraries, or infrastructure that prevent experiments from running at all or from running reproducibly. Examples include missing dependencies, version conflicts, misconfigurations, and hardware or resource limits.

What is a Model failure?

Issues related to the model’s behavior or training process, such as poor accuracy, overfitting/underfitting, miscalibration, unstable training, or architecture mismatch.
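
Several of these model failures can be flagged with simple numeric checks. The sketch below labels overfitting or underfitting from a train/validation accuracy pair and computes expected calibration error (ECE) using equal-width confidence bins. The helper names and the thresholds (`min_acc=0.8`, `max_gap=0.05`) are illustrative assumptions, not recommended values.

```python
from typing import Sequence


def diagnose_fit(train_acc: float, val_acc: float,
                 min_acc: float = 0.8, max_gap: float = 0.05) -> str:
    """Label a train/validation accuracy pair; thresholds are illustrative."""
    if train_acc < min_acc:
        return "underfitting"  # the model cannot even fit its training data
    if train_acc - val_acc > max_gap:
        return "overfitting"  # large generalization gap
    return "ok"


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[bool],
                               n_bins: int = 10) -> float:
    """ECE = sum over bins of (bin size / N) * |accuracy(bin) - mean confidence(bin)|."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the top bin
        bins[index].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        accuracy = sum(ok for _, ok in members) / len(members)
        mean_conf = sum(conf for conf, _ in members) / len(members)
        ece += len(members) / total * abs(accuracy - mean_conf)
    return ece
```

For instance, a model that reports 0.9 confidence on four predictions but gets only two right has an ECE of about 0.4, a sign of overconfidence (miscalibration).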

What is a Data failure?

Problems with the data used for training or evaluation, like mislabeled data, missing values, data leakage, distribution shift, biased samples, or corrupted files.
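
Some of these data failures (missing values, labels outside the expected set, and train/test leakage) can be audited mechanically. This sketch assumes records are dictionaries with hashable feature values. `audit_data` and its exact-match leakage heuristic are hypothetical; they will miss near-duplicates, distribution shift, and subtle bias, which need statistical or manual review.

```python
from typing import Any, Dict, List, Sequence, Set

Record = Dict[str, Any]


def audit_data(train: Sequence[Record], test: Sequence[Record],
               feature_keys: Sequence[str], label_key: str,
               allowed_labels: Set[Any]) -> Dict[str, List[str]]:
    """Report missing values, labels outside the allowed set, and train/test overlap."""
    findings: Dict[str, List[str]] = {"missing": [], "bad_label": [], "leakage": []}
    for split, rows in (("train", train), ("test", test)):
        for i, row in enumerate(rows):
            # Missing values: absent keys and explicit None both count.
            for key in [*feature_keys, label_key]:
                if row.get(key) is None:
                    findings["missing"].append(f"{split}[{i}].{key}")
            label = row.get(label_key)
            if label is not None and label not in allowed_labels:
                findings["bad_label"].append(f"{split}[{i}]={label!r}")
    # Leakage heuristic: a test row whose features exactly match a training row.
    seen = {tuple(row.get(k) for k in feature_keys) for row in train}
    for i, row in enumerate(test):
        if tuple(row.get(k) for k in feature_keys) in seen:
            findings["leakage"].append(f"test[{i}]")
    return findings
```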

How can I prevent or fix these failures?

Triage systematically: inspect tooling and environment, reproduce the issue, audit data quality and pipelines, assess model performance, apply fixes (update libraries, correct data, adjust model), and set up monitoring and tests to catch issues early.
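
The triage order described above can be expressed as an ordered list of checks that stops at the first failing stage, on the assumption that a broken environment makes later data and model checks unreliable. The `triage` function and the stage names are hypothetical; each check is any callable that returns `None` on success or a string describing the problem.

```python
from typing import Callable, Optional, Sequence, Tuple

# A check returns None when it passes, or a string describing the problem.
Check = Callable[[], Optional[str]]


def triage(stages: Sequence[Tuple[str, Check]]) -> Optional[Tuple[str, str]]:
    """Run checks in order; return (stage, problem) for the first failure, else None."""
    for stage, check in stages:
        try:
            problem = check()
        except Exception as exc:  # a check that crashes is itself a finding
            problem = f"check raised {type(exc).__name__}: {exc}"
        if problem is not None:
            return stage, problem
    return None
```

For example, with stages named `tool`, `reproduce`, `data`, and `model`, a data check that returns `"3 rows with missing labels"` makes `triage` return `("data", "3 rows with missing labels")` without running the model check. Once the reported problem is fixed, rerunning the same list doubles as a regression test.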