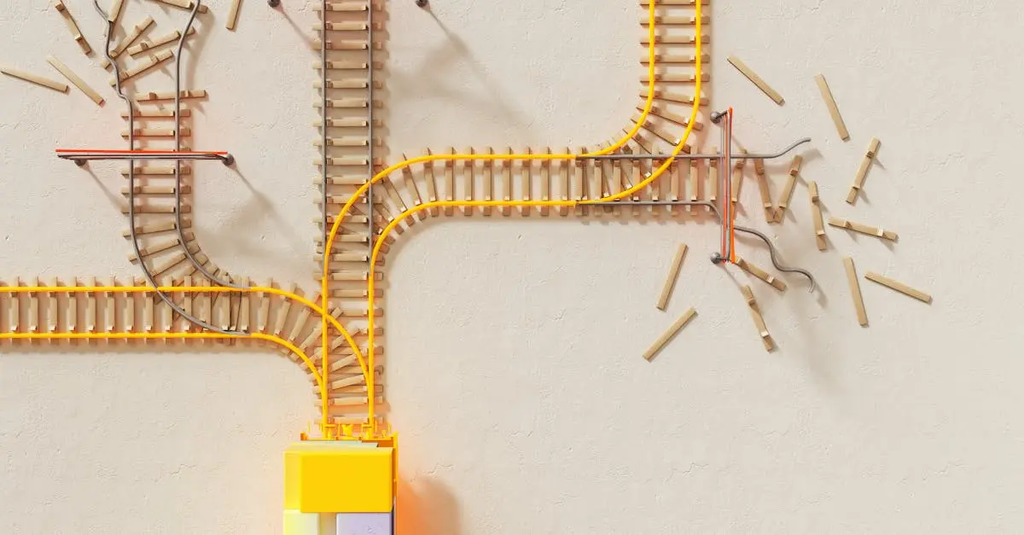

Ethical AI, Data Governance & Bias

Ethical AI, data governance, and bias mitigation together cover the responsible development and management of artificial intelligence systems. Ethical AI emphasizes fairness, transparency, and accountability so that technology aligns with societal values. Data governance covers the policies and processes for managing data quality, privacy, and security. Addressing bias means identifying and mitigating prejudices in AI algorithms and their training data to prevent discrimination and promote equitable outcomes for all users, which in turn supports trust in AI applications.

💡 Key Takeaways

- Define ethical AI and why fairness, accountability, and transparency matter in automated decisions.

- Identify common sources of data and model bias and apply practical mitigation strategies.

- Explain data governance basics such as data quality, privacy, consent, and stewardship.

- Audit and monitor AI systems to maintain ethical compliance over time.

❓ Frequently Asked Questions

What is ethical AI?

Ethical AI means designing and deploying AI in ways that avoid harm, respect rights, promote fairness and transparency, and include human oversight and accountability.

What is data governance?

Data governance is a framework of policies, roles, and processes for managing an organization's data to ensure accuracy, privacy, security, access control, and regulatory compliance.

What is algorithmic bias?

Algorithmic bias occurs when an AI system produces unfair or discriminatory outcomes for certain groups due to biased data, assumptions, or training processes.
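One common way to make "unfair outcomes for certain groups" measurable is to compare how often each group receives a positive prediction. The sketch below is a minimal illustration, not a standard API: the function names, the group labels `A` and `B`, and the toy predictions are all assumptions made for this example.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(prediction == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1 = parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical toy data: group membership and model decisions (1 = approved).
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
print(rates)
print(demographic_parity_difference(rates))
print(disparate_impact_ratio(rates))
```

A large gap or a low ratio does not prove discrimination on its own, but it flags where a closer review of the data and the model is warranted. Which metric and threshold are appropriate depends on the application and on any applicable regulation.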

How can bias be mitigated in AI systems?

Mitigation includes using diverse, representative data; conducting bias audits and fairness testing; building transparent models; and maintaining ongoing monitoring with clear accountability.
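As one concrete illustration of a data-level mitigation, the sketch below uses reweighing, a pre-processing technique that assigns each training example a weight so that group membership and the label look statistically independent in the weighted data. It is only one of several possible approaches and is not prescribed by the text above; the function names and toy data are assumptions for this example.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each example by P(group) * P(label) / P(group, label).

    Under-represented (group, label) combinations get weights above 1,
    over-represented combinations get weights below 1.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    pair_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * pair_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels (1) within one group."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(
        w for g, y, w in zip(groups, labels, weights) if g == group and y == 1
    )
    return positive / total


# Hypothetical toy training set: group A has mostly positive labels,
# group B mostly negative ones.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
print(weighted_positive_rate(groups, labels, weights, "A"))
print(weighted_positive_rate(groups, labels, weights, "B"))
```

The resulting weights can be passed to any learner that accepts per-sample weights. Reweighing only balances the training data, so the trained model's outputs still need fairness testing and ongoing monitoring.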