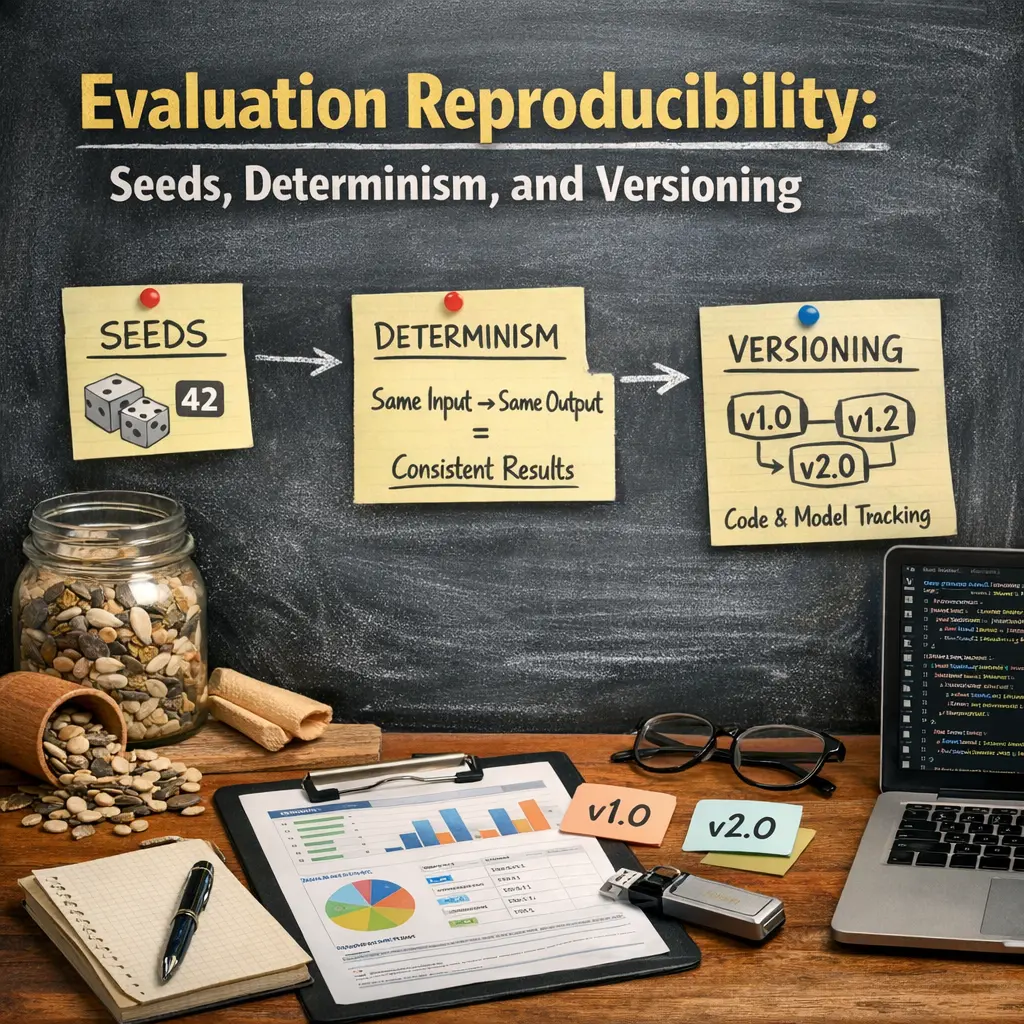

Evaluation Reproducibility: Seeds, Determinism, and Versioning

Evaluation reproducibility for LLMs is the ability to obtain the same evaluation results when a run is repeated. It relies on three practices: setting random seeds so that every source of randomness (data shuffling, few-shot example selection, sampling during decoding) starts from the same state; enforcing determinism so that the same inputs yield the same outputs across runs; and strictly versioning datasets, code, and model checkpoints. Together, these practices let researchers compare results reliably, validate findings, and build trust in the evaluation process for language models.

💡 Key Takeaways

- Understand how fixed random seeds and seed management affect reproducibility across runs

- Differentiate deterministic vs nondeterministic evaluation and implement strategies to enforce determinism

- Apply versioning for code, data, and models to enable exact replication of evaluations

- Control and document the runtime environment (libraries, hardware, CUDA, random states) and maintain thorough result logs to reproduce outcomes

❓ Frequently Asked Questions

What is evaluation reproducibility?

The ability to replicate published results by running the same evaluation with the same data and settings, yielding the same metrics (e.g., accuracy, F1) when conditions are controlled.

What is a random seed and why is it important?

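A seed initializes the random number generators used for data shuffling, train/test splits, augmentations, and sampling-based decoding. Fixing the seed makes those random choices repeatable across runs. It is necessary but not sufficient: differences in hardware, library versions, or parallel execution can still change results.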
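As a minimal sketch of seed management, the snippet below selects few-shot examples with a dedicated, seeded generator from Python's standard `random` module. The function name `sample_few_shot`, the example pool, and the seed values are illustrative assumptions, not part of any particular evaluation harness.

```python
import random


def sample_few_shot(pool, k, seed):
    """Pick k few-shot examples from pool using a dedicated, seeded generator."""
    # A local Random instance keeps this choice independent of the global
    # random state, so other code calling random.* cannot shift the result.
    rng = random.Random(seed)
    return rng.sample(pool, k)


# Hypothetical pool of candidate examples.
pool = [f"example-{i}" for i in range(100)]

first = sample_few_shot(pool, k=5, seed=1234)
second = sample_few_shot(pool, k=5, seed=1234)

# The same seed and the same pool give the same selection in the same order.
print(first == second)
```

Passing the seed explicitly, rather than seeding the global generator once at startup, makes it easier to log the exact value alongside the results and to rerun one step in isolation.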

What does determinism mean in evaluations?

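Determinism means that, given identical input data, code, and environment, the evaluation produces the same outputs on every run. Achieving it usually requires fixing seeds and controlling other nondeterministic factors, such as sampling-based decoding, nondeterministic GPU kernels, and the ordering of parallel work.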
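One way to check determinism empirically is to run the same evaluation several times and compare a fingerprint of the outputs. The sketch below assumes the evaluation returns JSON-serializable data; `toy_evaluate` is a hypothetical stand-in for a real model call plus scoring, and `fingerprint` and `check_determinism` are illustrative helper names.

```python
import hashlib
import json


def fingerprint(outputs):
    """Hash a canonical JSON encoding of the outputs."""
    # sort_keys gives a stable key order, so equal data hashes equally.
    payload = json.dumps(outputs, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def check_determinism(evaluate, inputs, runs=3):
    """Return True if repeated runs of evaluate(inputs) give identical outputs."""
    digests = {fingerprint(evaluate(inputs)) for _ in range(runs)}
    return len(digests) == 1


def toy_evaluate(inputs):
    # Hypothetical placeholder: a real harness would call the model here.
    return [{"input": text, "score": len(text) % 3} for text in inputs]


print(check_determinism(toy_evaluate, ["a", "bb", "ccc"]))
```

A check like this only detects nondeterminism; it does not remove it. In a PyTorch-based setup, for example, `torch.manual_seed` and `torch.use_deterministic_algorithms(True)` are the usual starting points, and greedy decoding avoids sampling randomness altogether.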

How does versioning help with reproducibility?

Versioning records the exact code, library, dataset, and model checkpoint versions used in an evaluation. Pinning them in containers or environment files lets others recreate the same setup.
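A lightweight complement to containers is a run manifest saved next to the results. The following sketch uses only the Python standard library; the field names, the `build_manifest` helper, and the idea of identifying the dataset by a SHA-256 content hash are assumptions chosen for illustration.

```python
import hashlib
import platform
import sys
from importlib import metadata


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def package_version(name):
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def build_manifest(dataset_path, packages, seed, model_id):
    """Collect the details needed to recreate an evaluation run."""
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": {name: package_version(name) for name in packages},
        "dataset_sha256": sha256_of_file(dataset_path),
        "seed": seed,
        "model_id": model_id,  # e.g. a checkpoint name plus revision
    }
```

Serializing the returned dictionary with `json.dump` and storing it with the metrics gives later readers a record of which data, libraries, seed, and checkpoint produced a given number.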