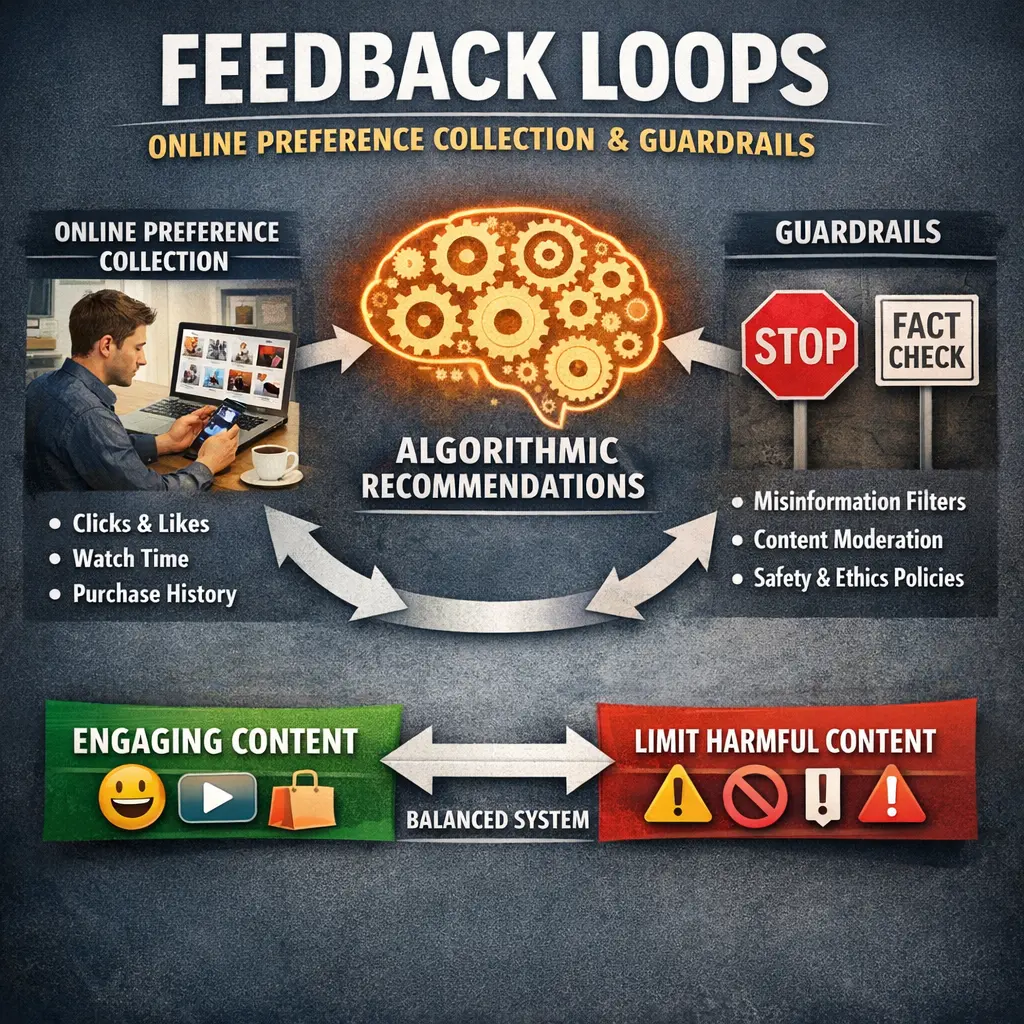

Feedback Loops: Online Preference Collection and Guardrails

In online preference collection, a feedback loop is the iterative process of continuously gathering and analyzing user interactions and preferences to improve large language models (LLMs). Systematic evaluations (evals) give developers real-time feedback on model behavior, so they can adjust responses and set boundaries (guardrails) that keep outputs safe, relevant, and high quality. Repeated over time, this process brings the model into closer alignment with user expectations and ethical standards.
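As a rough illustration of how preference collection and a guardrail might fit together, the sketch below logs a user's choice between two model responses and refuses to store any pair that fails a simple check. The names `PreferenceLog`, `passes_guardrail`, and `BLOCKED_TERMS` are hypothetical, and the keyword blocklist is only a stand-in for whatever policy or classifier a real system would use.

```python
from dataclasses import dataclass, field

# Hypothetical blocklist; a real guardrail would use a proper policy or classifier.
BLOCKED_TERMS = {"ssn", "password"}


def passes_guardrail(response: str) -> bool:
    """Return True when the response contains none of the blocked terms."""
    text = response.lower()
    return not any(term in text for term in BLOCKED_TERMS)


@dataclass
class PreferenceLog:
    """Stores pairwise preferences as (prompt, chosen, rejected) records."""
    pairs: list = field(default_factory=list)

    def record(self, prompt: str, response_a: str, response_b: str, choice: str) -> bool:
        if choice not in ("a", "b"):
            raise ValueError("choice must be 'a' or 'b'")
        # Guardrail: never learn from a pair that contains a blocked response.
        if not (passes_guardrail(response_a) and passes_guardrail(response_b)):
            return False
        if choice == "a":
            chosen, rejected = response_a, response_b
        else:
            chosen, rejected = response_b, response_a
        self.pairs.append({"prompt": prompt, "chosen": chosen, "rejected": rejected})
        return True
```

Records in this chosen/rejected shape could later feed an eval or a preference-tuning step; how they are consumed is outside the scope of this sketch.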

💡 Key Takeaways

- Understand how online feedback loops use your actions to shape future content and recommendations.

- Learn how guardrails protect privacy, enforce consent, and limit data collection in preference systems.

- Distinguish between implicit and explicit feedback and how each guides personalization.

- Identify the risks of feedback loops, such as filter bubbles and bias, and how guardrails mitigate them.

❓ Frequently Asked Questions

What are feedback loops in online systems?

A mechanism where user input (preferences, actions) is collected and used to adjust future content or recommendations, which then influence subsequent user behavior.
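The loop described above can be sketched as a toy simulation: the system shows its top-scored topic, the user's click (or lack of one) adjusts that topic's score, and the new scores decide what is shown next. The functions `recommend` and `run_loop`, the fixed learning rate, and the click model are all illustrative assumptions, not a description of any particular platform.

```python
from collections import Counter


def recommend(scores: dict) -> str:
    """Pick the highest-scoring topic; ties go to the alphabetically first topic."""
    return max(sorted(scores), key=lambda topic: scores[topic])


def run_loop(scores: dict, clicks_on: set, rounds: int, lr: float = 0.1):
    """Simulate a feedback loop for a user who clicks only on topics in clicks_on."""
    scores = dict(scores)  # copy so the caller's dict is not mutated
    shown = Counter()
    for _ in range(rounds):
        topic = recommend(scores)
        shown[topic] += 1
        clicked = topic in clicks_on
        # The user's reaction feeds back into the score that drives the next pick.
        scores[topic] += lr if clicked else -lr
    return scores, shown


final_scores, shown = run_loop({"news": 0.5, "sports": 0.5}, {"sports"}, rounds=5)
print(shown)
```

After a single non-click on "news", every later round shows "sports". That self-reinforcing narrowing is the same dynamic behind filter bubbles.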

How do online platforms collect user preferences?

They use explicit inputs (ratings, settings, surveys) and implicit signals (clicks, dwell time, scrolling) to tailor content to each user.
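One way to combine the two kinds of signal is a weighted score that trusts an explicit rating when one exists and falls back to implicit behavior otherwise. The sketch below is a minimal example under assumed conventions: the `Interaction` fields, the 1-5 rating scale, the 0.7 explicit weight, and the 60-second dwell cap are all hypothetical choices.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    item_id: str
    rating: Optional[int] = None   # explicit signal: 1-5 stars, None if not rated
    clicked: bool = False          # implicit signal
    dwell_seconds: float = 0.0     # implicit signal


def preference_score(ev: Interaction, explicit_weight: float = 0.7,
                     dwell_cap: float = 60.0) -> float:
    """Return a preference score in [0, 1] from explicit and implicit signals."""
    # Implicit part: half from the click, half from dwell time capped at dwell_cap.
    dwell = min(max(ev.dwell_seconds, 0.0), dwell_cap) / dwell_cap
    implicit = 0.5 * float(ev.clicked) + 0.5 * dwell
    if ev.rating is None:
        return implicit  # no explicit input, rely on behavior alone
    explicit = (ev.rating - 1) / 4  # map 1-5 stars onto 0-1
    return explicit_weight * explicit + (1 - explicit_weight) * implicit


print(preference_score(Interaction("article-1", clicked=True, dwell_seconds=30)))
```

Weighting explicit ratings more heavily reflects the assumption that a deliberate rating is a clearer statement of preference than a click, which can be accidental.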

What are guardrails in the context of online preference collection?

Safeguards like data minimization, transparency about collection, opt-out options, and rules to prevent manipulation or bias.
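Two of these safeguards, opt-out and data minimization, can be sketched as a filter that runs before any event is stored. The function name `apply_guardrails`, the `ALLOWED_FIELDS` allowlist, and the event field names are assumptions made for illustration.

```python
from typing import Optional

# Hypothetical allowlist: only these fields are ever stored (data minimization).
ALLOWED_FIELDS = {"user_id", "item_id", "event_type"}


def apply_guardrails(event: dict, opted_out_users: set) -> Optional[dict]:
    """Return a minimized copy of the event, or None if the user has opted out."""
    # Opt-out: collect nothing at all for users who declined tracking.
    if event.get("user_id") in opted_out_users:
        return None
    # Data minimization: drop every field that is not explicitly allowed.
    return {key: value for key, value in event.items() if key in ALLOWED_FIELDS}


raw = {"user_id": "u1", "item_id": "i9", "event_type": "click", "ip_address": "203.0.113.7"}
print(apply_guardrails(raw, opted_out_users={"u2"}))
```

An allowlist is used rather than a blocklist so that any new field added upstream is dropped by default until someone deliberately permits it.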

Why are guardrails important for user trust and safety?

They protect privacy, reduce abuse, promote fair recommendations, and give users control over their data.