Generative Illustration & Diffusion Models

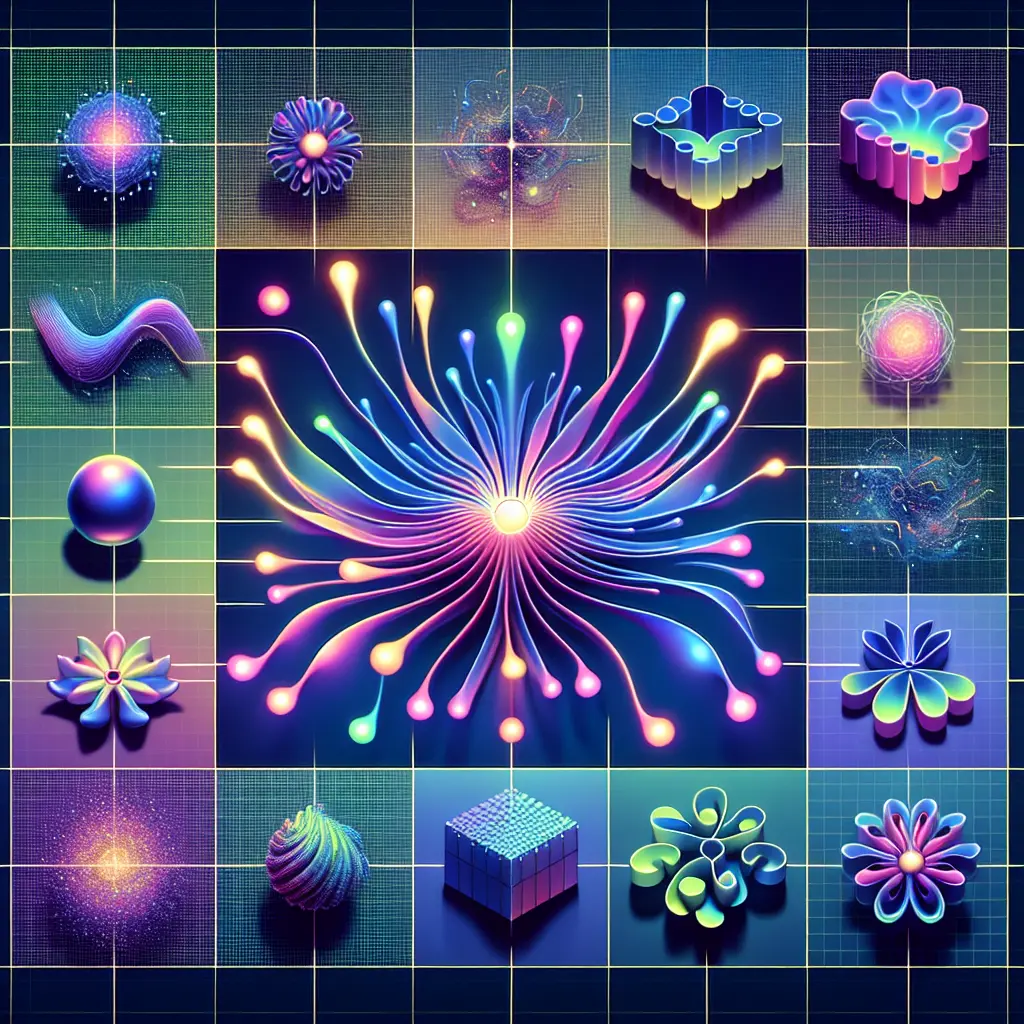

Generative Illustration & Diffusion Models refer to AI techniques that create original images or artworks from text or image prompts. Generative illustration is the broader practice of using neural networks to produce visuals. Diffusion models are one family of such networks: they start from random noise and iteratively refine it into a coherent image through a sequence of denoising steps. Together, these techniques make it possible to generate high-quality, imaginative illustrations automatically. They are changing digital art, design, and creative content production by offering a high degree of flexibility and realism.
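"Refining random noise" is easier to follow once you see the fixed forward process that a diffusion model is trained to undo. The sketch below is a minimal illustration with several assumptions. It uses the linear variance schedule from the original DDPM formulation (β rising from 1e-4 to 0.02 over 1,000 steps) and treats an image as a flat list of pixel values. It then jumps straight to any noise level t with x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε. The function names are illustrative and do not come from any library.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_1..beta_T, spaced linearly between beta_start and beta_end."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t: how much of the original signal survives."""
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noisy_sample(x0, t, alpha_bars, rng):
    """Jump directly to step t: x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps, eps ~ N(0, 1)."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]


rng = random.Random(0)                    # fixed seed so the noise is reproducible
betas = linear_beta_schedule(1000)        # T = 1000 steps, as in the original DDPM setup
alpha_bars = cumulative_alphas(betas)
x0 = [0.8, -0.3, 0.5, 0.1]                # toy "image": four pixel values scaled to [-1, 1]

for t in (0, 250, 999):
    # Early steps keep almost all of x0; by the last step the sample is essentially noise.
    print(t, round(alpha_bars[t], 5), noisy_sample(x0, t, alpha_bars, rng))
```

For this schedule, ᾱ_T ends up on the order of 10⁻⁵, so x_T is almost pure Gaussian noise. That is why generation can begin from a random draw and work backwards.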

💡 Key Takeaways

- Understand what generative illustration is and how diffusion models turn textual or visual prompts into original images.

- Learn how prompts, conditioning, and denoising steps guide the generation process through iterative refinement.

- Explore common creative applications of AI-generated visuals in illustration, design, concept art, and storytelling.

- Weigh the key limitations and ethical concerns of AI-generated art, including originality, copyright, and bias.

❓ Frequently Asked Questions

What is Generative Illustration?

Generative illustration uses neural networks to create original images from text or image prompts. It turns ideas into pictures without hand-drawn input.

What is a diffusion model?

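A diffusion model is a type of generative model trained to reverse a gradual noising process. During training, Gaussian noise is added to images step by step, and the network learns to predict and remove that noise. To generate a new image, the model starts from pure random noise and denoises it iteratively, guided by the prompt, until a coherent image that matches the prompt emerges.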
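The iterative denoising loop can be sketched as follows. This is a minimal sketch of DDPM-style ancestral sampling, and it makes three assumptions: a linear noise schedule over 1,000 steps, a network that predicts the added noise (ε), and the simple variance choice σ_t² = β_t. `oracle_predictor` and `TARGET` are hypothetical stand-ins for a trained, prompt-conditioned model. A real model would also receive an embedding of the prompt as input.

```python
import math
import random


def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule, alpha_t = 1 - beta_t, and cumulative products alpha_bar_t."""
    denom = max(num_steps - 1, 1)
    betas = [beta_start + (beta_end - beta_start) * i / denom for i in range(num_steps)]
    alphas = [1.0 - b for b in betas]
    alpha_bars, running = [], 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)
    return betas, alphas, alpha_bars


def ddpm_sample(predict_noise, size, num_steps=1000, seed=0):
    """DDPM-style ancestral sampling: start from pure noise and denoise one step at a time."""
    rng = random.Random(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = [rng.gauss(0.0, 1.0) for _ in range(size)]            # x_T ~ N(0, I)
    for t in reversed(range(num_steps)):
        eps_hat = predict_noise(x, t, alpha_bars)              # estimated noise in x_t
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        mean = [(xi - coef * ei) / math.sqrt(alphas[t]) for xi, ei in zip(x, eps_hat)]
        if t > 0:
            sigma = math.sqrt(betas[t])                        # simple choice: sigma_t^2 = beta_t
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean                                           # final step adds no noise
    return x


# Hypothetical stand-in for a trained network: it "knows" the clean target
# and returns exactly the noise that separates x_t from it.
TARGET = [0.2, -0.5, 0.9, 0.0]


def oracle_predictor(x_t, t, alpha_bars):
    a = alpha_bars[t]
    return [(xi - math.sqrt(a) * x0) / math.sqrt(1.0 - a) for xi, x0 in zip(x_t, TARGET)]


image = ddpm_sample(oracle_predictor, size=len(TARGET), num_steps=1000, seed=42)
print([round(v, 4) for v in image])
```

Because the oracle returns the exact noise, this sampler lands on `TARGET`. A real network's predictions are only approximate, so each step instead nudges the sample toward images that are plausible under the training data and consistent with the prompt.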

How do prompts and settings influence the results?

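Prompts describe the desired subject, style, and composition, and more specific prompts generally give more control over the result. Settings shape the generation process itself:

- The seed fixes the starting noise. Reusing the same seed with the same model, prompt, and settings typically reproduces the same image.
- The guidance strength (often called the guidance scale) controls how closely the output follows the prompt. Higher values trade variety for fidelity to the prompt.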
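The following is a minimal sketch of how these two settings act inside a sampler. It assumes the model uses classifier-free guidance, the scheme behind the guidance-scale setting in Stable Diffusion-style tools. Under that scheme, each denoising step blends a noise prediction made with the prompt and one made without it. The function names and numbers are hypothetical illustrations.

```python
import random


def classifier_free_guidance(eps_uncond, eps_cond, guidance_scale):
    """Combine two noise predictions: eps = eps_uncond + w * (eps_cond - eps_uncond).

    w = 0 ignores the prompt, w = 1 uses the conditional prediction as-is,
    and w > 1 exaggerates the prompt's influence.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


def initial_noise(seed, size):
    """Starting noise x_T drawn from a seeded generator, so a seed pins down the whole run."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


eps_uncond = [0.1, -0.2]   # hypothetical prediction without the prompt
eps_cond = [0.3, 0.0]      # hypothetical prediction with the prompt
for w in (0.0, 1.0, 7.5):
    print(w, classifier_free_guidance(eps_uncond, eps_cond, w))

# Same seed gives the same starting noise; a different seed gives a different one.
print(initial_noise(123, 3) == initial_noise(123, 3))
print(initial_noise(123, 3) == initial_noise(124, 3))
```

In Hugging Face Diffusers, for example, these settings correspond to the `guidance_scale` and `generator` arguments of the text-to-image pipelines. Real samplers apply the guided prediction at every denoising step rather than once.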

What should creators consider when using these tools?

Consider licensing and attribution for training data, ethical implications, potential biases or artifacts, and the need to refine outputs for professional use.