Hallucination Detection & Factuality Assessment+50

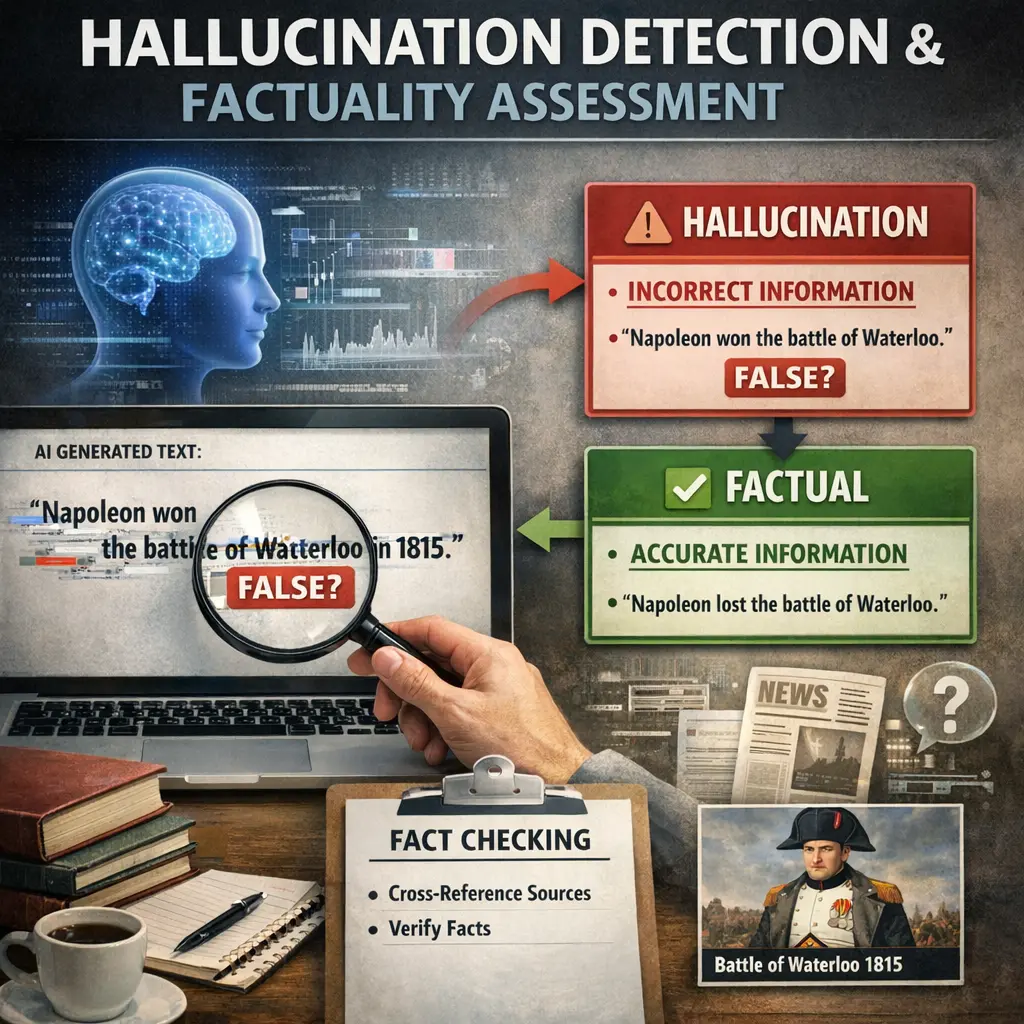

Hallucination Detection & Factuality Assessment in the context of LLM Evaluations (evals) refers to the process of identifying when a language model generates information that is untrue, misleading, or fabricated (“hallucinations”). This evaluation assesses the factual correctness of the model’s outputs by comparing them to reliable sources or ground truth data, ensuring that responses are accurate and trustworthy. Such assessments help improve model reliability and guide further development of large language models.

Hallucination Detection & Factuality Assessment+50

Hallucination Detection & Factuality Assessment in the context of LLM Evaluations (evals) refers to the process of identifying when a language model generates information that is untrue, misleading, or fabricated (“hallucinations”). This evaluation assesses the factual correctness of the model’s outputs by comparing them to reliable sources or ground truth data, ensuring that responses are accurate and trustworthy. Such assessments help improve model reliability and guide further development of large language models.

💡 Key Takeaways

- Distinguish hallucinations from uncertainty in AI outputs and why it matters.

- Detect hallucinations with practical checks such as cross-checking facts against trusted sources and assessing internal consistency.

- Assess factuality using evidence-based methods and simple metrics to gauge claim reliability.

- Establish a reproducible fact-checking workflow: collect sources, cite evidence, and decide when to flag or defer.

❓ Frequently Asked Questions

What is hallucination in AI language models?

Hallucination occurs when a model generates text that is not supported by the input, evidence, or real-world facts—plausible-sounding but false or invented statements.

What is factuality assessment?

Factuality assessment checks whether generated content is accurate and backed by reliable sources, data, or evidence, using automatic checks or human review.

How can you detect hallucinations in a quiz or article?

Look for claims lacking citations or source backing, statements that contradict known facts, or details that cannot be traced to the provided material.

What approaches help improve factuality in AI outputs?

Use grounding or retrieval-augmented generation to cite sources, prompt for evidence, run entailment checks, and involve human review to verify accuracy.