Hallucination Detection & Grounding Checks

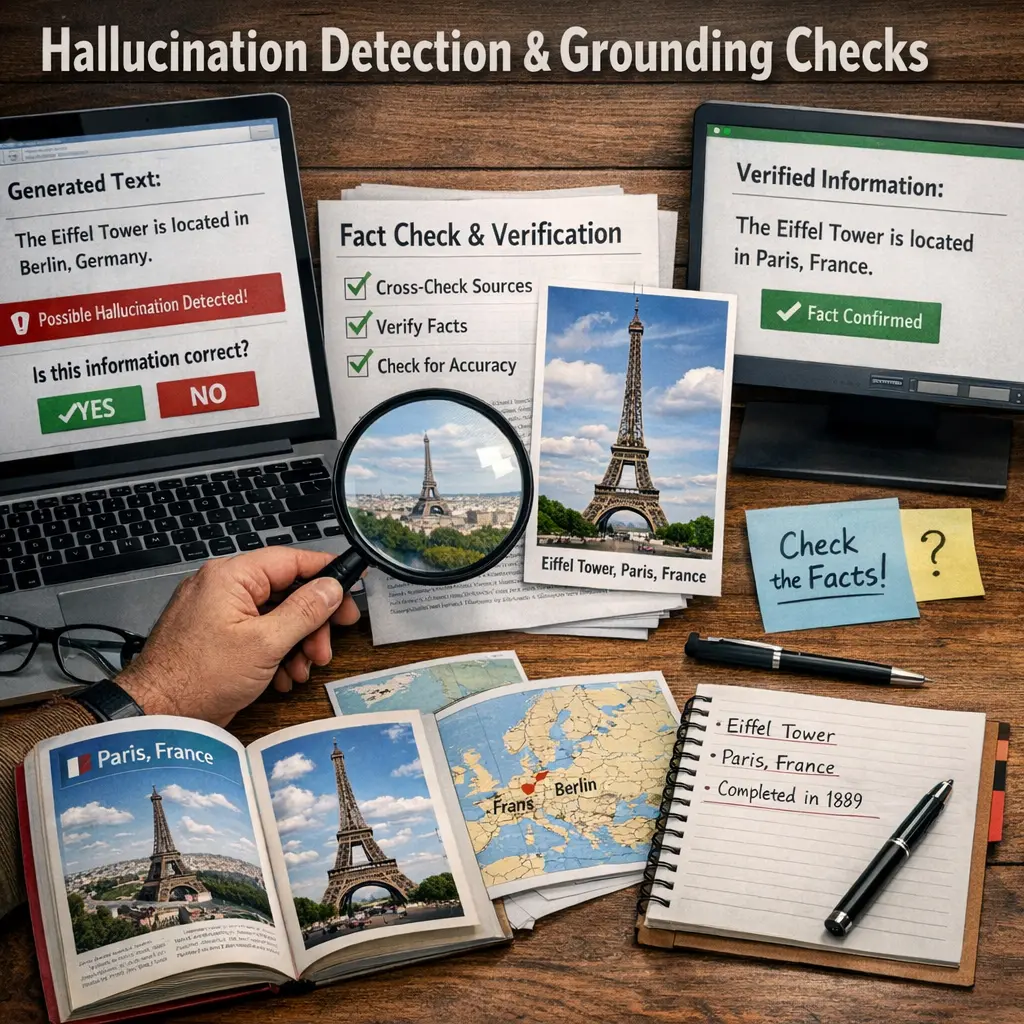

In agent architectures, hallucination detection and grounding checks are two related mechanisms. Hallucination detection identifies when an AI system generates false, misleading, or unsubstantiated information (hallucinations). Grounding checks verify that the system's outputs are supported by reliable sources or by the context it was given (grounding). Together, these checks help keep the agent's responses accurate and anchored in factual data, which improves system reliability and user trust in AI-generated content.

💡 Key Takeaways

- Understand what AI hallucination is and why it happens in language models.

- Learn how grounding checks verify claims against trusted sources and provide evidence.

- Explore practical techniques to reduce hallucinations, such as retrieval-augmented generation, prompt engineering, and source-aware generation.

- Design and implement evaluation workflows for detecting and correcting hallucinations, including metrics and human-in-the-loop practices.

❓ Frequently Asked Questions

What is hallucination in AI language models?

A hallucination is when the model outputs information that sounds plausible but is false or not supported by the input or reliable sources.

What are grounding checks and why do they matter?

Grounding checks verify that the model's claims are backed by evidence, data, or citations, helping ensure accuracy and trustworthiness.
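As a rough illustration of what "backed by evidence" can mean in code, the sketch below flags answer sentences whose content words barely appear in the supplied context. The function names, the stopword list, and the 0.6 threshold are all assumptions made for this example. Lexical overlap is only a crude proxy for support; production systems typically rely on stronger signals such as entailment models or a second model acting as a judge.

```python
import re

# Small illustrative stopword list (an assumption, not a standard resource).
STOPWORDS = {
    "the", "a", "an", "is", "are", "was", "were", "of", "in", "on", "to",
    "and", "or", "it", "that", "this", "by", "for", "with", "as", "at",
}


def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def support_score(sentence: str, context: str) -> float:
    """Fraction of the sentence's content words that also occur in the context."""
    words = content_words(sentence)
    if not words:
        return 1.0  # nothing to verify
    return len(words & content_words(context)) / len(words)


def ungrounded_sentences(answer: str, context: str, threshold: float = 0.6) -> list[str]:
    """Return the answer sentences whose support score falls below the threshold."""
    return [s for s in split_sentences(answer) if support_score(s, context) < threshold]


context = "The Eiffel Tower is in Paris. It was completed in 1889."
answer = "The Eiffel Tower was completed in 1889. It was designed by Leonardo da Vinci."
flagged = ungrounded_sentences(answer, context)
print(flagged)
```

Here the second sentence is flagged because none of its content words occur in the context. Note that a check like this can miss a false claim that happens to reuse the context's vocabulary, so it is best treated as a cheap first filter.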

How can you detect hallucinatory responses in a quiz setting?

Look for unsupported facts, claims not tied to provided context, missing citations, or statements that can’t be verified with reliable sources.
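Two of these signals, missing citations and citations that point at nothing, can be checked mechanically. The sketch below assumes a hypothetical convention in which the model cites sources with bracketed numbers such as `[1]`, placed before the sentence's closing punctuation. The function name and return shape are illustrative only.

```python
import re

# Matches bracketed numeric citation markers such as [1] or [12].
CITATION = re.compile(r"\[(\d+)\]")


def audit_citations(answer: str, num_sources: int) -> dict[str, list]:
    """Report sentences with no citation and citation ids outside 1..num_sources."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    uncited: list[str] = []
    invalid: set[int] = set()
    for sentence in sentences:
        ids = [int(m) for m in CITATION.findall(sentence)]
        if not ids:
            uncited.append(sentence)
        # A marker that refers to a source that was never provided is suspicious.
        invalid.update(i for i in ids if not 1 <= i <= num_sources)
    return {"uncited": uncited, "invalid_ids": sorted(invalid)}


answer = (
    "Paris is the capital of France [1]. "
    "It has 40 million residents. "
    "The Seine runs through it [3]."
)
report = audit_citations(answer, num_sources=2)
print(report)
```

With two sources supplied, the report lists the second sentence as uncited and `3` as an invalid citation id. A valid citation marker does not prove that the cited source actually supports the claim, so this audit complements a content-level check rather than replacing it.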

What practices help reduce hallucinations during generation?

Use retrieval-augmented generation, require explicit citations, implement fact-checking or human review, and design prompts that request evidence and clear boundaries on claims.
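One way to combine several of these practices is to build the prompt from retrieved passages, number them, and state the citation and refusal rules explicitly. The sketch below shows only the prompt construction. The function name, the instruction wording, and the refusal sentence are assumptions for illustration, and the retrieval step and the model call are left out.

```python
def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Assemble a prompt that restricts the model to numbered sources."""
    # Number the passages so the model can cite them as [1], [2], ...
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the numbered sources below.\n"
        "Cite the supporting source after each claim, for example [1].\n"
        "If the sources do not contain the answer, reply exactly: "
        "\"I don't know based on the provided sources.\"\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "When was the Eiffel Tower completed?",
    ["The Eiffel Tower is in Paris.", "It was completed in 1889."],
)
print(prompt)
```

Giving the model an explicit way to decline sets a clear boundary on claims, and the numbered sources make the resulting citations easy to audit afterwards. Instructions like these reduce unsupported output but do not guarantee compliance, which is why fact-checking or human review remains part of the workflow.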