Human factors and automation bias risk studies

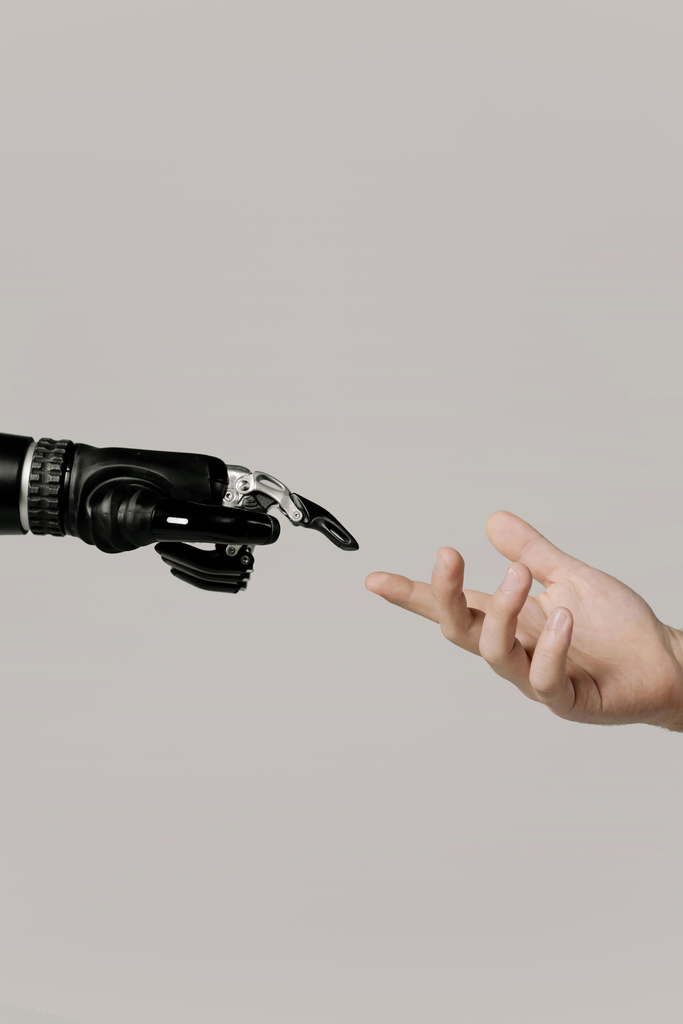

Human factors and automation bias risk studies examine how human behavior, cognitive limitations, and interactions with automated systems can lead to errors or overreliance on technology. These studies analyze the tendency of individuals to trust or follow automated recommendations, sometimes at the expense of their own judgment. The goal is to identify risks, improve system design, and develop training or safeguards that help users maintain appropriate oversight and decision-making abilities when working with automation.

💡 Key Takeaways

- Understand how human behavior and cognitive limits influence interactions with automated systems.

- Learn about automation bias and why people may overtrust AI recommendations.

- Identify risk assessment methods and analytical approaches used to study human–automation interactions.

- Explore strategies to mitigate automation bias through better interfaces, explanations, and training.

❓ Frequently Asked Questions

What is automation bias and why does it matter?

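Automation bias is the tendency to over-rely on automated systems or their recommendations, even when they are wrong. It shows up as errors of commission (acting on a faulty output) and errors of omission (missing a problem because the automation did not flag it). Both occur when users accept automated output without exercising independent judgment.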

What methods do researchers use to study human factors and automation bias?

Researchers use controlled experiments, simulations, field studies, cognitive task analysis, think-aloud protocols, and eye-tracking to assess trust, workload, decision quality, and how people interact with automation.
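As a rough illustration of how decision quality might be scored in a controlled experiment, the sketch below computes two simple reliance rates from trial records. The `Trial` fields, the `reliance_rates` function, and the sample data are hypothetical and chosen for illustration; real studies define and measure these outcomes in study-specific ways.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Trial:
    """One experimental trial (hypothetical record layout)."""
    advice_correct: bool   # was the automated recommendation right?
    followed_advice: bool  # did the participant accept it?


def reliance_rates(trials: list[Trial]) -> dict[str, Optional[float]]:
    """Return over- and under-reliance rates for a set of trials.

    over_reliance:  share of incorrect-advice trials where advice was followed
    under_reliance: share of correct-advice trials where advice was rejected
    A rate is None when there are no trials of the relevant kind.
    """
    wrong = [t for t in trials if not t.advice_correct]
    right = [t for t in trials if t.advice_correct]
    over = sum(t.followed_advice for t in wrong) / len(wrong) if wrong else None
    under = sum(not t.followed_advice for t in right) / len(right) if right else None
    return {"over_reliance": over, "under_reliance": under}


# Made-up data: three trials with correct advice, three with incorrect advice.
sample = [
    Trial(True, True), Trial(True, False), Trial(True, True),
    Trial(False, True), Trial(False, True), Trial(False, False),
]
rates = reliance_rates(sample)
print(rates)
```

A high over-reliance rate paired with a low under-reliance rate is the pattern usually associated with automation bias; the reverse pattern suggests distrust of the automation.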

What is AI risk assessment and what methods are used?

AI risk assessment identifies the potential failure modes of an AI system and estimates their likelihood and impact. Analysts use qualitative and quantitative methods such as fault tree analysis, failure mode and effects analysis (FMEA), scenario analysis, and probabilistic risk assessment to prioritize mitigations.
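To make the FMEA step concrete, here is a minimal sketch of the conventional risk priority number (RPN), computed as severity × occurrence × detection, with each factor rated on a 1 to 10 scale. The `FailureMode` class, the `rank_by_rpn` helper, and the example failure modes and ratings are hypothetical; they are not drawn from any particular study or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    """A failure mode with FMEA ratings (hypothetical example structure)."""
    name: str
    severity: int    # 1 (negligible) to 10 (catastrophic)
    occurrence: int  # 1 (rare) to 10 (almost certain)
    detection: int   # 1 (almost always caught) to 10 (almost never caught)

    def rpn(self) -> int:
        """Risk priority number: severity * occurrence * detection."""
        for value in (self.severity, self.occurrence, self.detection):
            if not 1 <= value <= 10:
                raise ValueError("FMEA ratings must be between 1 and 10")
        return self.severity * self.occurrence * self.detection


def rank_by_rpn(modes: list[FailureMode]) -> list[FailureMode]:
    """Sort failure modes from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn(), reverse=True)


# Illustrative ratings only; real values come from expert review.
modes = [
    FailureMode("Operator accepts incorrect recommendation", 8, 4, 7),
    FailureMode("Operator ignores correct alert", 6, 5, 3),
    FailureMode("Automation silently fails to alert", 9, 2, 4),
]
ranked = rank_by_rpn(modes)
for mode in ranked:
    print(f"{mode.rpn():4d}  {mode.name}")
```

The RPN is only a coarse prioritization aid: very different severity, occurrence, and detection profiles can produce the same product, so high-severity items are often reviewed separately regardless of rank.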

How can organizations reduce automation bias in practice?

Mitigations include training to calibrate trust, transparent and explainable AI, keeping humans in the loop, robust monitoring and audits, and scenario-based exercises to improve decision-making under automation.