Inter-Annotator Agreement: Cohen's Kappa and Krippendorff's Alpha

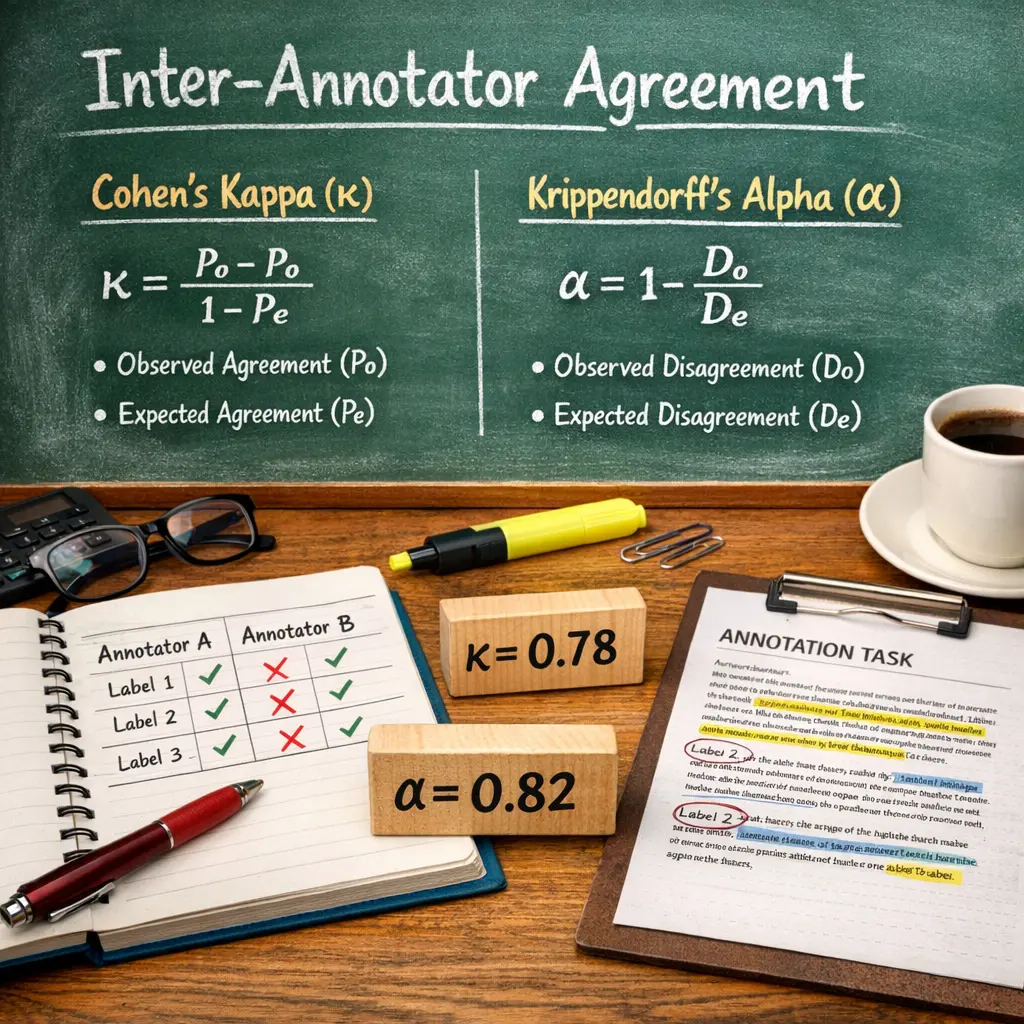

Inter-annotator agreement (IAA) measures how consistently different evaluators label the same data. Cohen's Kappa quantifies agreement between two annotators, correcting for the agreement expected by chance. Krippendorff's Alpha generalizes this to any number of annotators, accommodates different data types, and tolerates missing ratings. In LLM evaluations, these metrics help verify that human judgments of model outputs are reliable rather than the product of chance, which supports the quality and fairness of the evaluation process.

💡 Key Takeaways

- Define inter-annotator agreement and its importance for reliable labeling.

- Understand Cohen's Kappa: when to use it (two raters, nominal data) and how it adjusts for chance.

- Understand Krippendorff's Alpha: its flexibility for any number of raters and data types, and handling of missing data.

- Learn how to interpret the agreement scores and common pitfalls in reporting these metrics.

❓ Frequently Asked Questions

What is inter-annotator agreement (IAA) and why is it important?

IAA measures how consistently independent annotators label the same items. High IAA indicates reliable labels, reducing measurement error and supporting valid conclusions and better model performance.

What is Cohen's kappa and how is it interpreted?

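Cohen's kappa measures agreement between two annotators, corrected for chance. It ranges from -1 to 1, where 1 is perfect agreement and 0 is the agreement expected by chance. A common interpretation (the Landis and Koch scale): <0 poor, 0–0.20 slight, 0.21–0.40 fair, 0.41–0.60 moderate, 0.61–0.80 substantial, 0.81–1.00 almost perfect. These bands are conventions rather than strict thresholds.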
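To make the chance correction concrete, here is a minimal sketch of kappa computed as (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected from each annotator's label frequencies. The function name `cohens_kappa` and the sample labels are illustrative assumptions, not part of any particular library.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items (nominal labels)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items that received identical labels.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the two raters' marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1.0:
        # Both raters used a single identical label throughout: kappa is undefined (0/0).
        return float("nan")
    return (p_o - p_e) / (1 - p_e)

# Hypothetical judgments of ten model outputs by two annotators.
a = ["good", "good", "bad", "good", "bad", "bad", "good", "good", "bad", "good"]
b = ["good", "bad", "bad", "good", "bad", "good", "good", "good", "bad", "good"]
print(round(cohens_kappa(a, b), 3))  # 0.583
```

Here the annotators agree on 8 of 10 items (p_o = 0.80), but chance alone would give p_e = 0.52, so kappa lands at about 0.58, in the "moderate" band.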

What is Krippendorff's alpha and how is it interpreted?

Krippendorff's alpha is a versatile reliability measure that handles any number of raters, any data level (nominal, ordinal, interval, ratio), and missing data. Its maximum is 1 (higher is better): 1 = perfect agreement, 0 = chance-level agreement, and negative values indicate systematic disagreement.
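As an illustration of how alpha copes with several raters and missing ratings, the following is a minimal sketch for the nominal case only, using the coincidence-matrix formulation (alpha = 1 - D_o / D_e). The function name `krippendorff_alpha_nominal`, the `None` convention for missing ratings, and the sample data are assumptions made for this example; ordinal, interval, and ratio data need a different distance function.

```python
from collections import Counter
from itertools import permutations

def krippendorff_alpha_nominal(units):
    """units: one list of labels per item; None marks a missing rating."""
    coincidences = Counter()
    for ratings in units:
        values = [r for r in ratings if r is not None]
        m = len(values)
        if m < 2:
            continue  # an item with fewer than two ratings cannot be paired
        # Every ordered pair of ratings from different raters, weighted by 1/(m-1).
        for c, k in permutations(values, 2):
            coincidences[(c, k)] += 1 / (m - 1)
    # Marginal totals per label and the overall number of pairable ratings.
    totals = Counter()
    for (c, _k), weight in coincidences.items():
        totals[c] += weight
    n = sum(totals.values())
    # Unnormalized observed and expected disagreement (the common 1/n factor cancels).
    observed = sum(w for (c, k), w in coincidences.items() if c != k)
    expected = sum(totals[c] * totals[k] for c in totals for k in totals if c != k) / (n - 1)
    if expected == 0:
        return float("nan")  # no variation in labels: alpha is undefined
    return 1 - observed / expected

# Hypothetical ratings: five items, three annotators, some ratings missing.
units = [
    ["good", "good", "good"],
    ["good", "bad", None],
    ["bad", "bad", "bad"],
    ["good", "good", None],
    [None, "bad", "bad"],
]
print(round(krippendorff_alpha_nominal(units), 3))  # 0.694
```

Items with a missing rating still contribute through their remaining pairs, which is why no item has to be discarded here.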

When should you use Cohen's kappa versus Krippendorff's alpha?

Use Cohen's kappa when you have exactly two raters and nominal data. Use Krippendorff's alpha when there are more than two raters, when data are not strictly nominal, or when some ratings are missing.

What are common pitfalls when interpreting IAA scores?

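Kappa is sensitive to category prevalence and to rater bias (differences in how often each rater uses each category), so raw agreement can be high while kappa is low. Alpha can be influenced by the number of categories and by missing data. A high IAA does not guarantee validity, because annotators can agree consistently on the wrong label. When reporting, state the data type, number of raters, and sample size, and consider including confidence intervals.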
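The prevalence effect is easy to reproduce. The sketch below compares two hypothetical datasets with identical raw agreement (90%) but different label balance; the helper `kappa` and both datasets are made up for illustration.

```python
from collections import Counter

def kappa(rater_a, rater_b):
    """Cohen's kappa for two equally long lists of nominal labels."""
    n = len(rater_a)
    p_o = sum(x == y for x, y in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (p_o - p_e) / (1 - p_e)

# Skewed labels: "ok" dominates, and the raters never agree on "bad".
skewed_a = ["ok"] * 90 + ["ok"] * 5 + ["bad"] * 5
skewed_b = ["ok"] * 90 + ["bad"] * 5 + ["ok"] * 5

# Balanced labels: same 90 agreements and 10 disagreements, spread evenly.
balanced_a = ["ok"] * 45 + ["bad"] * 45 + ["ok"] * 5 + ["bad"] * 5
balanced_b = ["ok"] * 45 + ["bad"] * 45 + ["bad"] * 5 + ["ok"] * 5

print(round(kappa(skewed_a, skewed_b), 3))      # -0.053
print(round(kappa(balanced_a, balanced_b), 3))  # 0.8
```

Both pairs of raters agree on 90 of 100 items, yet the skewed set scores slightly below zero because chance agreement is already 0.905 when almost everything is labeled "ok". Reporting the label distribution alongside kappa makes this kind of result interpretable.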