Liability apportionment in multi-party AI systems

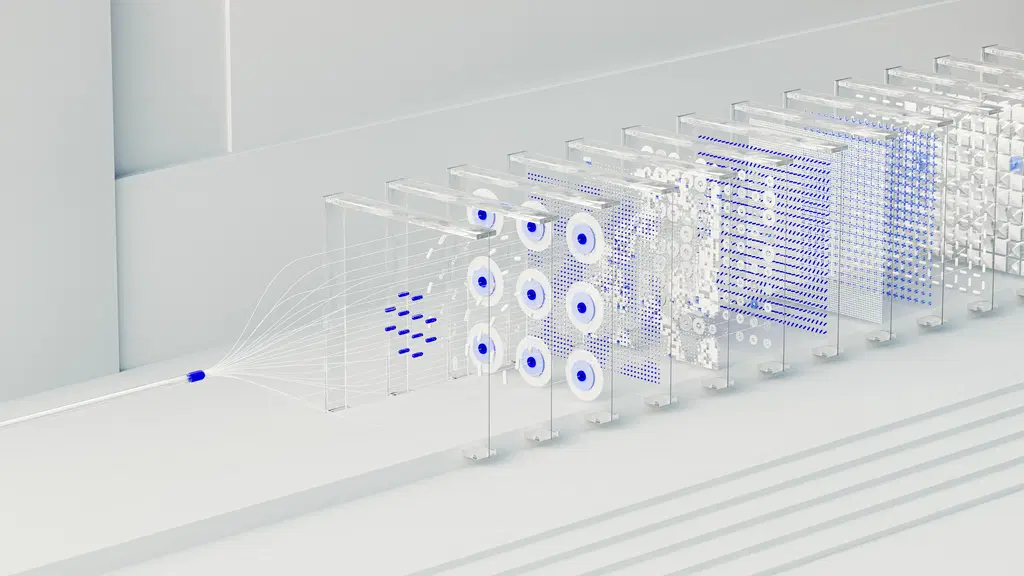

Liability apportionment in multi-party AI systems is the process of determining how responsibility is divided among the parties that develop, deploy, or operate an AI system when it causes harm or loss. It involves assessing the role and conduct of each stakeholder (such as developers, operators, and users) to decide how legal or financial accountability is shared. The assessment is often complicated by the system's complexity and by the interdependence of the parties' contributions.

💡 Key Takeaways

- Identify the parties involved in a multi-party AI system (developers, deployers, data providers, platform operators) and their potential liability.

- Explain how harm or loss is attributed and how liability is apportioned under contracts, regulatory frameworks, and industry standards.

- Recognize key factors that influence liability (negligence, data quality/provenance, security incidents, governance gaps) in generative AI.

- Learn governance and risk-management practices to allocate liability upfront (contracts, SLAs, audit trails, incident response) and improve security and compliance.

❓ Frequently Asked Questions

What is liability apportionment in multi-party AI systems?

Liability apportionment is the process of assigning responsibility for harm or loss to the appropriate parties involved in developing, deploying, or operating an AI system, based on their roles, actions, and fault.

Who are the typical parties involved in liability assessments for generative AI systems?

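Typical parties include model developers or owners, data providers, platform or service providers, integrators, operators, and users. Regulators and courts may also be involved, usually as the bodies that investigate or decide the allocation rather than as parties that bear it.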

What factors influence how liability is allocated in these systems?

Factors include each party's role and actions (design choices, data use, training, deployment settings), causation and foreseeability of the harm, contractual allocation of risk, negligence or non-compliance, and applicable laws or standards.
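To make the arithmetic concrete, the sketch below splits a loss in proportion to each party's share of fault and then applies any contractual cap. It is a simplified illustration only, not a statement of how any court or contract actually apportions liability. The `Party` class, the `fault_weight` scores, the `liability_cap` field, and the `apportion` function are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Party:
    name: str
    fault_weight: float                    # hypothetical relative fault score, >= 0
    liability_cap: Optional[float] = None  # hypothetical contractual cap, if any


def apportion(loss: float, parties: list[Party]) -> dict[str, float]:
    """Split `loss` in proportion to fault weights, then apply any caps."""
    total_weight = sum(p.fault_weight for p in parties)
    if total_weight <= 0:
        raise ValueError("at least one party must have a positive fault weight")

    shares: dict[str, float] = {}
    for p in parties:
        share = loss * p.fault_weight / total_weight
        if p.liability_cap is not None:
            share = min(share, p.liability_cap)
        shares[p.name] = share

    # Any amount above a cap is reported separately rather than
    # silently reassigned to the other parties.
    shares["unallocated"] = loss - sum(shares.values())
    return shares


if __name__ == "__main__":
    parties = [
        Party("developer", 5),
        Party("integrator", 3, liability_cap=25_000),
        Party("operator", 2),
    ]
    for name, amount in apportion(100_000, parties).items():
        print(f"{name}: {amount:,.0f}")
```

In this example the integrator's proportional share (30,000) exceeds its cap, so 5,000 is left unallocated. Who bears that remainder depends on the governing contract and law, which the sketch deliberately does not model.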

How can organizations prepare to address liability across the AI system lifecycle?

Establish clear governance and contracts, implement robust security and compliance controls, maintain evidence and data provenance, perform risk assessments, and define fault attribution processes and incident response plans.

What practices support effective liability management in multi-party AI deployments?

Document roles with a RACI matrix (Responsible, Accountable, Consulted, Informed), keep data provenance logs, obtain third-party certifications, set indemnities and liability limits in contracts, and run ongoing audits to demonstrate accountability and aid fault resolution.
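One way a provenance log can support fault resolution is by making after-the-fact edits detectable. The following minimal sketch chains each log entry to the previous one with a SHA-256 hash, so changing an earlier entry invalidates the chain. The entry fields (`actor`, `action`, `details`) and the function names are assumptions made for illustration. A production system would likely also need timestamps, digital signatures, and secure storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a log entry body using a stable JSON encoding."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list[dict], actor: str, action: str, details: dict) -> dict:
    """Append an entry that records the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action,
            "details": details, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Return True only if every entry is intact and correctly chained."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    append_entry(log, "data-provider", "dataset_delivered", {"dataset": "v1"})
    append_entry(log, "developer", "model_trained", {"dataset": "v1"})
    print(verify(log))                   # chain is intact
    log[0]["details"]["dataset"] = "v2"  # simulate tampering
    print(verify(log))                   # tampering is detected
```

A log like this does not decide who is at fault, but it gives each party a shared, verifiable record of who did what and in which order.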