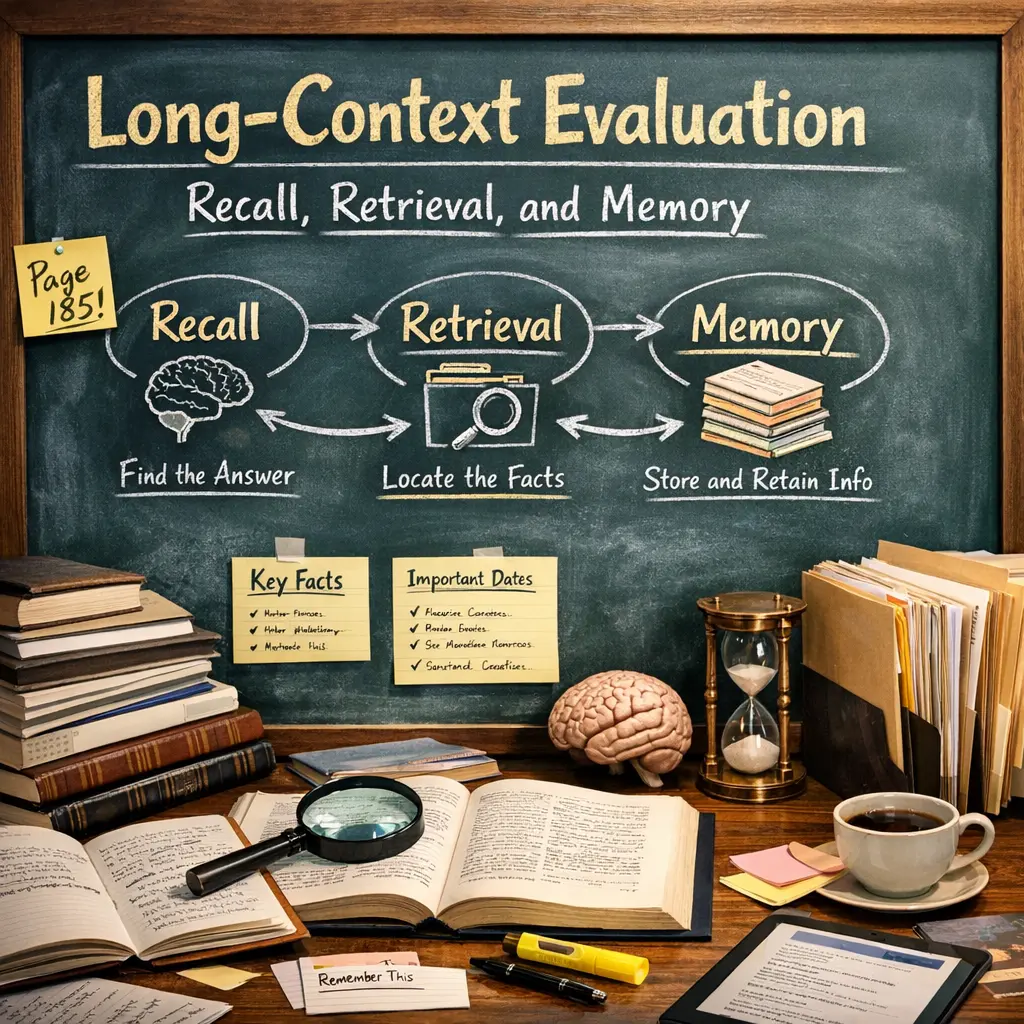

Long-Context Evaluation: Recall, Retrieval, and Memory

Long-context evaluation measures how well a large language model (LLM) handles, retains, and accurately retrieves information across extended contexts or lengthy inputs. These evaluations test whether a model can recall details stated earlier in the input, retrieve the facts relevant to a query, and maintain a coherent memory over long conversations or documents, so that its responses stay consistent and contextually accurate throughout a prolonged interaction.

💡 Key Takeaways

- Explain long-context evaluation and how recall, retrieval, and memory interact when answering from large texts.

- Distinguish recall (reproducing information already present in the model's context) from retrieval (pulling in relevant context from external sources or stored memory) in long-context tasks.

- Identify common challenges in long-context evaluation, such as memory decay and context fragmentation, and quick ways to mitigate them.

- Learn practical techniques to boost long-context performance, including chunking text, summarizing sections, and retrieval-augmented strategies.

- Understand how to evaluate long-context reasoning, choosing suitable metrics and designing robust test cases.
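
The last takeaway, designing test cases and choosing a metric, can be illustrated with a "needle in a haystack" style check: a single fact is placed at a chosen depth inside filler text, and the score is the fraction of cases where the response contains that fact. The following is a minimal sketch under stated assumptions, not a standard benchmark implementation. All names (`NeedleCase`, `build_needle_case`, `recall_accuracy`, `toy_model`) and the passcode example are hypothetical, and `toy_model` is a stand-in that only reads the tail of the prompt so the example runs without a real LLM.

```python
import re
from dataclasses import dataclass


@dataclass
class NeedleCase:
    prompt: str   # filler text with one inserted fact, followed by a question
    answer: str   # string a correct response must contain
    depth: float  # relative needle position (0.0 = start, 1.0 = end)


def build_needle_case(filler_sentences, needle, question, answer, depth):
    """Insert `needle` at a relative `depth` inside the filler sentences."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    index = round(depth * len(filler_sentences))
    sentences = filler_sentences[:index] + [needle] + filler_sentences[index:]
    prompt = " ".join(sentences) + "\n\nQuestion: " + question
    return NeedleCase(prompt=prompt, answer=answer, depth=depth)


def recall_accuracy(cases, model_fn):
    """Fraction of cases whose response contains the answer (case-insensitive)."""
    if not cases:
        return 0.0
    hits = sum(1 for c in cases if c.answer.lower() in model_fn(c.prompt).lower())
    return hits / len(cases)


def toy_model(prompt, window=300):
    """Hypothetical stand-in for an LLM: it only 'sees' the last `window` characters."""
    visible = prompt[-window:]
    match = re.search(r"The passcode is (\d+)\.", visible)
    return match.group(1) if match else "unknown"


# Assumed example data: 50 filler sentences and one needle at three depths.
filler = [f"Filler sentence number {i}." for i in range(50)]
cases = [
    build_needle_case(filler, "The passcode is 7421.", "What is the passcode?", "7421", d)
    for d in (0.0, 0.5, 1.0)
]
accuracy = recall_accuracy(cases, toy_model)
print(f"recall accuracy: {accuracy:.2f}")
```

Because the toy model reads only the end of the prompt, it finds the needle placed at depth 1.0 and misses the two placed earlier. Substring matching is a deliberately simple metric; a real evaluation would swap `toy_model` for an actual model call and might need a more tolerant answer check.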

❓ Frequently Asked Questions

What is long-context evaluation in AI?

It tests how well a model handles and preserves information across long input sequences, a task that draws on its memory, recall, and retrieval abilities.

How do recall, retrieval, and memory differ in this context?

Recall reproduces information already present in the current context; retrieval fetches relevant facts from external sources or stored memory; memory refers to the model's ability to retain and use information across a long task or conversation.

What makes long-context evaluation challenging?

Context windows are finite, coherence has to be maintained across the whole input, and accurately recalling or retrieving the relevant details becomes harder as the context grows.

How can you improve performance on long-context tasks?

Use retrieval-augmented techniques, memory-augmented models, chunking or sliding windows, and clear task prompts to better manage long inputs and maintain relevant information.
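
As a rough illustration of two of these techniques, the sketch below splits a long text into overlapping sliding windows and then picks the windows most relevant to a query, which is the selection step of a retrieval-augmented setup. It is a minimal sketch under simplifying assumptions: the function names `sliding_window_chunks` and `top_k_chunks` are hypothetical, text is split on whitespace rather than with a real tokenizer, and word overlap stands in for a real retriever such as an embedding search.

```python
def sliding_window_chunks(words, size, overlap):
    """Split a list of words into windows of `size` words sharing `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + size])
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def top_k_chunks(chunks, query, k=2):
    """Rank chunks by how many distinct query words they contain.

    Assumption: plain word overlap is a crude stand-in for a real retriever.
    Chunks with no overlap are dropped.
    """
    query_words = set(query.lower().split())
    scored = [
        (len(query_words & {w.lower() for w in chunk}), i)
        for i, chunk in enumerate(chunks)
    ]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, then original order
    return [chunks[i] for score, i in scored[:k] if score > 0]


# Assumed example text; in practice this would be a long document.
document = (
    "the meeting opened with budget updates and the launch date "
    "was moved to March after the security review raised concerns"
)
chunks = sliding_window_chunks(document.split(), size=8, overlap=3)
selected = top_k_chunks(chunks, "launch date", k=1)
print(" ".join(selected[0]))
```

The overlap keeps a fact that straddles a window boundary intact in at least one chunk, at the cost of some repeated text. Only the selected chunks would then be passed to the model together with the question, instead of the full document.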