Long-Term Memory Indexing & Pruning

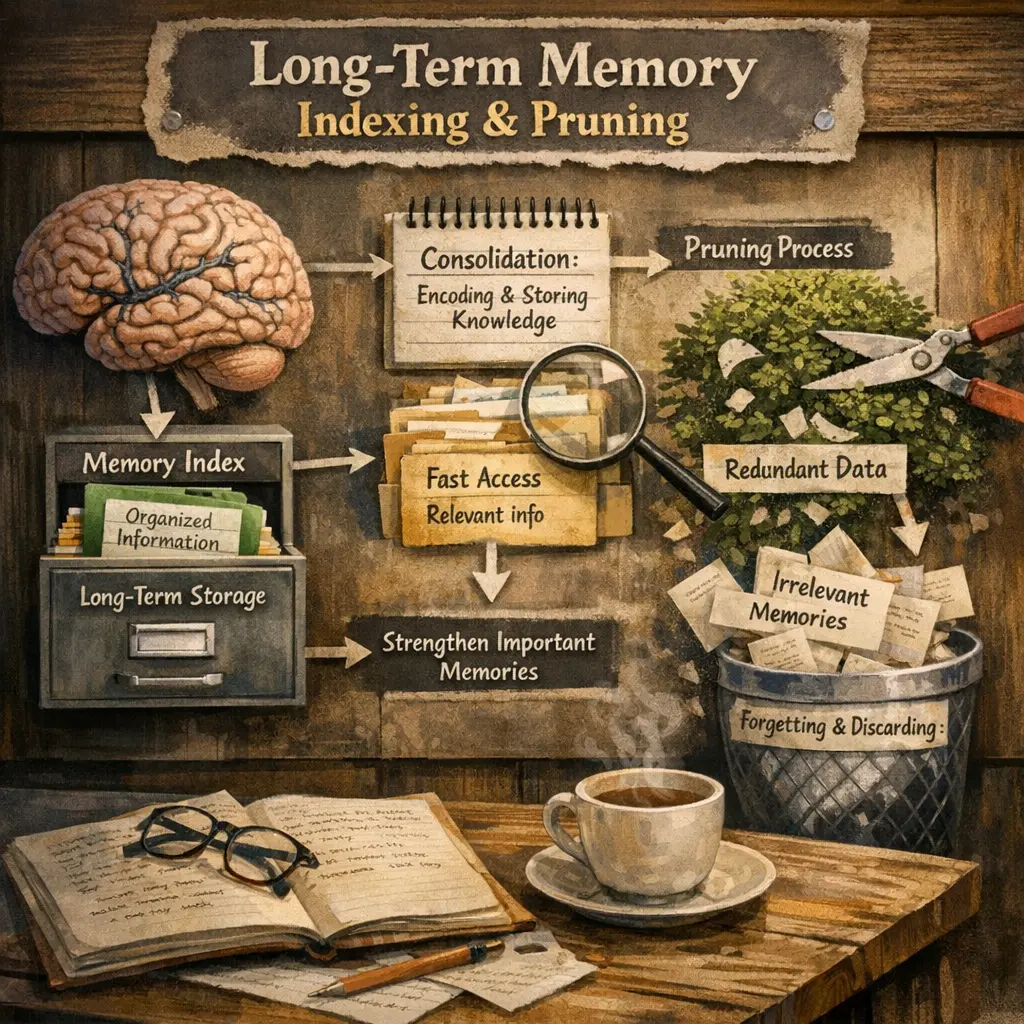

Long-Term Memory Indexing & Pruning in agent architecture refers to systematically organizing and managing an AI agent’s accumulated knowledge over time. Indexing enables efficient retrieval of relevant information from vast memory stores, while pruning removes outdated, irrelevant, or redundant data. This process ensures the agent maintains optimal performance, avoids information overload, and adapts to new tasks or environments by retaining only the most valuable and contextually appropriate memories for decision-making and learning.

Long-Term Memory Indexing & Pruning

Long-Term Memory Indexing & Pruning in agent architecture refers to systematically organizing and managing an AI agent’s accumulated knowledge over time. Indexing enables efficient retrieval of relevant information from vast memory stores, while pruning removes outdated, irrelevant, or redundant data. This process ensures the agent maintains optimal performance, avoids information overload, and adapts to new tasks or environments by retaining only the most valuable and contextually appropriate memories for decision-making and learning.

💡 Key Takeaways

- Understand what long-term memory indexing is and why fast retrieval matters.

- Learn common indexing strategies for long-term memory (e.g., tree- and hash-based indexes) and their trade-offs.

- Explore pruning techniques to remove outdated or low-utility memories while preserving important context.

- Identify criteria for deciding when to prune (recency, frequency, relevance) and how to monitor memory health and performance implications.

❓ Frequently Asked Questions

What is long-term memory indexing?

Indexing organizes stored memories so they can be found quickly, often by mapping content to search keys or embeddings.

Why prune long-term memory?

Pruning removes outdated, redundant, or rarely used memories to save space, reduce retrieval noise, and improve speed and accuracy.

What criteria guide pruning?

Pruning uses criteria like recency, usefulness for goals, access frequency, redundancy with other memories, and potential to improve future recall.

How do indexing and pruning work together?

Indexing enables fast retrieval by organizing memories; pruning keeps the memory set lean, reducing interference and improving recall efficiency.