Multimodal LLM Evaluation Fundamentals

Multimodal LLM Evaluation Fundamentals refer to the foundational principles and methods used to assess large language models (LLMs) that process and generate information across multiple modalities, such as text, images, and audio. LLM evaluations (evals) systematically measure a model’s accuracy, robustness, and alignment with human expectations by testing its performance on diverse multimodal tasks. Applied well, these fundamentals help ensure that LLMs behave reliably and ethically in real-world scenarios that mix formats.

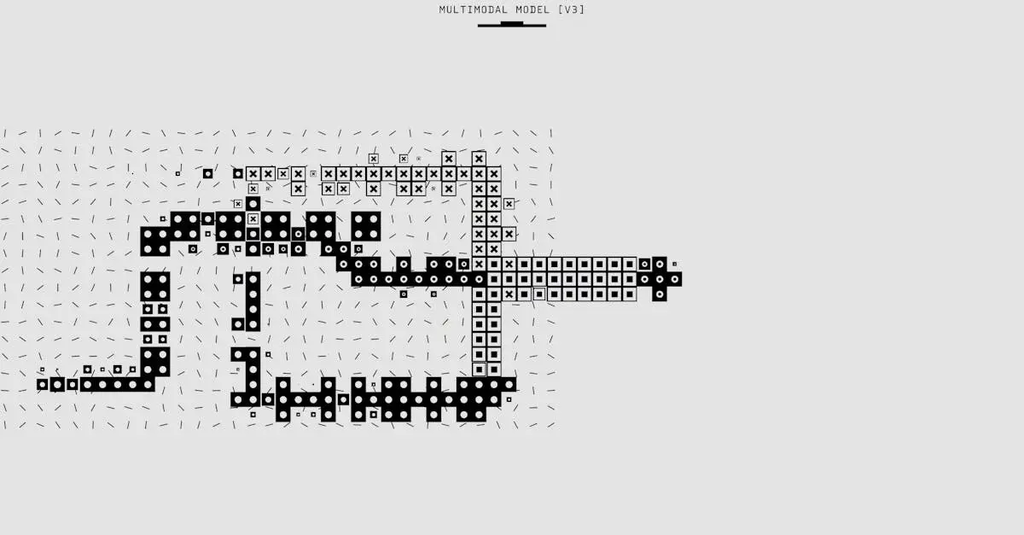

💡 Key Takeaways

- Understand what multimodal LLMs are and the modalities they support (e.g., text, images, video, audio).

- Distinguish intrinsic vs. extrinsic evaluation and know common automatic and human metrics for multimodal tasks.

- Design robust evaluations by prioritizing dataset quality, cross-modal alignment, fair baselines, and thorough error analysis.

- Build and interpret a practical evaluation workflow, from task selection and metric computation to reporting limitations.
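The workflow in these takeaways can be sketched as a small evaluation loop. This is a minimal sketch under stated assumptions: the `Example` record, the `normalize` helper, and the `predict` callable are hypothetical stand-ins for your own data format and model, and exact match is only a sensible metric for short-answer tasks such as VQA. Captioning or retrieval would plug in different metrics. The loop reports per-task accuracy next to the sample size and keeps the failed items for error analysis.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Example:
    # Hypothetical record format: one evaluation item for one task.
    task: str       # e.g. "vqa" or "captioning"
    inputs: dict    # e.g. {"image_path": ..., "question": ...}
    reference: str  # ground-truth answer


def normalize(text: str) -> str:
    # Light normalization so that "Red." and "red" count as the same answer.
    return text.strip().lower().rstrip(".")


def evaluate(examples: List[Example],
             predict: Callable[[dict], str]) -> Tuple[dict, List[dict]]:
    """Exact-match accuracy per task, plus the failed items for error analysis."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    failures: List[dict] = []
    for ex in examples:
        pred = predict(ex.inputs)
        total[ex.task] += 1
        if normalize(pred) == normalize(ex.reference):
            correct[ex.task] += 1
        else:
            failures.append({"task": ex.task, "prediction": pred,
                             "reference": ex.reference, "inputs": ex.inputs})
    report = {task: {"n": total[task], "accuracy": correct[task] / total[task]}
              for task in total}
    return report, failures


# Usage with a stand-in "model" that always answers "Red.".
data = [
    Example("vqa", {"question": "What color is the bus?"}, "red"),
    Example("vqa", {"question": "How many dogs are there?"}, "2"),
]
report, failures = evaluate(data, lambda inputs: "Red.")
print(report)  # {'vqa': {'n': 2, 'accuracy': 0.5}}
```

Keeping `n` beside each score and returning the failures, rather than a single headline number, makes it easier to report limitations and to spot systematic errors.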

❓ Frequently Asked Questions

What is multimodal LLM evaluation?

It’s the process of assessing LLMs that handle multiple data types (text, images, audio, etc.) on tasks such as image captioning, visual question answering (VQA), and cross-modal retrieval, using metrics that reflect accuracy, relevance, and robustness.

What metrics are commonly used?

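For captioning and other text generation: BLEU, ROUGE, METEOR, and CIDEr (CIDEr was designed specifically for image captioning). For VQA: accuracy, often a consensus accuracy computed against several human answers. For retrieval: Recall@K, Precision@K, or mean average precision (mAP). For image-text alignment: CLIPScore. Complement automatic metrics with human evaluation of overall quality.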
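As a rough illustration of how several of these metrics are computed, here are minimal pure-Python versions of Recall@K, Precision@K, average precision (whose mean over all queries is mAP), a simplified VQA accuracy, and CLIPScore. They are sketches, not reference implementations: `vqa_accuracy` omits the answer normalization and annotator-subset averaging of the official VQA evaluation, the retrieval functions assume a non-empty relevant set, and `clip_score` assumes you already have CLIP image and text embeddings (the weight w = 2.5 follows the original CLIPScore definition). For the n-gram metrics, established tools (for example sacreBLEU for BLEU, or the COCO caption evaluation code for METEOR and CIDEr) are usually safer than hand-rolled versions.

```python
import math
from typing import List, Sequence, Set


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    # Fraction of all relevant items that appear in the top-k results.
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / len(relevant)


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    # Fraction of the top-k results that are relevant.
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / k


def average_precision(ranked: Sequence[str], relevant: Set[str]) -> float:
    # Mean of precision@i over the ranks i where a relevant item appears;
    # mAP is this value averaged over all queries.
    hits, total = 0, 0.0
    for i, item in enumerate(ranked, start=1):
        if item in relevant:
            hits += 1
            total += hits / i
    return total / len(relevant)


def vqa_accuracy(prediction: str, human_answers: List[str]) -> float:
    # Simplified consensus accuracy: full credit once at least 3 annotators
    # gave exactly the predicted answer, partial credit below that.
    matches = sum(1 for a in human_answers if a == prediction)
    return min(matches / 3, 1.0)


def clip_score(image_emb: Sequence[float], text_emb: Sequence[float],
               w: float = 2.5) -> float:
    # CLIPScore = w * max(cos(image, text), 0) on precomputed CLIP embeddings.
    dot = sum(a * b for a, b in zip(image_emb, text_emb))
    norm = (math.sqrt(sum(a * a for a in image_emb))
            * math.sqrt(sum(b * b for b in text_emb)))
    return w * max(dot / norm, 0.0)


ranked = ["img3", "img1", "img7", "img2"]  # system ranking for one text query
relevant = {"img1", "img2"}                 # ground-truth matches
print(recall_at_k(ranked, relevant, k=2))   # 0.5
print(average_precision(ranked, relevant))  # 0.5
print(vqa_accuracy("red", ["red"] * 2 + ["maroon"] * 8))
print(clip_score([1.0, 0.0], [1.0, 0.0]))   # 2.5
```

Each function sees one query or one item; dataset-level numbers are averages of these per-item scores.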

How should you design a fair evaluation dataset?

Cover diverse modalities and tasks with clear prompts, ensure high-quality ground truth, split data into train/val/test with no leakage (for example, the same image should not appear in more than one split), include challenging cases, and account for potential biases in the data.
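Leakage in multimodal data often comes from the same image (or audio clip) appearing in several splits with different questions or captions. A minimal sketch of a group-aware split, assuming each example is a dict with a hypothetical `image_id` field: hashing the group id sends every example of that group to the same split, and the assignment stays stable across runs and machines. The function names and the 10% validation/test fractions are illustrative choices.

```python
import hashlib
from typing import Dict, List


def split_for(group_id: str, val_frac: float = 0.1, test_frac: float = 0.1) -> str:
    # Hash the group id to a stable number in [0, 1) so the same group
    # always lands in the same split.
    digest = hashlib.sha256(group_id.encode("utf-8")).hexdigest()
    u = int(digest[:8], 16) / 0x100000000
    if u < test_frac:
        return "test"
    if u < test_frac + val_frac:
        return "val"
    return "train"


def group_split(examples: List[dict], key: str = "image_id") -> Dict[str, List[dict]]:
    # All examples sharing a key (e.g. several questions about one image)
    # go to the same split, so no image is seen in both train and test.
    splits: Dict[str, List[dict]] = {"train": [], "val": [], "test": []}
    for ex in examples:
        splits[split_for(ex[key])].append(ex)
    return splits


# 100 questions about 20 images: five questions per image.
examples = [{"image_id": f"img{i % 20}", "question": f"q{i}"} for i in range(100)]
splits = group_split(examples)
print({name: len(items) for name, items in splits.items()})
```

With few groups the realized split sizes can drift from the target fractions, since whole groups move together; that is the price of guaranteeing no overlap.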

What are common pitfalls to avoid?

Relying on a single metric, using synthetic or biased data, not reporting variability (such as confidence intervals), ignoring robustness to noise or distribution shifts, skipping error analysis, and neglecting reproducibility.
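One way to avoid the "not reporting variability" pitfall is to attach a confidence interval to every headline score. The sketch below uses a percentile bootstrap over per-example scores (for instance, 1/0 correctness on a VQA test set); the function name, the 1,000 resamples, and the other defaults are illustrative choices, and the fixed seed is there so the reported interval can be reproduced.

```python
import random
from statistics import mean
from typing import List, Tuple


def bootstrap_ci(scores: List[float], n_resamples: int = 1000,
                 alpha: float = 0.05, seed: int = 0) -> Tuple[float, float, float]:
    """Return (mean, lower, upper) for a percentile bootstrap interval."""
    rng = random.Random(seed)  # fixed seed makes the interval reproducible
    n = len(scores)
    # Resample examples with replacement and record each resample's mean.
    resampled_means = sorted(mean(rng.choices(scores, k=n))
                             for _ in range(n_resamples))
    lo_idx = int((alpha / 2) * n_resamples)
    hi_idx = min(n_resamples - 1, int((1 - alpha / 2) * n_resamples))
    return mean(scores), resampled_means[lo_idx], resampled_means[hi_idx]


# Per-example correctness (1 = correct) for a small, illustrative test set.
scores = [1, 0, 1, 1, 0, 1, 0, 1, 1, 1]
m, lo, hi = bootstrap_ci(scores)
print(f"accuracy = {m:.2f} (95% CI {lo:.2f} to {hi:.2f})")
```

On a test set this small the interval is wide, which is exactly the information a single accuracy number hides.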