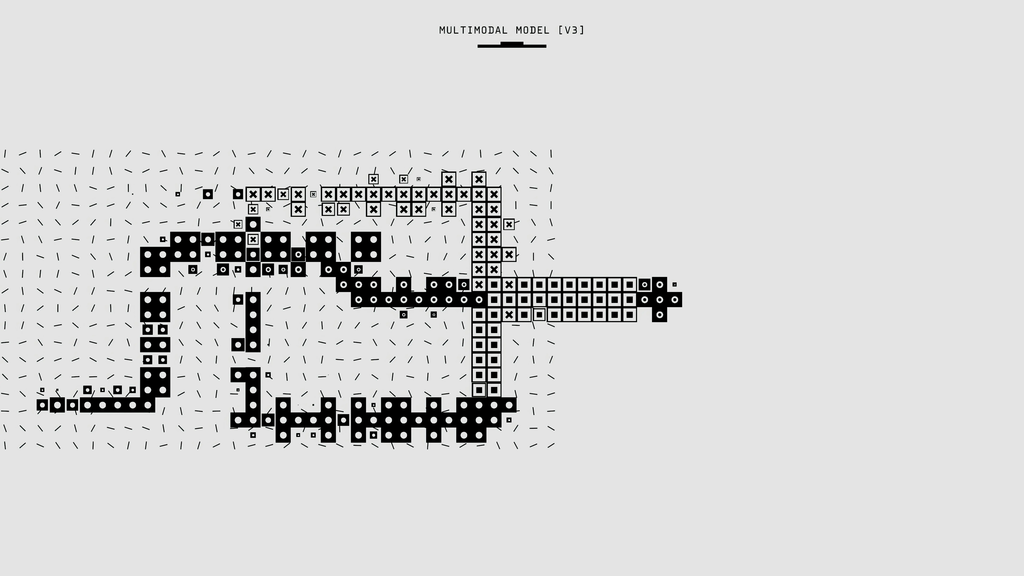

Multimodal risk assessment for text-image-audio models

Multimodal risk assessment for text-image-audio models is the systematic evaluation of risks and vulnerabilities in artificial intelligence systems that process and integrate information from multiple data types: text, images, and audio. The assessment aims to identify issues such as bias, misinformation, privacy breaches, and unintended harmful outputs, so that the models operate safely, ethically, and reliably across real-world scenarios involving complex multimodal inputs.

💡 Key Takeaways

- Define multimodal risk assessment and its purpose for text-image-audio AI systems.

- Identify key risk categories across modalities, including privacy, bias, safety, and copyright concerns.

- Learn evaluation methods to test cross-modal vulnerabilities such as adversarial inputs and data leakage.

- Explore mitigation strategies and governance practices to reduce multimodal risks, including data handling, monitoring, and risk reporting.

❓ Frequently Asked Questions

What is multimodal risk assessment for text-image-audio models?

A systematic evaluation of risks and vulnerabilities in AI systems that process and integrate text, images, and audio, focusing on safety, fairness, privacy, and reliability.

What risks does multimodal assessment address?

Bias and discrimination, privacy and data consent issues, content safety and misinformation, misalignment with user intent, and weak robustness to noisy inputs or adversarial manipulation across modalities.

What methods are used in multimodal risk assessment?

Threat modeling, risk scoring, red-teaming/adversarial testing, bias and fairness audits, privacy impact assessments, data governance reviews, and interpretability analyses.
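
Of the methods above, risk scoring is the easiest to illustrate in code. The following is a minimal sketch, assuming the common likelihood-times-impact convention on 1 to 5 scales. The `Risk` class, the `rank_risks` and `severity_band` helpers, the band thresholds, and the example register entries are all hypothetical illustrations, not part of any standard or of a specific assessment framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """One entry in a hypothetical multimodal risk register."""
    name: str
    modality: str    # "text", "image", "audio", or "cross-modal"
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    def __post_init__(self):
        # Reject values outside the assumed 1-5 scales.
        for field_name in ("likelihood", "impact"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Likelihood x impact: a common convention, assumed here.
        return self.likelihood * self.impact


def rank_risks(risks):
    """Return the risks sorted from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


def severity_band(score):
    """Map a score to a coarse band; the thresholds are illustrative only."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


# Illustrative entries only; real values would come from the assessment itself.
register = [
    Risk("Speaker identity leaked from audio", "audio", 3, 5),
    Risk("Adversarial text embedded in an image", "cross-modal", 4, 4),
    Risk("Biased image captions", "image", 3, 3),
    Risk("Noisy input degrades text output", "text", 4, 1),
]

for risk in rank_risks(register):
    print(f"{risk.score:>2}  {severity_band(risk.score):<6}  {risk.modality:<11}  {risk.name}")
```

A ranked register like this is only a prioritization aid. The scores are as good as the likelihood and impact estimates behind them, which in practice would draw on the other methods listed, such as red-teaming results and privacy impact assessments.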

Why is multimodal risk assessment important?

Because integrating multiple data types introduces new failure modes and privacy concerns. Assessing these risks helps make AI systems safer, more trustworthy, and compliant.