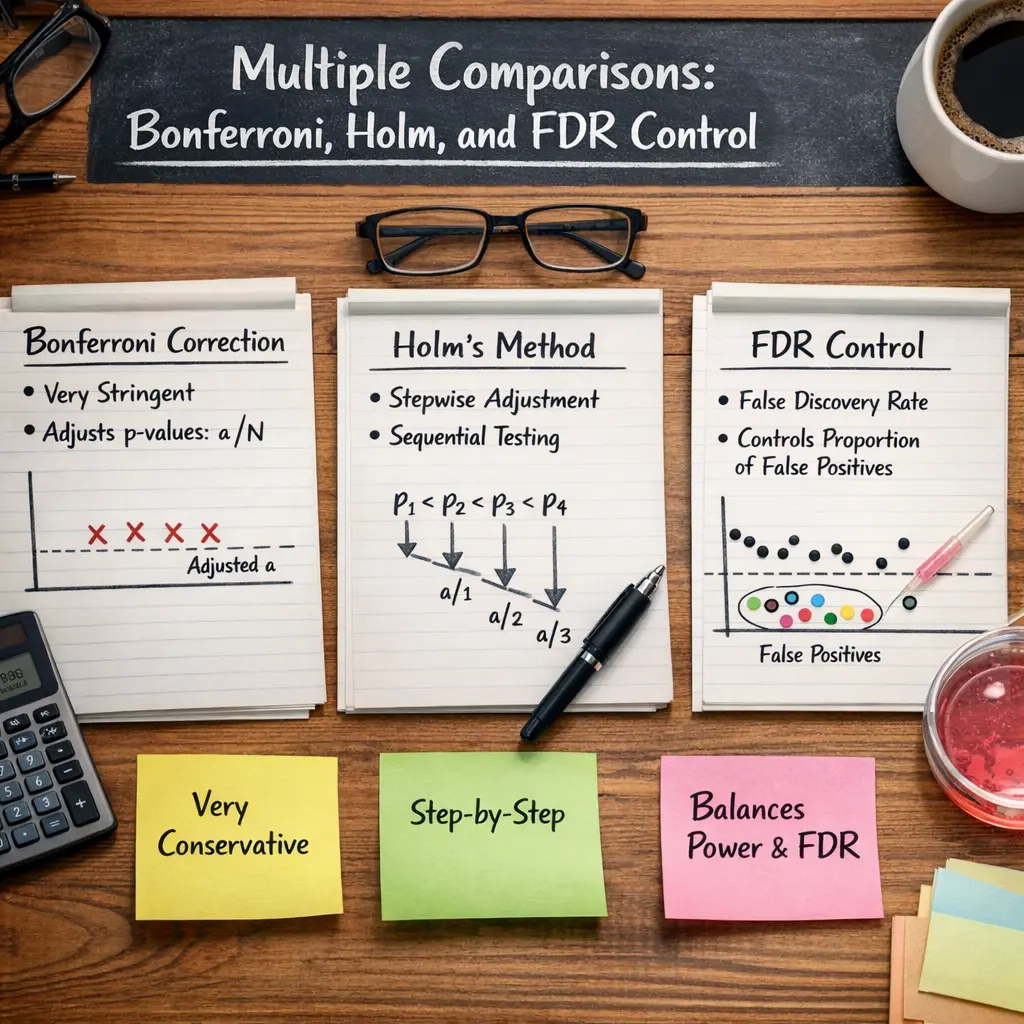

Multiple Comparisons: Bonferroni, Holm, and FDR Control

The multiple comparisons problem arises when several hypotheses are tested simultaneously: each additional test raises the chance of at least one false positive. The Bonferroni method controls the family-wise error rate by dividing the significance threshold by the number of tests, which makes it more stringent. The Holm method is a step-down alternative that tests hypotheses sequentially, from the smallest p-value upward, and is less conservative than Bonferroni. False Discovery Rate (FDR) control, such as the Benjamini-Hochberg procedure, limits the expected proportion of false positives among the rejected hypotheses. In LLM evaluations, these methods help keep results reliable when comparing multiple models or metrics.

💡 Key Takeaways

- Understand why many statistical tests raise the risk of false positives and why correction is needed.

- Learn the Bonferroni correction and how to apply it to p-values or the significance level to control the family-wise error rate.

- Learn the Holm-Bonferroni method as a stepwise, less conservative alternative that often improves power.

- Understand the Benjamini-Hochberg false discovery rate (FDR) control and when it’s appropriate to use.

- Compare when to use Bonferroni, Holm, or FDR based on study goals and tolerance for false positives.

❓ Frequently Asked Questions

What is the multiple comparisons problem and why do we correct p-values?

When you perform many statistical tests, the chance of at least one false positive increases. Corrections adjust significance criteria or p-values to control an error rate, either the family-wise error rate (FWER) or the false discovery rate (FDR), so findings are more reliable.
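The growth in false-positive risk can be made concrete with a small sketch. It assumes the tests are independent and that every null hypothesis is true, which is a simplification; the function name `familywise_error_rate` is illustrative and not taken from any library.

```python
def familywise_error_rate(alpha: float, m: int) -> float:
    """Chance of at least one false positive across m independent tests,
    assuming every null hypothesis is true and each test uses level alpha."""
    return 1.0 - (1.0 - alpha) ** m


# Uncorrected risk at alpha = 0.05 for a growing number of tests.
for m in (1, 5, 20, 100):
    print(f"m={m:>3}  FWER={familywise_error_rate(0.05, m):.3f}")
```

Under these assumptions, 20 uncorrected tests at alpha = 0.05 already carry roughly a 64% chance of at least one false positive.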

How does the Bonferroni correction work and when should I use it?

Bonferroni multiplies each p-value by the number of tests, capping the result at 1 (equivalently, it compares the raw p-values to alpha divided by the number of tests). It strongly controls the family-wise error rate but can be very conservative with many tests; use it when false positives are especially costly.
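A minimal sketch of the correction in plain Python follows. The function name `bonferroni` and the example p-values are made up for illustration.

```python
def bonferroni(p_values, alpha=0.05):
    """Return (adjusted p-values, reject decisions) under Bonferroni."""
    m = len(p_values)
    # Adjusted p-value: multiply by the number of tests, capped at 1.
    adjusted = [min(1.0, p * m) for p in p_values]
    # Equivalent decision rule: compare each raw p-value to alpha / m.
    reject = [p <= alpha / m for p in p_values]
    return adjusted, reject


# Hypothetical p-values from five comparisons.
p_values = [0.001, 0.012, 0.021, 0.035, 0.3]
adjusted, reject = bonferroni(p_values)
print(adjusted)
print(reject)
```

With five tests the per-test threshold is 0.05 / 5 = 0.01, so only the first hypothesis in this made-up example is rejected.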

What is the Holm correction and how is it different from Bonferroni?

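Holm is a step-down procedure: order the p-values from smallest to largest and compare each in turn to alpha divided by the number of hypotheses not yet rejected. Stop at the first p-value that exceeds its threshold; that hypothesis and all later ones are retained. Holm rejects everything Bonferroni rejects and often more, so it is less conservative while still controlling the family-wise error rate.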
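The step-down logic can be sketched as below. The name `holm` and the example p-values are illustrative, not drawn from a library.

```python
def holm(p_values, alpha=0.05):
    """Return reject decisions (in the original order) under Holm's method."""
    m = len(p_values)
    # Indices of the hypotheses, sorted by ascending p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        # m - rank hypotheses have not yet been rejected at this step.
        if p_values[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # first failure: retain this and all larger p-values
    return reject


# Hypothetical p-values from five comparisons.
print(holm([0.001, 0.012, 0.021, 0.035, 0.3]))
```

On these made-up values Holm rejects the first two hypotheses (0.001 ≤ 0.05/5 and 0.012 ≤ 0.05/4) and stops at the third (0.021 > 0.05/3), whereas a Bonferroni threshold of 0.01 would reject only the first.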

What is FDR control (e.g., Benjamini–Hochberg) and when should I use it?

FDR control limits the expected proportion of false discoveries among rejected hypotheses. It is less stringent than FWER methods and offers more power when the number of tests is large, which makes it well suited to exploratory, high-throughput analyses.
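A minimal sketch of the Benjamini-Hochberg step-up procedure follows. The function name `benjamini_hochberg`, the target level `q`, and the example p-values are illustrative. The standard guarantee is usually stated for independent or positively dependent tests, and this sketch takes that setting for granted.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return reject decisions (in the original order) under Benjamini-Hochberg."""
    m = len(p_values)
    # Indices of the hypotheses, sorted by ascending p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    k = 0  # largest rank whose p-value falls under its threshold
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank * q / m:
            k = rank
    # Reject the k smallest p-values, even if some of them missed
    # their own threshold along the way (this is the step-up part).
    reject = [False] * m
    for i in order[:k]:
        reject[i] = True
    return reject


# Hypothetical p-values from five comparisons.
print(benjamini_hochberg([0.001, 0.012, 0.021, 0.035, 0.3]))
```

On these made-up values the thresholds are 0.01, 0.02, 0.03, 0.04, and 0.05, so the four smallest p-values are rejected. That is more than either FWER method would reject on the same data, which is the trade the procedure makes: more discoveries in exchange for tolerating a controlled fraction of false ones.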