Performance metrics selection and thresholds

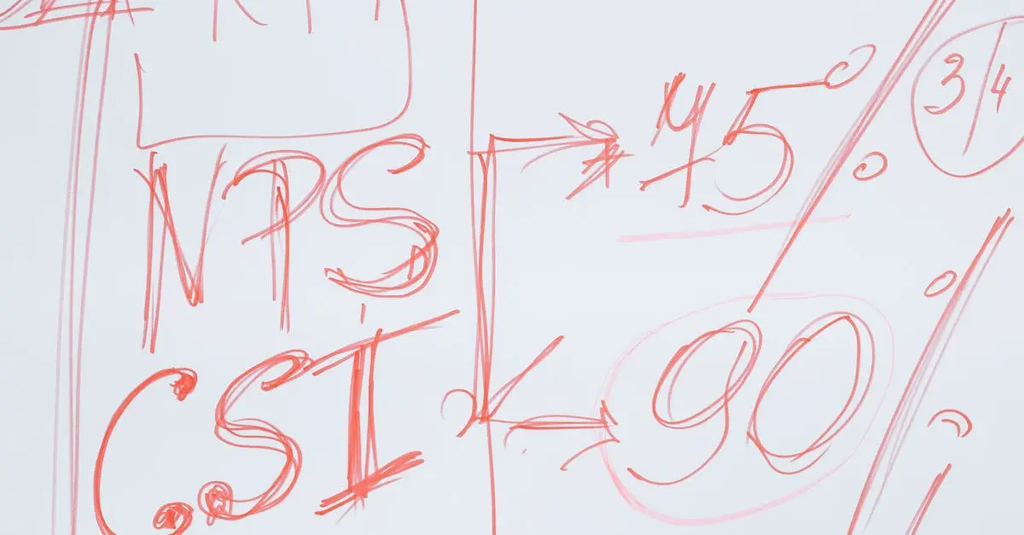

Selecting performance metrics means identifying the quantitative measures that best capture the effectiveness, efficiency, or quality of a process, system, or individual. Once the metrics are chosen, a threshold value is set for each one to define the acceptable or desired level of performance. These thresholds serve as benchmarks for evaluation: they help organizations monitor progress, identify areas for improvement, and confirm that objectives and standards are consistently met.

💡 Key Takeaways

- Identify the most relevant quantitative metrics to assess the effectiveness, efficiency, or quality of a process, system, or individual.

- Ensure metrics are measurable, reliable, and aligned with stakeholder goals and governance needs.

- Define clear thresholds that separate target, acceptable, and escalation-level performance, so that each level maps to a defined action.

- Establish ongoing governance practices including monitoring, validation, documentation, and periodic review of metrics and thresholds.
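
The idea of thresholds that separate target, acceptable, and escalation-level performance can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `ThresholdBands` class, the band names, and the accuracy values 0.95 and 0.90 are all hypothetical, and the sketch assumes a metric where higher is better.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdBands:
    """Hypothetical three-band threshold for a higher-is-better metric."""

    target: float      # at or above this value the metric meets its target
    acceptable: float  # at or above this value (but below target) it is acceptable

    def classify(self, value: float) -> str:
        """Map an observed metric value to one of three bands."""
        if value >= self.target:
            return "target"
        if value >= self.acceptable:
            return "acceptable"
        return "escalate"  # below the acceptable floor: needs governance action


# Illustrative values only; real thresholds come from objectives and risk tolerance.
accuracy_bands = ThresholdBands(target=0.95, acceptable=0.90)

for observed in (0.97, 0.92, 0.85):
    print(observed, accuracy_bands.classify(observed))
```

Keeping the bands in one small object makes the thresholds easy to document and review alongside the metric they belong to.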

❓ Frequently Asked Questions

What are performance metrics in AI governance and control?

Performance metrics are quantitative measures of how well an AI system, process, or model achieves its goals in terms of effectiveness, efficiency, and quality. They are used to monitor both performance and compliance.

How do you select the most relevant metrics for an AI system?

Start with objectives and risk, involve stakeholders, ensure data is available and reliable, and choose metrics that reflect real impact and can be acted upon.

What is a threshold and how is it used in monitoring AI performance?

A threshold is a defined value that separates acceptable from unacceptable performance. Crossing it triggers alerts or governance actions to address issues.
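
As a rough sketch of how crossing a threshold might trigger an alert, the snippet below scans a series of metric readings and reports the positions where the metric moves from acceptable to unacceptable. The function name `find_breaches`, the weekly accuracy figures, and the 0.90 threshold are assumptions made for illustration; the sketch also assumes a higher-is-better metric.

```python
def find_breaches(values, threshold):
    """Return the indices where the metric crosses below the threshold.

    A breach is reported only on the transition from acceptable to
    unacceptable, not on every consecutive bad reading.
    """
    breaches = []
    was_ok = True  # assume the metric was acceptable before the first reading
    for index, value in enumerate(values):
        is_ok = value >= threshold
        if was_ok and not is_ok:
            breaches.append(index)
        was_ok = is_ok
    return breaches


# Hypothetical weekly accuracy readings for a deployed model.
weekly_accuracy = [0.93, 0.91, 0.88, 0.87, 0.92, 0.89]
breach_weeks = find_breaches(weekly_accuracy, threshold=0.90)
print(breach_weeks)  # [2, 5]
```

Reporting only the transition keeps a sustained dip from generating one alert per reading; whether that is the right behavior depends on the governance process in place.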

How should thresholds be established and updated over time?

Set thresholds based on historical data, target performance, and risk tolerance; review and adjust for data drift, model updates, and regulatory changes.
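
One possible way to turn historical data and risk tolerance into a starting threshold is to place it a chosen number of standard deviations below the historical mean. This is a common heuristic rather than a method stated here, and the function `propose_threshold`, its `risk_tolerance` and `floor` parameters, and the sample history are all hypothetical.

```python
import statistics


def propose_threshold(history, risk_tolerance=2.0, floor=None):
    """Propose a lower threshold for a higher-is-better metric.

    history:        past metric values (at least two readings)
    risk_tolerance: number of standard deviations below the mean that is
                    still tolerated; smaller values give a stricter threshold
    floor:          optional hard minimum, e.g. a target or regulatory limit
    """
    mean = statistics.mean(history)
    spread = statistics.stdev(history)  # sample standard deviation
    proposed = mean - risk_tolerance * spread
    if floor is not None:
        proposed = max(proposed, floor)  # never go below the hard minimum
    return proposed


# Hypothetical accuracy history from earlier evaluation runs.
history = [0.90, 0.92, 0.94]
print(round(propose_threshold(history), 4))
print(round(propose_threshold(history, floor=0.90), 4))
```

Re-running the calculation on fresh data after a model update or a suspected data drift gives a candidate value to compare against the current threshold during periodic review.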