Prompt safety, guardrails, and policy alignment

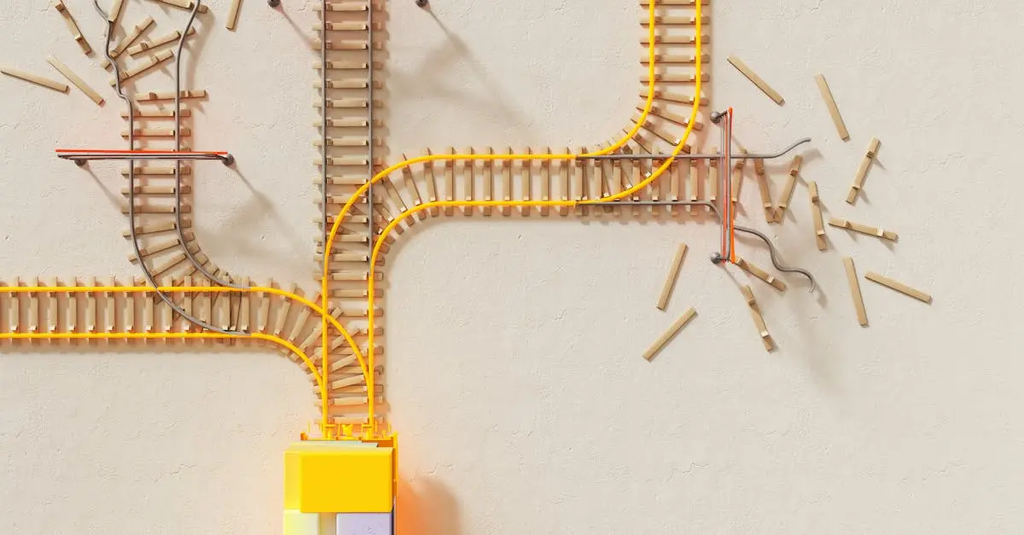

Prompt safety, guardrails, and policy alignment refer to measures ensuring that AI-generated outputs are appropriate, secure, and consistent with organizational or societal guidelines. Prompt safety involves designing prompts to minimize harmful or unintended responses. Guardrails are built-in constraints or rules that prevent undesirable behaviors or content. Policy alignment ensures the AI’s actions and outputs adhere to established ethical standards, legal requirements, and company policies, promoting responsible and trustworthy AI use.

Prompt safety, guardrails, and policy alignment

Prompt safety, guardrails, and policy alignment refer to measures ensuring that AI-generated outputs are appropriate, secure, and consistent with organizational or societal guidelines. Prompt safety involves designing prompts to minimize harmful or unintended responses. Guardrails are built-in constraints or rules that prevent undesirable behaviors or content. Policy alignment ensures the AI’s actions and outputs adhere to established ethical standards, legal requirements, and company policies, promoting responsible and trustworthy AI use.

💡 Key Takeaways

- Define prompt safety and why it's essential to limit harmful or biased AI outputs.

- Identify guardrails (policy constraints, content filters, and monitoring) that constrain AI behavior.

- Explain policy alignment and how it ensures outputs conform to organizational, legal, and societal norms.

- Apply risk-aware prompt design to anticipate and mitigate ethical and societal risks in AI outputs.

❓ Frequently Asked Questions

What is prompt safety?

Prompt safety is designing prompts to minimize the chance of generating harmful, biased, or inappropriate outputs.

What are guardrails in AI systems?

Guardrails are built-in constraints and mechanisms that steer or block outputs to keep them safe, compliant, and aligned with policies.

What is policy alignment in AI?

Policy alignment means ensuring AI outputs conform to organizational rules, legal requirements, and societal norms.

Why is an ethical and societal risk perspective important in AI?

It helps prevent harm, protect rights, reduce bias, and maintain public trust by guiding how AI is designed and deployed.