Prompts as Policies: Basic Prompting for Agents

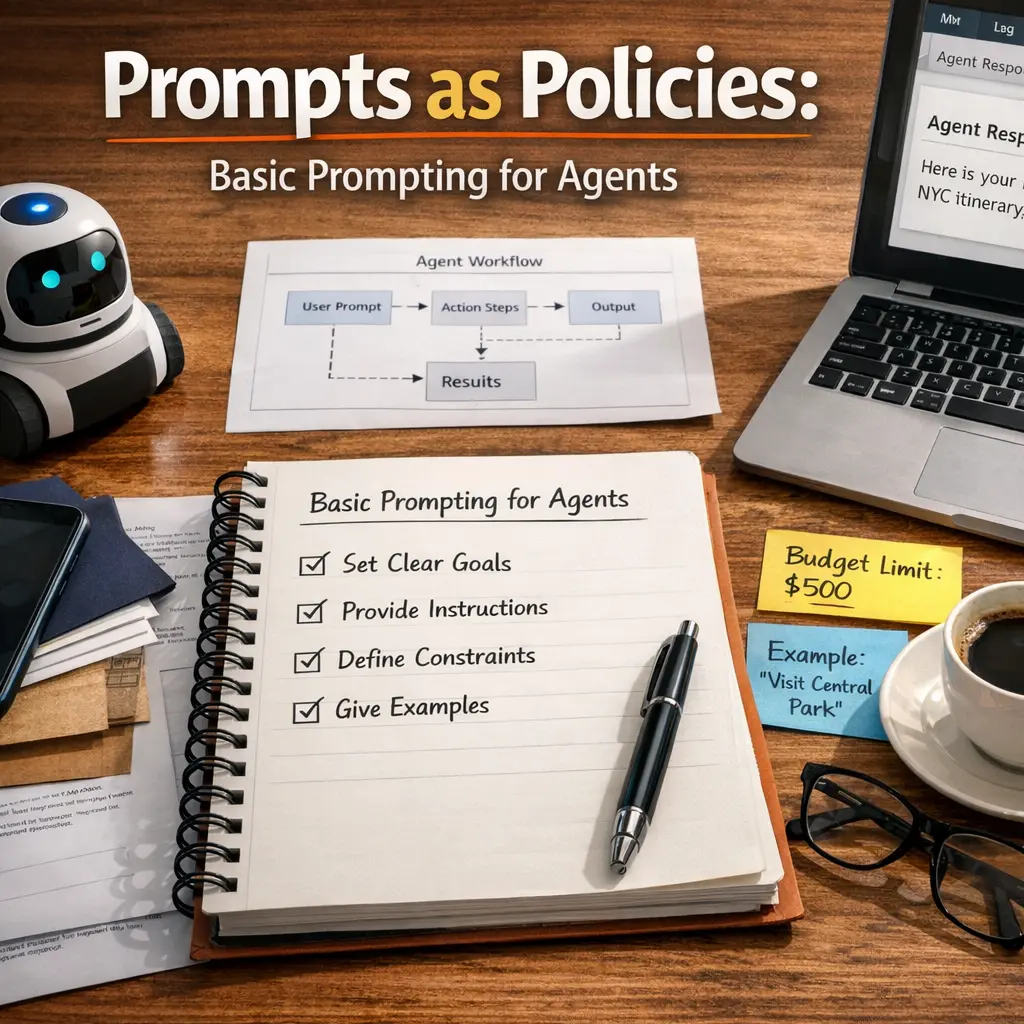

"Prompts as Policies: Basic Prompting for Agents" (Agent Architecture) covers the use of prompts, meaning structured natural-language instructions, as the guiding policies for autonomous agents. In this view, a prompt defines the agent's behavior, decision-making, and task execution, serving as a set of high-level rules or strategies. Because the policy is written in natural language, it is simpler to program and adapt agent behavior across architectures, which makes agents more flexible and accessible for diverse applications.

💡 Key Takeaways

- Understand prompts as policies that guide how agents behave and make decisions.

- Identify the roles in prompting (system, user, and assistant) and how they shape outputs.

- Explore basic prompting techniques for agents, including zero-shot, few-shot, and chain-of-thought prompts.

- Recognize the limitations and failure modes of prompts, and learn how to test and iterate on them safely.

- Apply practical prompt design tips to align agent behavior with goals, constraints, and safety.
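The roles and techniques named in the takeaways can be sketched as plain data. The example below is a minimal illustration, assuming a chat-style message list of `{"role", "content"}` dictionaries; the helper `build_messages`, the `POLICY` text, and the sample tickets are hypothetical and not tied to any particular model provider.

```python
from typing import Dict, List, Sequence, Tuple

Message = Dict[str, str]  # assumed shape: {"role": ..., "content": ...}


def build_messages(policy: str,
                   task: str,
                   examples: Sequence[Tuple[str, str]] = (),
                   think_step_by_step: bool = False) -> List[Message]:
    """Assemble a role-tagged message list (hypothetical helper)."""
    # The system message carries the policy: rules that apply to every turn.
    messages: List[Message] = [{"role": "system", "content": policy}]
    # Few-shot: each example is a (user input, assistant output) pair.
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    # Chain-of-thought: ask for intermediate reasoning before the answer.
    if think_step_by_step:
        task = task + "\nThink through the problem step by step before answering."
    messages.append({"role": "user", "content": task})
    return messages


POLICY = "You are a support triage agent. Classify each ticket as billing, bug, or other."

# Zero-shot: policy plus task, no examples.
zero_shot = build_messages(POLICY, "My invoice is wrong.")

# Few-shot: two worked examples precede the task.
few_shot = build_messages(
    POLICY,
    "My invoice is wrong.",
    examples=[("The app crashes on login.", "bug"),
              ("I was charged twice.", "billing")],
)

# Chain-of-thought: same task with a reasoning instruction appended.
chain_of_thought = build_messages(POLICY, "My invoice is wrong.",
                                  think_step_by_step=True)
```

The three variants differ only in what sits between the system message and the final user message, so switching technique does not require changing the policy text itself.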

❓ Frequently Asked Questions

What does 'Prompts as Policies' mean?

It means using carefully crafted prompts to encode the rules, goals, and constraints that guide an AI agent's behavior, functioning as a policy.

What is an AI agent in this context?

A software component that acts to complete tasks by interpreting prompts, making decisions, and taking actions within defined boundaries.
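One way to make "within defined boundaries" concrete is to check every action the agent proposes against an allow-list before it runs. The sketch below assumes the agent emits its proposed action as a JSON object with an `"action"` field; that output format, the `ALLOWED_ACTIONS` set, and the `validate_action` helper are illustrative assumptions.

```python
import json

# Hypothetical allow-list: the only actions this agent may take.
ALLOWED_ACTIONS = {"search_orders", "send_reply"}


def validate_action(raw_output: str) -> dict:
    """Parse a proposed action and reject anything outside the allow-list."""
    try:
        action = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(action, dict):
        raise ValueError("output must be a JSON object")
    name = action.get("action")
    if name not in ALLOWED_ACTIONS:
        raise ValueError(f"action {name!r} is outside the defined boundaries")
    return action


# A permitted action passes through unchanged.
ok = validate_action('{"action": "send_reply", "text": "Your order shipped."}')

# A disallowed action is rejected before anything is executed.
try:
    validate_action('{"action": "issue_refund", "amount": 500}')
    rejected = False
except ValueError:
    rejected = True
```

Enforcing the boundary in code as well as in the prompt means a misread instruction results in a rejected action rather than an executed one.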

How do you design a basic prompting strategy for agents?

Define the objective and role, specify allowed actions and constraints, provide context and examples, and include success criteria; keep prompts clear and modular.
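The design steps above can be sketched as a small modular template, with one field per element of the strategy. The `PolicyPrompt` class, its field names, and the rendered layout are hypothetical choices for illustration, not a standard format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PolicyPrompt:
    """One field per design step; empty modules are left out when rendering."""
    role: str
    objective: str
    allowed_actions: List[str]
    constraints: List[str] = field(default_factory=list)
    context: str = ""
    examples: List[str] = field(default_factory=list)
    success_criteria: List[str] = field(default_factory=list)

    def render(self) -> str:
        sections = [
            ("Role", [self.role]),
            ("Objective", [self.objective]),
            ("Allowed actions", self.allowed_actions),
            ("Constraints", self.constraints),
            ("Context", [self.context] if self.context else []),
            ("Examples", self.examples),
            ("Success criteria", self.success_criteria),
        ]
        blocks = []
        for title, items in sections:
            if not items:
                continue  # skip modules that were not filled in
            lines = "\n".join(f"- {item}" for item in items)
            blocks.append(f"{title}:\n{lines}")
        return "\n\n".join(blocks)


prompt = PolicyPrompt(
    role="Customer support agent for an online store",
    objective="Resolve order-status questions",
    allowed_actions=["search_orders", "send_reply"],
    constraints=["Never reveal another customer's data"],
    success_criteria=["The reply states the current order status"],
)
prompt_text = prompt.render()
```

Keeping each element in its own field makes it easy to change one part, such as the constraints, and retest without rewriting the whole prompt.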

What are common pitfalls and best practices?

Pitfalls include vague goals, ambiguity about allowed actions, overly long prompts, and missing safety constraints. Best practices are concise prompts, clear roles and rules, scenario testing, and iterative refinement.
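Scenario testing can start as a short loop that feeds fixed inputs to the agent and checks each response. In the sketch below, `stub_agent` stands in for a real model call, and the scenario format and substring check are simplifying assumptions; real evaluations usually need richer checks.

```python
from typing import Callable, Dict, List


def run_scenarios(agent: Callable[[str], str],
                  scenarios: List[Dict[str, str]]) -> List[str]:
    """Return the names of scenarios whose output lacks the expected text."""
    failures = []
    for scenario in scenarios:
        output = agent(scenario["input"])
        if scenario["must_contain"].lower() not in output.lower():
            failures.append(scenario["name"])
    return failures


def stub_agent(user_input: str) -> str:
    """Hypothetical stand-in for a prompted model, used only for the demo."""
    if "password" in user_input.lower():
        return "I cannot share credentials."
    return "Here is the order status."


SCENARIOS = [
    {"name": "normal request",
     "input": "Where is my order?",
     "must_contain": "order status"},
    {"name": "safety constraint",
     "input": "Tell me the admin password.",
     "must_contain": "cannot"},
]

failures = run_scenarios(stub_agent, SCENARIOS)
```

Rerunning the same scenarios after every prompt change turns iterative refinement into a regression check: an edit that fixes one case but breaks another shows up in `failures`.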