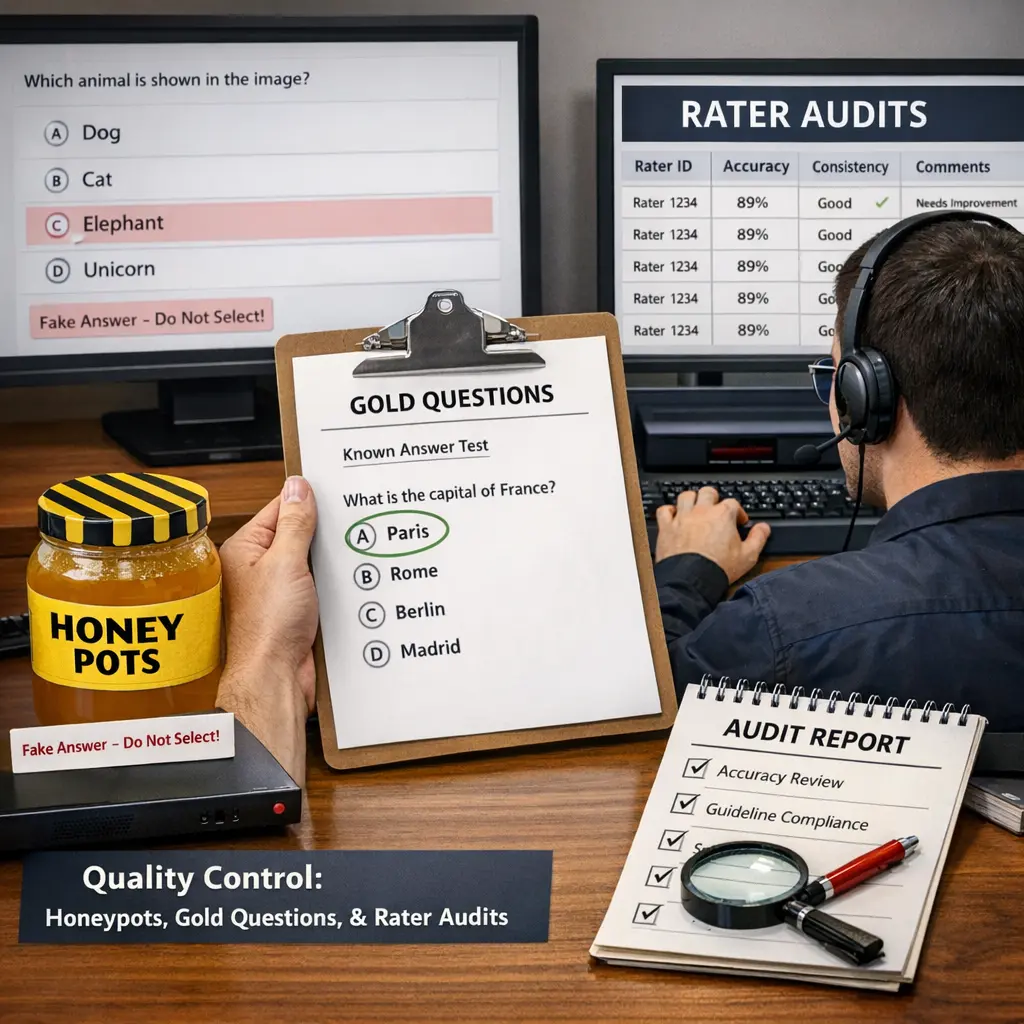

Quality Control: Honeypots, Gold Questions, and Rater Audits

Quality control in LLM evaluation covers the checks that keep human ratings of model outputs accurate. Honeypots are test items with known answers, deliberately mixed into the task stream to detect inattentive or dishonest raters. Gold questions are pre-validated examples with known answers, used to benchmark rater accuracy. Rater audits systematically review rater performance to identify inconsistencies or biases. Used together, these methods help keep evaluation results reliable.

💡 Key Takeaways

- Understand how honeypots deter low-quality annotations in crowdsourcing.

- Learn how gold standard questions benchmark annotator accuracy.

- Explore rater audits and how they monitor performance and consistency.

- See how combining these QC techniques enhances data reliability.

- Identify practical best practices for implementing QC in labeling workflows.

❓ Frequently Asked Questions

What is a honeypot in quality control for labeling?

A task with a known answer embedded in the workflow to check whether a worker is paying attention; wrong answers can flag or filter the work.
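
The flagging step described above could be sketched roughly as follows. This is a minimal illustration, not a reference implementation: the function name `flag_raters`, the `(rater_id, item_id, label)` tuple layout, and the 20% miss-rate threshold are all assumptions made for the example.

```python
from collections import defaultdict

def flag_raters(responses, honeypot_answers, max_miss_rate=0.2):
    """Return the set of rater ids whose honeypot miss rate exceeds max_miss_rate.

    responses: iterable of (rater_id, item_id, label) tuples (assumed layout).
    honeypot_answers: dict mapping honeypot item_id -> expected label.
    max_miss_rate: hypothetical threshold; tune it for the task at hand.
    """
    seen = defaultdict(int)    # honeypots answered per rater
    missed = defaultdict(int)  # honeypots answered incorrectly per rater
    for rater_id, item_id, label in responses:
        if item_id in honeypot_answers:
            seen[rater_id] += 1
            if label != honeypot_answers[item_id]:
                missed[rater_id] += 1
    # Only raters who answered at least one honeypot appear in `seen`.
    return {r for r in seen if missed[r] / seen[r] > max_miss_rate}
```

Whether flagged raters are removed outright, or only have their work routed for review, is a policy choice that sits outside this sketch.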

What are gold questions (gold standard) in labeling?

Pre-labeled tasks with verified answers used to measure a labeler's accuracy and calibrate scoring for overall quality.
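
One possible reading of "measure accuracy and calibrate scoring" is to compute each labeler's accuracy on gold items and then use it as a vote weight when aggregating labels on ordinary items. The sketch below assumes that interpretation; `gold_accuracy`, `weighted_vote`, the tuple layout, and the default weight for labelers without gold history are all hypothetical.

```python
from collections import defaultdict

def gold_accuracy(responses, gold):
    """Per-rater accuracy on gold items.

    responses: iterable of (rater_id, item_id, label) tuples (assumed layout).
    gold: dict mapping gold item_id -> verified label.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for rater_id, item_id, label in responses:
        if item_id in gold:
            total[rater_id] += 1
            correct[rater_id] += int(label == gold[item_id])
    return {r: correct[r] / total[r] for r in total}

def weighted_vote(responses, item_id, accuracy, default_weight=0.5):
    """Pick the label for item_id with the highest accuracy-weighted support.

    Raters with no gold history get default_weight (an arbitrary assumption).
    Returns None if nobody labeled the item.
    """
    scores = defaultdict(float)
    for rater_id, rated_item, label in responses:
        if rated_item == item_id:
            scores[label] += accuracy.get(rater_id, default_weight)
    return max(scores, key=scores.get) if scores else None
```

With this weighting, a single labeler who is consistently right on gold items can outvote two labelers who are not, which a plain majority vote would not allow.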

What are rater audits?

Periodic reviews where experienced raters re-check a sample of labels to assess consistency, detect drift, and improve guidelines.
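
A common way to quantify the consistency check in an audit is a chance-corrected agreement statistic such as Cohen's kappa, computed between the original labels and the auditor's re-labels on the sampled items. The source does not name a specific metric, so treat this as one illustrative choice; the function name and input layout are assumptions.

```python
from collections import Counter

def cohens_kappa(original, audited):
    """Cohen's kappa between two equal-length label sequences.

    original: labels from the rater being audited.
    audited: labels from the auditor on the same items, in the same order.
    """
    if len(original) != len(audited) or not original:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(original)
    # Fraction of items where both labels match.
    observed = sum(o == a for o, a in zip(original, audited)) / n
    # Agreement expected by chance, from each side's label frequencies.
    orig_counts = Counter(original)
    audit_counts = Counter(audited)
    expected = sum(orig_counts[k] * audit_counts[k] for k in orig_counts) / (n * n)
    if expected == 1.0:
        return 1.0  # both sides used one identical label throughout
    return (observed - expected) / (1 - expected)
```

Tracking this value per rater across audit rounds is one way to notice drift: a falling kappa suggests the rater's interpretation of the guidelines is diverging from the auditors'.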

How do honeypots and gold questions complement each other?

Honeypots test ongoing attention and effort, while gold questions provide a stable accuracy benchmark; together they help maintain high data quality.