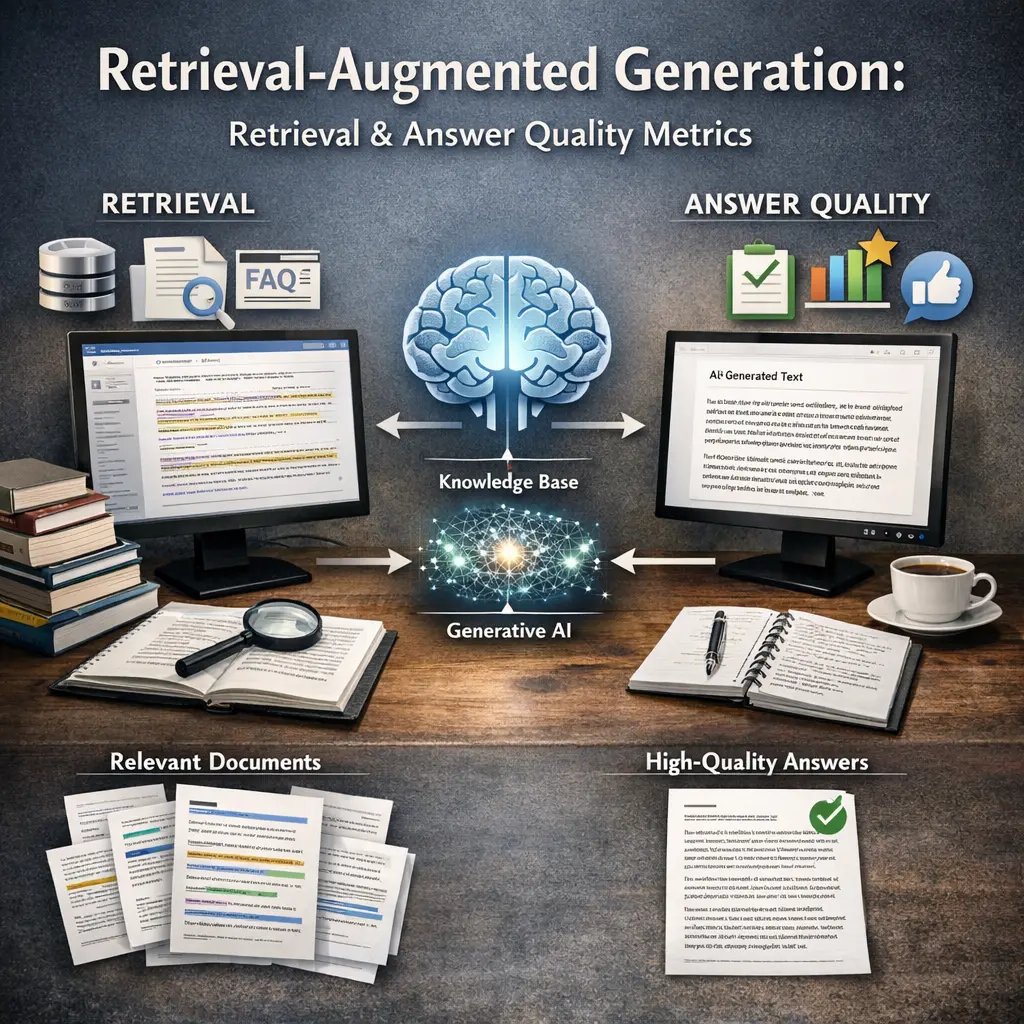

Retrieval-Augmented Generation: Retrieval and Answer Quality Metrics

Retrieval-Augmented Generation (RAG) combines retrieval of relevant documents with generative language models to produce informed, accurate responses. Evaluating RAG systems involves measuring retrieval quality—how well relevant information is sourced—and answer quality—how accurately and coherently the model responds using retrieved content. LLM evaluations (evals) for RAG assess both components, using metrics like precision, recall, factual correctness, and relevance to ensure the system retrieves useful data and generates high-quality answers.

Retrieval-Augmented Generation: Retrieval and Answer Quality Metrics

Retrieval-Augmented Generation (RAG) combines retrieval of relevant documents with generative language models to produce informed, accurate responses. Evaluating RAG systems involves measuring retrieval quality—how well relevant information is sourced—and answer quality—how accurately and coherently the model responds using retrieved content. LLM evaluations (evals) for RAG assess both components, using metrics like precision, recall, factual correctness, and relevance to ensure the system retrieves useful data and generates high-quality answers.

💡 Key Takeaways

- Understand Retrieval-Augmented Generation and how retrieval improves answers compared with generation alone.

- Recognize how retrieval quality (relevance and coverage) impacts end-to-end answer quality in a RAG system.

- Learn key evaluation metrics for RAG, including retrieval metrics like precision@k/recall@k/MRR and answer metrics like exact match and ROUGE/BLEU.

- Explore retrieval approaches (BM25 vs dense-vector retrievers) and practical tips to choose and tune them for better answer quality.

❓ Frequently Asked Questions

What is Retrieval-Augmented Generation (RAG)?

RAG combines a retriever with a generator: it fetches relevant documents from a knowledge store and uses that content to produce more accurate, less hallucinated answers.

How is retrieval quality evaluated in RAG?

Retrieval quality is typically measured with metrics like recall@k (whether a relevant document is in the top-k results), precision@k, and mean reciprocal rank (MRR).

How is answer quality evaluated in RAG?

Answer quality uses metrics such as exact match (EM), F1 score, and text-similarity metrics like ROUGE, BLEU, or semantic metrics like BERTScore.

Why is both retrieval and generation important in RAG?

Good retrieval provides useful evidence for the generator; together they reduce hallucinations and improve accuracy. Weak retrieval or generation can lead to errors in the final answer.