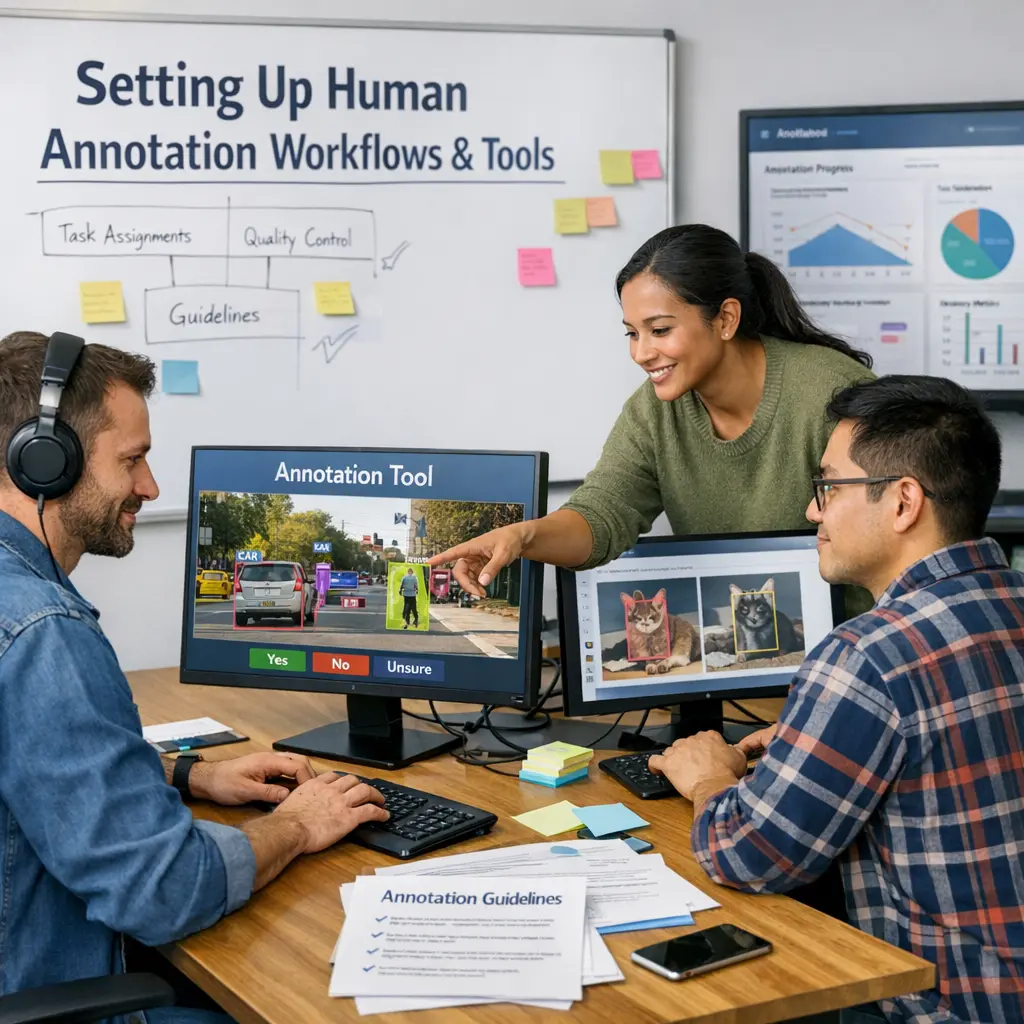

Setting Up Human Annotation Workflows and Tools

Setting up human annotation workflows and tools for LLM evaluations means designing structured processes in which human annotators assess and label the outputs of large language models. This includes writing annotation guidelines, training annotators, and using specialized software platforms to collect, organize, and monitor annotations. Effective workflows provide the consistency and quality control needed to produce reliable data for evaluating model performance, identifying biases, and informing further model improvements.

💡 Key Takeaways

- Understand essential components of a human annotation workflow: task design, labeling, quality assurance, and delivery.

- Learn how to choose and configure annotation tools for different data types (text, image, audio, video) and team sizes.

- Implement quality assurance practices: clear guidelines, calibration tasks, and inter-annotator agreement to improve consistency.

- Establish data governance for privacy, security, versioning, and audit trails of labeled data.

❓ Frequently Asked Questions

What is human annotation in machine learning?

Human annotation is the labeling of data (images, text, audio, etc.) by people to create ground-truth examples used to train and evaluate ML models.

What are the essential steps to set up a human annotation workflow?

Define the labeling schema and tasks, choose appropriate tools, recruit and train annotators, run pilots, implement quality assurance, and monitor performance for iteration.
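
As a rough illustration of the first step, a labeling schema can be written down as a small data structure with a validation check, so that malformed records are caught before they reach quality assurance. The sketch below is only one possible shape: the label names, the `Annotation` fields, and the `validate` helper are all hypothetical and would be replaced by whatever your own task requires.

```python
from dataclasses import dataclass

# Hypothetical label set for rating LLM outputs; replace with your own schema.
ALLOWED_LABELS = {"helpful", "partially_helpful", "unhelpful"}


@dataclass(frozen=True)
class Annotation:
    """One annotator's judgment on one model output (illustrative fields)."""
    item_id: str
    annotator_id: str
    label: str
    comment: str = ""


def validate(annotation: Annotation) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    if annotation.label not in ALLOWED_LABELS:
        problems.append(f"unknown label: {annotation.label!r}")
    if not annotation.item_id:
        problems.append("missing item_id")
    if not annotation.annotator_id:
        problems.append("missing annotator_id")
    return problems


if __name__ == "__main__":
    record = Annotation(item_id="resp-001", annotator_id="ann-07", label="helpfull")
    print(validate(record))  # the misspelled label is reported as unknown
```

Running a check like this during the pilot phase tends to surface schema ambiguities (for example, labels annotators want but the schema lacks) before the full labeling effort begins.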

What kinds of tools support human annotation workflows?

Cloud-based labeling platforms and annotation interfaces, quality-control tooling, and task-management systems that integrate with your data storage and pipelines.

How can you ensure high quality and consistency in annotations?

Provide clear guidelines and training, use calibration tasks, measure inter-annotator agreement, perform spot checks, and continuously refine the process.
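
To make "measure inter-annotator agreement" concrete, here is a minimal sketch of Cohen's kappa, one common agreement statistic for two annotators labeling the same items. The source does not name a specific metric, so the choice of kappa is an assumption, as are the function name `cohen_kappa` and the example labels.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")

    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label throughout; kappa is
        # undefined here, and this sketch treats it as perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    annotator_1 = ["good", "good", "bad", "bad"]
    annotator_2 = ["good", "bad", "bad", "bad"]
    print(cohen_kappa(annotator_1, annotator_2))  # 0.5
```

A value near 1 indicates strong agreement beyond chance, while a value near 0 means the annotators agree about as often as chance would predict, which usually points to unclear guidelines or a need for further calibration. For more than two annotators, a different statistic such as Fleiss' kappa or Krippendorff's alpha would be needed.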