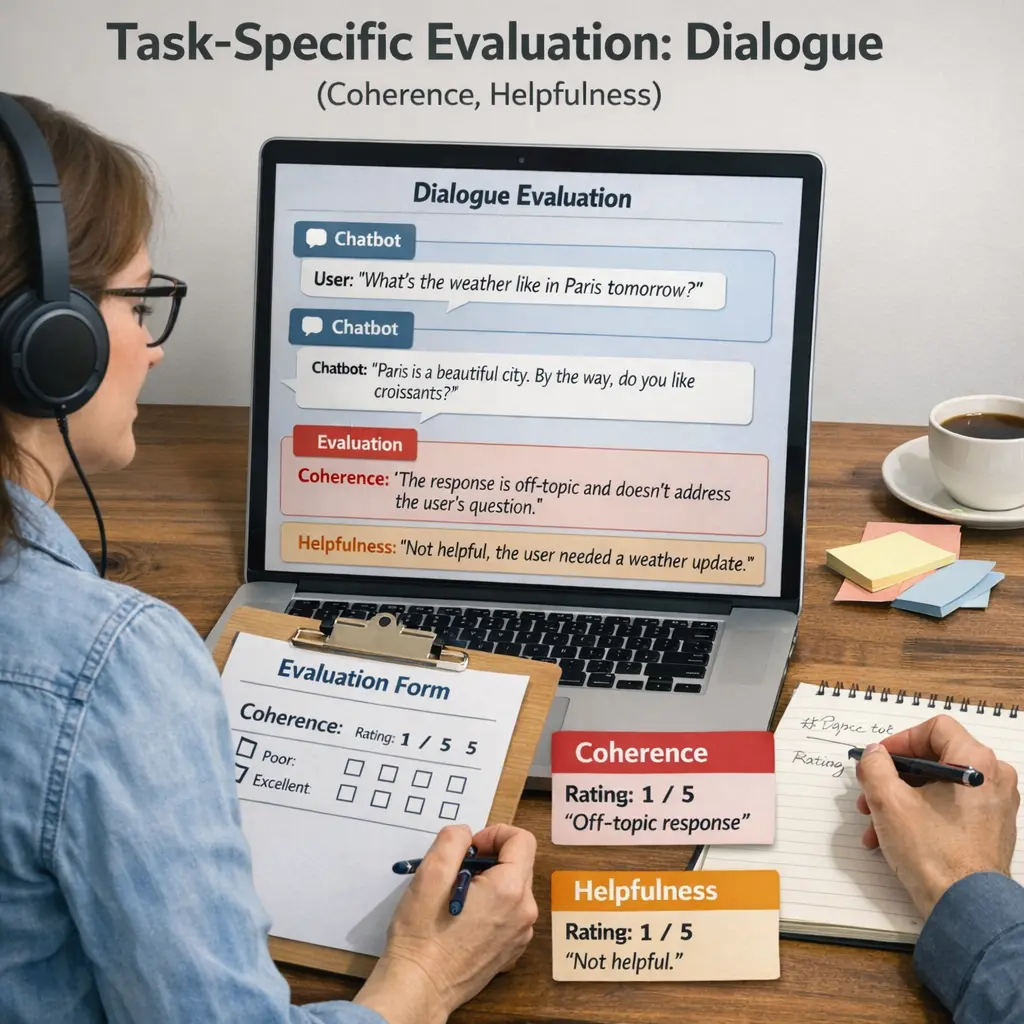

Task-specific Evaluation: Dialogue (coherence, helpfulness)

Task-specific Evaluation: Dialogue (coherence, helpfulness) in LLM Evaluations refers to assessing how well a language model maintains logical and consistent conversations (coherence) and provides useful, relevant responses (helpfulness) during dialogue. This evaluation helps determine the model’s effectiveness in real-world conversational scenarios by focusing on the quality and utility of its interactions, ensuring that responses are not only contextually appropriate but also valuable to users.

Task-specific Evaluation: Dialogue (coherence, helpfulness)

Task-specific Evaluation: Dialogue (coherence, helpfulness) in LLM Evaluations refers to assessing how well a language model maintains logical and consistent conversations (coherence) and provides useful, relevant responses (helpfulness) during dialogue. This evaluation helps determine the model’s effectiveness in real-world conversational scenarios by focusing on the quality and utility of its interactions, ensuring that responses are not only contextually appropriate but also valuable to users.

💡 Key Takeaways

- Define coherence in task-specific dialogue and how to spot it.

- Recognize helpfulness indicators like relevance, clarity, and actionable guidance.

- Assess dialogue quality across turns (context retention, continuity, user intent alignment).

- Understand how coherence and helpfulness together influence task success.

❓ Frequently Asked Questions

What does task-specific evaluation mean in dialogue?

It means judging dialogue outputs against the goals of the task, focusing on coherence and usefulness to the user rather than just general language quality.

How is coherence defined in this context?

Coherence means the response is logically connected to the prior conversation, stays on topic, and avoids contradictions or irrelevant details.

How is helpfulness defined in dialogue evaluation?

Helpfulness measures how useful and actionable the response is: it should be accurate, clear, directly address the question, and provide practical guidance when appropriate.

How are coherence and helpfulness typically measured?

Using a rubric or scoring system (e.g., 1–5) that rates relevance, logical flow, factual accuracy, completeness, and user satisfaction; often includes multiple evaluators to ensure reliability.