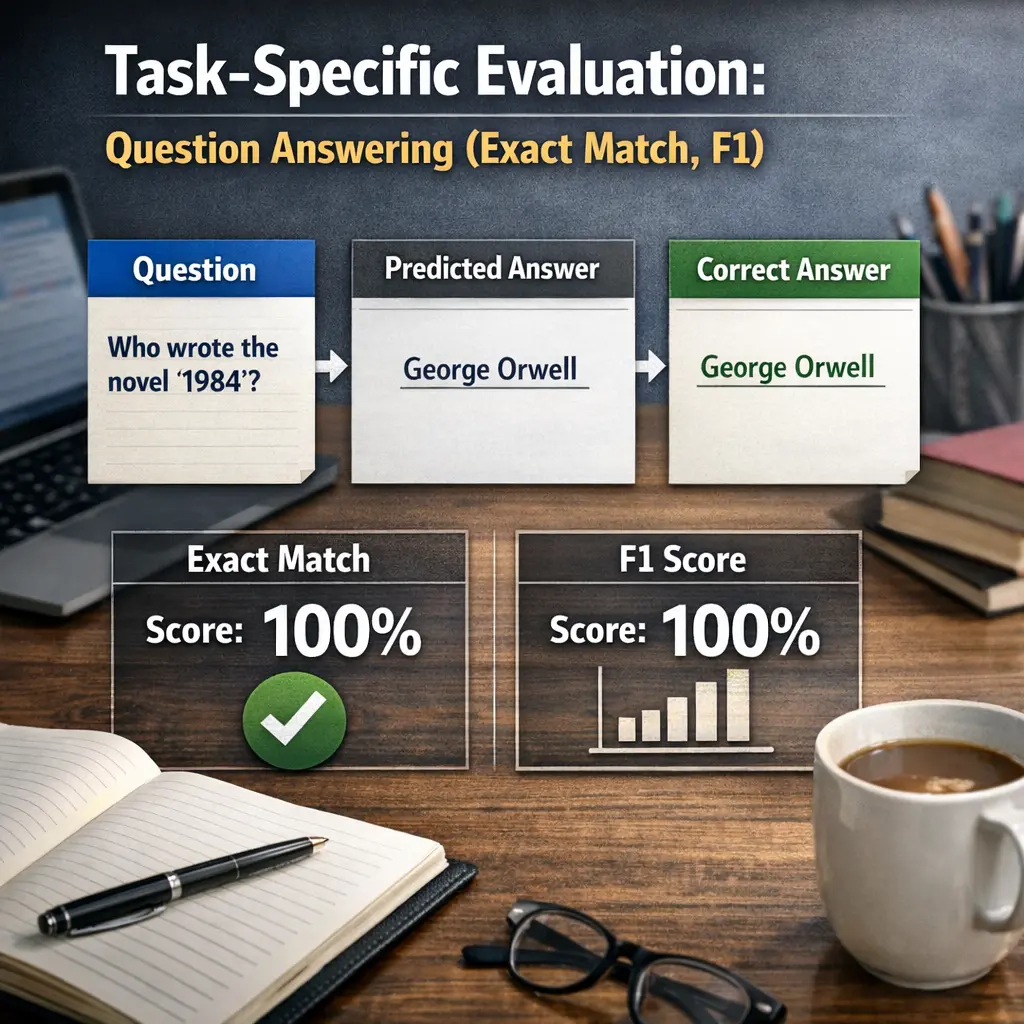

Task-specific Evaluation: Question Answering (exact match, F1)

Task-specific evaluation in question answering assesses how accurately a language model responds to questions. The exact match metric checks if the model’s answer matches the correct answer word-for-word. The F1 score measures the overlap between the predicted and correct answers, balancing precision and recall. These metrics help determine a model’s effectiveness in providing precise and relevant answers, ensuring its reliability for question answering tasks in LLM evaluations.

Task-specific Evaluation: Question Answering (exact match, F1)

Task-specific evaluation in question answering assesses how accurately a language model responds to questions. The exact match metric checks if the model’s answer matches the correct answer word-for-word. The F1 score measures the overlap between the predicted and correct answers, balancing precision and recall. These metrics help determine a model’s effectiveness in providing precise and relevant answers, ensuring its reliability for question answering tasks in LLM evaluations.

💡 Key Takeaways

- Understand exact match (EM): the predicted answer must exactly match the ground-truth after normalization.

- Learn F1: token-level overlap between prediction and ground truth, balancing precision and recall.

- Compare EM and F1: EM is strict while F1 rewards partial correctness; know when to rely on each metric.

- Know how to prepare and normalize answers for scoring (case, punctuation, articles, whitespace) and handle multiple ground-truth answers.

- Use EM for strict correctness and F1 for partial-credit assessment to guide model improvements and benchmarking.

❓ Frequently Asked Questions

What is task-specific evaluation in Question Answering?

It measures how well a QA system answers questions by comparing predictions to ground-truth answers, using metrics like Exact Match (EM) and F1.

What is Exact Match (EM) in QA evaluation?

EM is the percentage of predictions that exactly match the reference answer after normalization (e.g., lowercasing and removing punctuation).

What is the F1 score in QA evaluation?

F1 is the token-level harmonic mean of precision and recall between the predicted answer and the reference answer(s), rewarding partial overlaps.

How do EM and F1 differ in practice?

EM is binary (correct or incorrect for a question), while F1 reflects partial correctness and can be non-zero even when EM is zero.

How can I improve QA performance under EM and F1 metrics?

Normalize text, use multiple gold answers, improve tokenization, handle synonyms/paraphrase, and apply post-processing to align predictions with expected surface forms.