Temporal Abstraction & Options

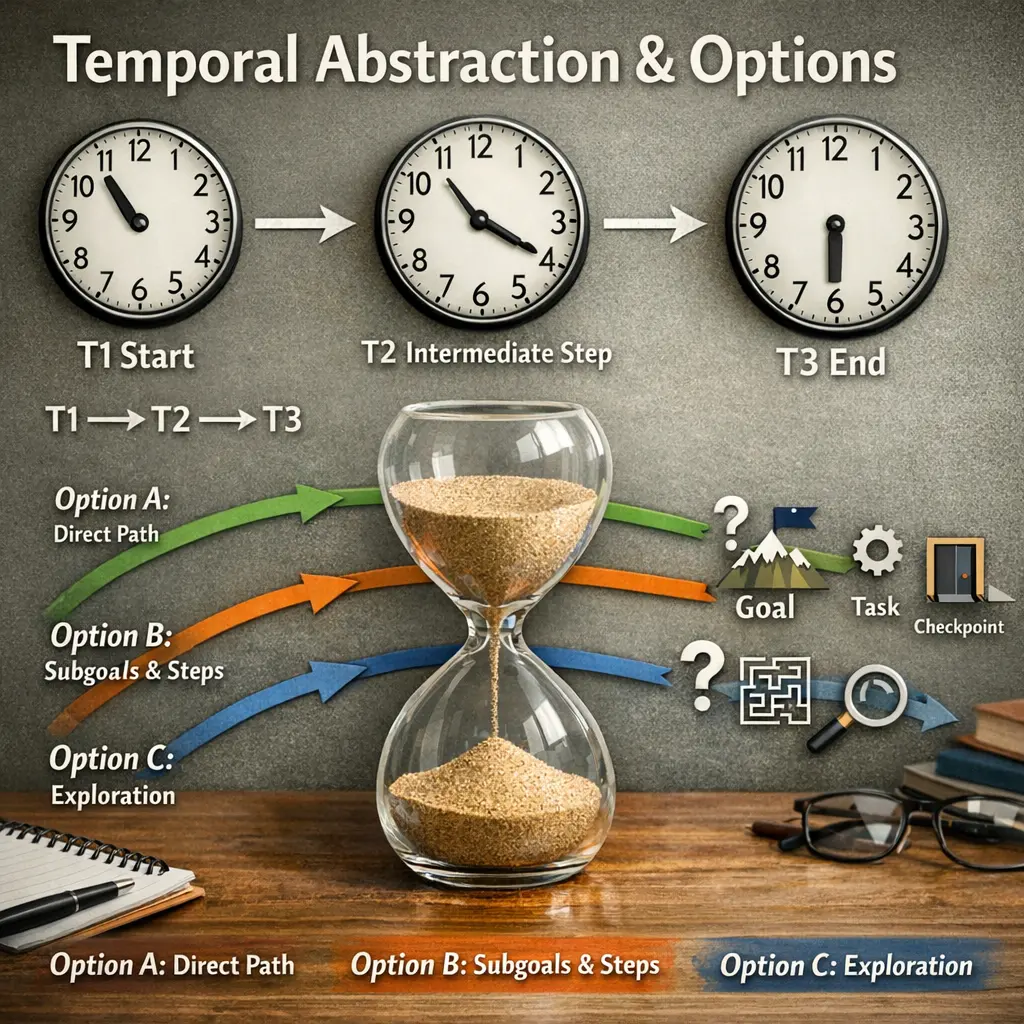

In agent architecture, temporal abstraction refers to methods that let an agent reason and plan over extended time periods by grouping sequences of primitive actions into higher-level, temporally extended actions called "options." The agent can then operate at multiple time scales, which can improve learning efficiency and decision-making. Each option encapsulates a policy for a sub-task, so agents can reuse solutions and structure complex behaviors hierarchically within reinforcement learning frameworks.

💡 Key Takeaways

- Define temporal abstraction and explain how options operate as higher-level actions spanning multiple time steps.

- Identify the core components of the Options framework: option policies, initiation sets, and termination conditions.

- Explain how temporal abstraction can improve learning efficiency and planning in sequential decision problems.

- Provide intuitive examples such as robot navigation with macro-actions and planning with subgoals in games.

❓ Frequently Asked Questions

What is temporal abstraction in reinforcement learning?

Temporal abstraction groups sequences of primitive actions into higher-level choices called options, allowing the agent to plan and act over longer time horizons with fewer decisions.

What is the Options framework in RL?

The Options framework defines reusable, temporally extended actions called options, each with an initiation set, an intra-option policy, and a termination condition.

What are the main components of an option?

The initiation set specifies the states in which an option can start; the intra-option policy selects actions while the option is active; the termination condition determines when the option ends, commonly expressed as a per-state probability of stopping.
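
The three components can be sketched as a small data structure. This is a minimal illustration, not a reference implementation: the `Option` class, the `run_option` helper, and the one-dimensional corridor environment (`corridor_step`, states 0 to 5) are all hypothetical names introduced here, and the termination condition is assumed to be a per-state stopping probability.

```python
import random
from dataclasses import dataclass
from typing import Callable, Hashable, Set, Tuple

State = Hashable
Action = Hashable


@dataclass
class Option:
    """Hypothetical container for the three components of an option."""
    initiation_set: Set[State]               # states where the option may start
    policy: Callable[[State], Action]        # intra-option policy
    termination: Callable[[State], float]    # probability of stopping in a state

    def can_start(self, state: State) -> bool:
        return state in self.initiation_set


def run_option(option: Option,
               state: State,
               step: Callable[[State, Action], Tuple[State, float]],
               rng=random,
               max_steps: int = 1000) -> Tuple[State, float, int]:
    """Follow the option's policy until it terminates.

    Returns (final_state, undiscounted_reward_sum, number_of_steps).
    """
    if not option.can_start(state):
        raise ValueError(f"option cannot start in state {state!r}")
    total_reward = 0.0
    for k in range(1, max_steps + 1):
        action = option.policy(state)
        state, reward = step(state, action)
        total_reward += reward
        # Sample the termination condition in the newly reached state.
        if rng.random() < option.termination(state):
            return state, total_reward, k
    return state, total_reward, max_steps


# Assumed toy environment: a corridor with cells 0..5 and a cost of 1 per move.
def corridor_step(state: int, action: int) -> Tuple[int, float]:
    next_state = min(max(state + action, 0), 5)
    return next_state, -1.0


# Macro-action "walk right until the end of the corridor".
go_right = Option(
    initiation_set={0, 1, 2, 3, 4},
    policy=lambda s: +1,
    termination=lambda s: 1.0 if s == 5 else 0.0,
)

final_state, total_reward, steps = run_option(go_right, 2, corridor_step)
```

Starting from cell 2, the agent makes one high-level choice (`go_right`) and the option handles the three primitive moves needed to reach cell 5.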

How do options differ from primitive actions?

Options typically run for multiple steps and often encode subgoals, enabling hierarchical control, while primitive actions are single-step decisions. Because the agent makes fewer decisions per episode, using options can speed up learning and planning.
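
One common way to learn over options is a semi-Markov (SMDP) variant of Q-learning, in which a whole option execution is treated as a single decision: the rewards collected during the option are discounted step by step, and the bootstrap term is discounted by the option's duration. The sketch below assumes a tabular Q-function stored in a dictionary; the function name `smdp_q_update` and its parameters are illustrative, not taken from any particular library.

```python
from collections import defaultdict
from typing import Hashable, Iterable, List


def smdp_q_update(q: dict,
                  state: Hashable,
                  option: Hashable,
                  rewards: List[float],
                  next_state: Hashable,
                  available_options: Iterable[Hashable],
                  alpha: float = 0.1,
                  gamma: float = 0.9) -> float:
    """Apply one SMDP Q-learning update after an option has finished.

    `rewards` holds the per-step rewards observed while the option ran,
    so its length k is the option's duration.
    """
    k = len(rewards)
    # Discounted return accumulated while the option was executing.
    discounted_return = sum(gamma ** i * r for i, r in enumerate(rewards))
    # Best value among options available where the option ended.
    best_next = max((q[(next_state, o)] for o in available_options), default=0.0)
    # The bootstrap term is discounted by gamma**k because k steps elapsed.
    target = discounted_return + gamma ** k * best_next
    q[(state, option)] += alpha * (target - q[(state, option)])
    return q[(state, option)]


# Usage: a three-step option from state 2 to state 5, one value update.
q = defaultdict(float)
q[(5, "stay")] = 10.0
new_value = smdp_q_update(q, state=2, option="go_right",
                          rewards=[-1.0, -1.0, -1.0], next_state=5,
                          available_options=["stay"], alpha=0.5, gamma=0.5)
```

A primitive action is the special case `k = 1`, where this reduces to the ordinary one-step Q-learning update.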