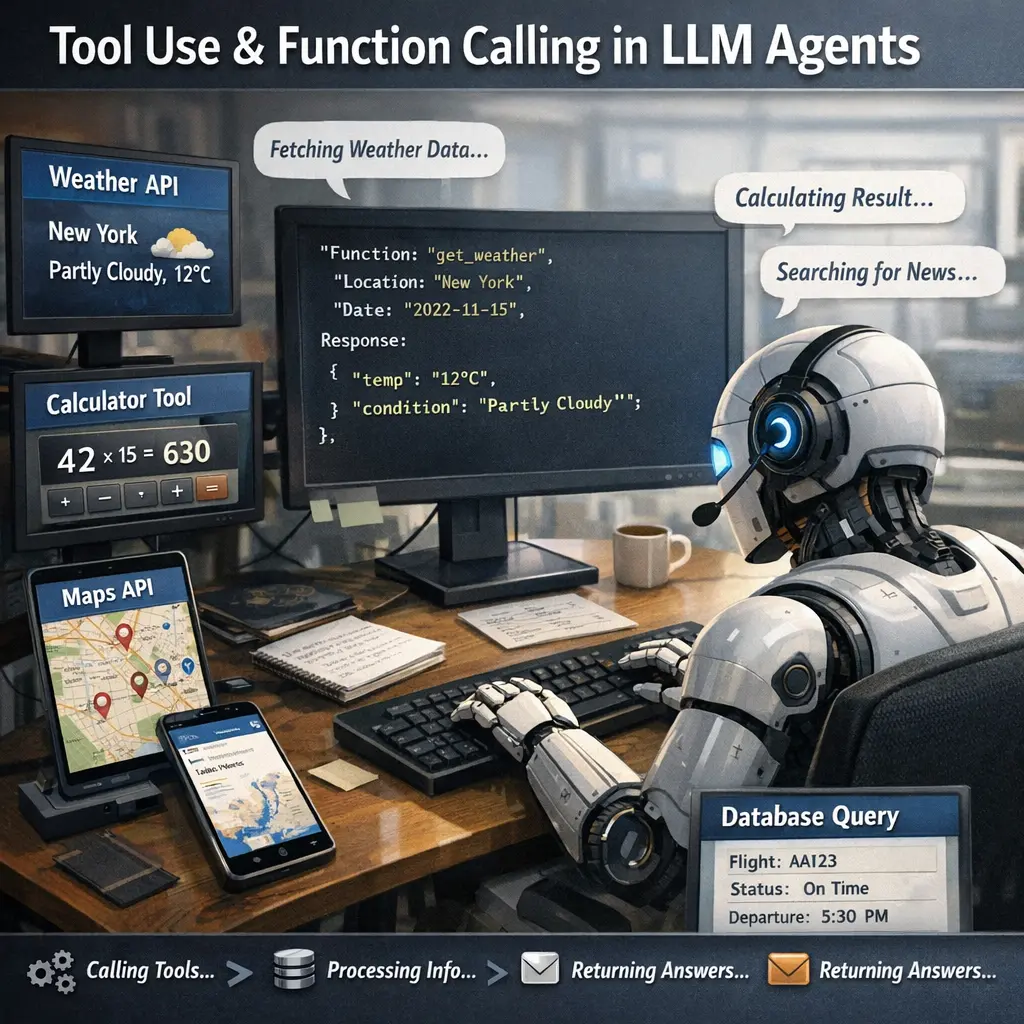

Tool Use & Function Calling in LLM Agents

Tool use and function calling in LLM agents refer to the ability of language model-based agents to interact with external tools, APIs, or functions to extend their reasoning and problem-solving capabilities. Within an agent architecture, the LLM delegates specific tasks, such as calculations, data retrieval, or web searches, to specialized functions and integrates their outputs into its responses. This approach improves accuracy and versatility and enables more complex, multi-step workflows in AI applications.

💡 Key Takeaways

- Understand how LLM agents use external tools to extend capabilities.

- Learn how function calling enables structured tool interactions.

- Identify common tools (APIs, calculators, databases) and when to use them.

- Practice prompting to trigger tool calls and parse tool results.

- Plan for tool failures with error handling and fallbacks.

❓ Frequently Asked Questions

What is tool use in LLM agents?

Tool use lets an AI agent call external tools or APIs to fetch fresh data, perform actions, or compute results beyond its built-in knowledge.

What is function calling in LLM agents?

Function calling is a formal mechanism in which the model requests a call to a predefined function by naming it and supplying structured arguments; the system executes the function and returns the result to the model.
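The round trip described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular vendor's API: the `get_weather` tool, the `FUNCTIONS` registry, the JSON request shape (`name` plus `arguments`), and the `role: "tool"` result message are all assumptions made for the example, and the model's output is a hard-coded string rather than a real model response.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical tool: returns canned data instead of calling a real API."""
    return {"city": city, "temp_c": 21}


# Registry of predefined functions the model is allowed to request (assumed name).
FUNCTIONS = {"get_weather": get_weather}


def execute_call(model_output: str) -> dict:
    """Parse a function-call request, run the function, and wrap the result."""
    request = json.loads(model_output)          # the model's structured request
    func = FUNCTIONS[request["name"]]           # look up the predefined function
    result = func(**request["arguments"])       # the system, not the model, runs it
    # The result goes back to the model as a message it can read on its next turn.
    return {"role": "tool", "name": request["name"], "content": json.dumps(result)}


# Stand-in for what a model might emit when it decides to call a function.
model_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
tool_message = execute_call(model_output)
print(tool_message)
```

The key point is the division of labor: the model only describes the call, while the surrounding system executes it and feeds the result back.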

When does an LLM agent decide to call a tool or function?

When the answer requires real-time data, access to external systems, or computations the model cannot perform on its own.

What are common parts of a tool-use setup?

A catalog of available tools, a function invocation protocol, input validation, and a method to integrate results into the final reply.
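The four parts listed above might fit together roughly as follows. This is a hedged sketch under simple assumptions: the `CATALOG` layout, the `add` tool, and the `validate`, `invoke`, and `integrate` helpers are hypothetical names chosen for illustration, and validation is a plain type check rather than a full schema validator.

```python
from typing import Any


def add(a: float, b: float) -> float:
    """Hypothetical calculator tool."""
    return a + b


# 1. Tool catalog: each entry pairs a callable with the argument types it expects.
CATALOG: dict[str, dict[str, Any]] = {
    "add": {"func": add, "params": {"a": (int, float), "b": (int, float)}},
}


def validate(name: str, args: dict[str, Any]) -> list[str]:
    """3. Input validation: return a list of problems (empty means acceptable)."""
    if name not in CATALOG:
        return [f"unknown tool: {name}"]
    params = CATALOG[name]["params"]
    problems = [f"missing argument: {p}" for p in params if p not in args]
    problems += [f"unexpected argument: {a}" for a in args if a not in params]
    problems += [
        f"wrong type for {p}"
        for p, types in params.items()
        if p in args and not isinstance(args[p], types)
    ]
    return problems


def invoke(name: str, args: dict[str, Any]) -> dict[str, Any]:
    """2. Invocation protocol: validate, run the tool, report success or failure."""
    problems = validate(name, args)
    if problems:
        return {"ok": False, "error": "; ".join(problems)}
    try:
        return {"ok": True, "result": CATALOG[name]["func"](**args)}
    except Exception as exc:  # a failing tool should not crash the agent
        return {"ok": False, "error": str(exc)}


def integrate(question: str, outcome: dict[str, Any]) -> str:
    """4. Result integration: fold the tool outcome into the final reply."""
    if outcome["ok"]:
        return f"{question} -> {outcome['result']}"
    return f"Could not answer '{question}': {outcome['error']}"


print(integrate("What is 2 + 3?", invoke("add", {"a": 2, "b": 3})))
print(integrate("What is 2 + ?", invoke("add", {"a": 2})))
```

Returning a structured `ok`/`error` outcome instead of raising lets the agent fall back gracefully, for example by retrying with corrected arguments or answering without the tool.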