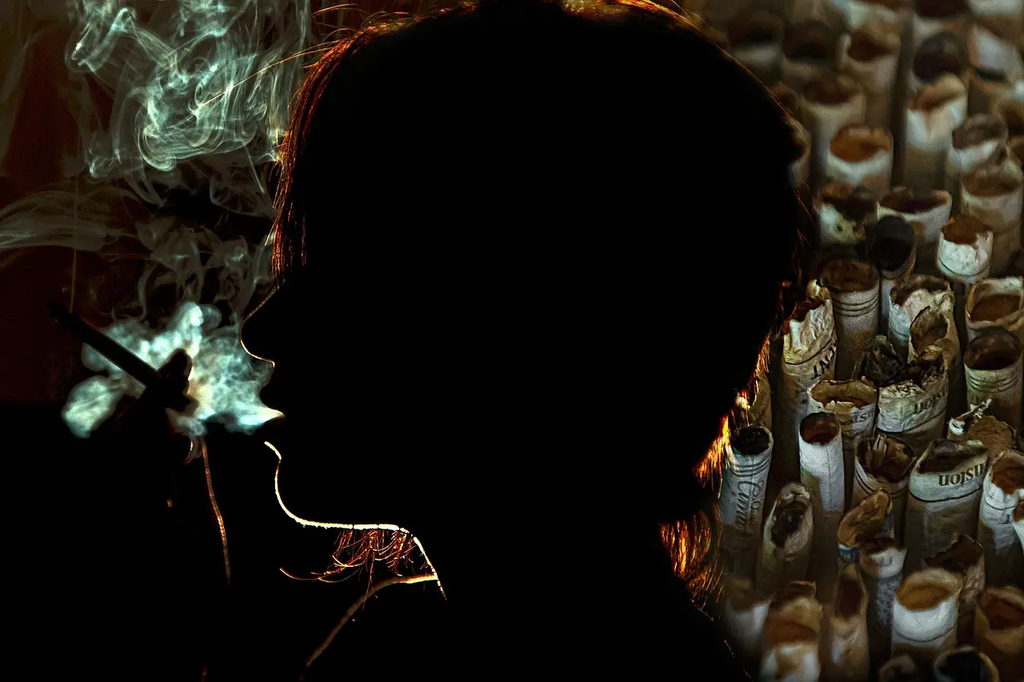

Toxicity and harmful content risks

Toxicity and harmful content risks are the dangers of exposure to offensive, abusive, or inappropriate material, particularly online. They include hate speech, cyberbullying, misinformation, and explicit content, all of which can damage a person’s mental health, well-being, or safety. These risks are prevalent on social media, forums, and other digital platforms, which is why effective moderation, user education, and robust safety measures are needed to protect vulnerable users and keep digital environments healthy.

💡 Key Takeaways

- Identify common forms of toxicity and harmful content online (hate speech, cyberbullying, misinformation, explicit material) and how they can arise in AI systems.

- Understand the potential impacts on mental health and well-being from exposure to toxic content.

- Recognize data-related risks in AI: biased or low-quality data, labeling inconsistencies, privacy concerns, and consent issues.

- Learn mitigation and governance strategies, including content moderation, safety policies, and careful data curation.

- Differentiate misinformation from disinformation and examine AI's role in generating or amplifying harmful content (e.g., deepfakes) and how to detect it.

❓ Frequently Asked Questions

What is toxicity in online content?

Toxicity refers to abusive, harassing, or hateful material that can harm others, including hate speech, threats, bullying, and slurs.

How is harmful content different from misinformation?

Harmful content targets individuals or groups with abuse or explicit material, while misinformation is false or misleading information. They can overlap, but describe different risks.

How can AI help identify toxicity and data concerns?

AI can flag potentially harmful posts by detecting patterns of abusive language and other risk signals. Such tools are useful, but they must be designed to minimize bias and protect privacy, and they are often paired with human review.
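As a rough illustration of pattern-based flagging with human review, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `RISK_PATTERNS` table, the `Flag` record, and the `flag_post` function are hypothetical names, and the two regular expressions are toy placeholders rather than a real policy lexicon. A production system would more likely rely on a trained classifier that has been evaluated for bias.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately tiny set of risk signals (illustration only).
# Real moderation systems use maintained lexicons and trained models.
RISK_PATTERNS = {
    "threat": re.compile(r"\b(i will|i'll|gonna) (hurt|find) you\b", re.IGNORECASE),
    "insult": re.compile(r"\b(idiot|loser)\b", re.IGNORECASE),
}


@dataclass
class Flag:
    text: str
    signals: list  # names of the risk patterns that matched
    action: str    # "allow" or "human_review"


def flag_post(text: str) -> Flag:
    """Check a post against each risk pattern and decide how to route it."""
    signals = [name for name, pattern in RISK_PATTERNS.items() if pattern.search(text)]
    # Matches are never removed automatically here; they are queued for a person.
    action = "human_review" if signals else "allow"
    return Flag(text=text, signals=signals, action=action)


if __name__ == "__main__":
    for post in ["Nice photo!", "You are such a loser"]:
        result = flag_post(post)
        print(result.action, result.signals)
```

Routing matches to `human_review` instead of deleting them reflects the point above: automated detection can misfire, so a person makes the final call.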

What can users do to stay safe and respond to toxic content?

Use safety tools (block/mute/report), avoid engaging with abusers, take breaks if distressed, and seek support or report concerns to the platform if needed.