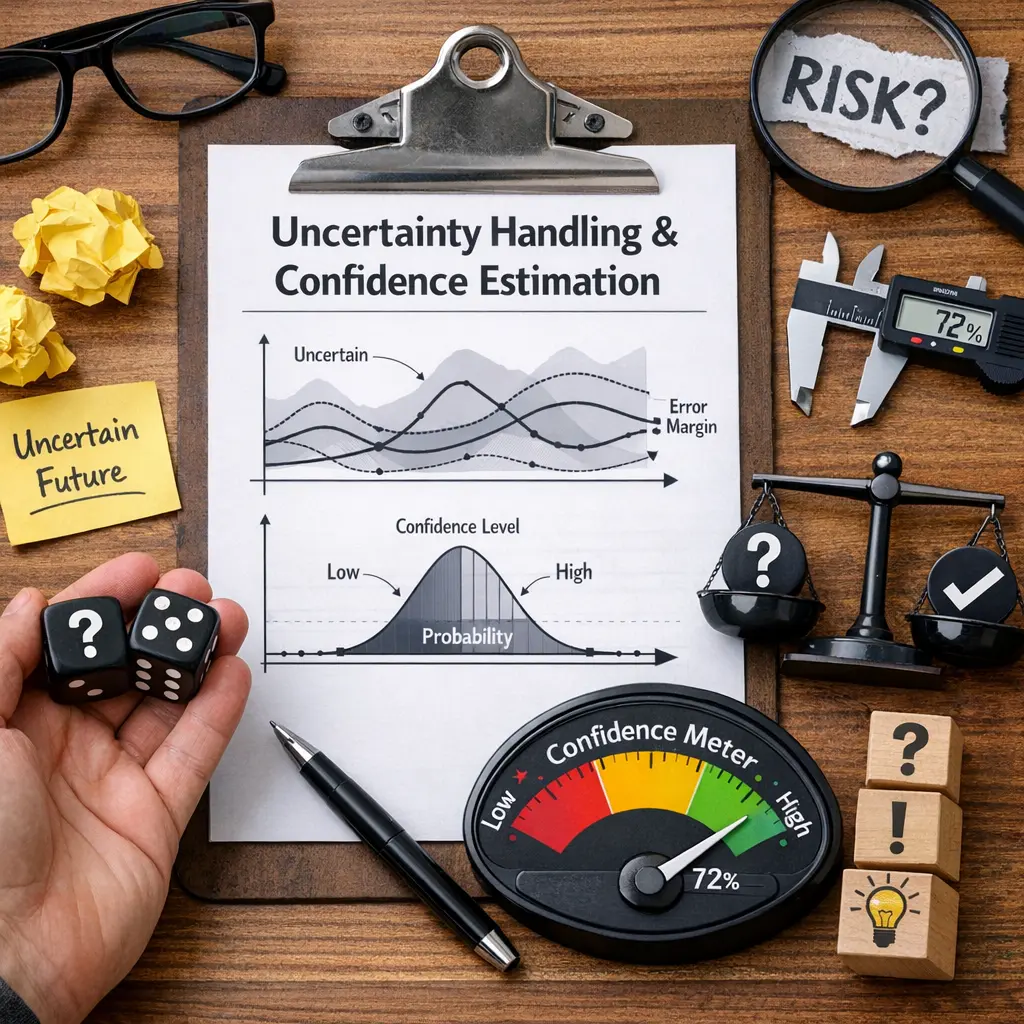

Uncertainty Handling & Confidence Estimation

In agent architecture, Uncertainty Handling & Confidence Estimation refers to the methods that let an intelligent system recognize, quantify, and manage its own uncertainty during decision-making. With a confidence estimate attached to each prediction or action, an agent can adapt its strategy, seek additional information, or defer a decision when the situation is ambiguous. This improves robustness, reliability, and trustworthiness: the agent can keep operating in dynamic, unpredictable environments while communicating how certain it is about its outputs.
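
The adapt, seek-information, or defer behaviour described above can be sketched as a simple confidence gate. This is a minimal illustration, not a prescribed design: the `Action` names, the `choose_action` function, and the threshold values 0.9 and 0.6 are all hypothetical, and in practice thresholds would be tuned to the cost of errors in the application.

```python
from enum import Enum


class Action(Enum):
    """Hypothetical responses an agent might choose between."""
    ACT = "act"
    SEEK_INFO = "seek_info"
    DEFER = "defer"


def choose_action(confidence: float,
                  act_threshold: float = 0.9,
                  ask_threshold: float = 0.6) -> Action:
    """Map a confidence score in [0, 1] to a response.

    The thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if confidence >= act_threshold:
        return Action.ACT          # confident enough to proceed
    if confidence >= ask_threshold:
        return Action.SEEK_INFO    # gather more evidence first
    return Action.DEFER            # hand off or postpone the decision
```

A gate like this is only as good as the confidence score feeding it, which is why calibration matters for the rest of the pipeline.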

💡 Key Takeaways

- Define uncertainty and confidence in model predictions and why they matter for decision making.

- Differentiate sources of uncertainty: aleatoric (inherent randomness) versus epistemic (model knowledge limits).

- Learn common techniques to estimate and quantify uncertainty, such as confidence intervals, probability calibration, Bayesian methods, and bootstrap approaches.

- Interpret and communicate uncertainty effectively to stakeholders and use it to set safe thresholds and risk-informed actions.
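
To make the aleatoric versus epistemic distinction concrete, one commonly used approach for classifier ensembles is an entropy decomposition: the entropy of the averaged prediction is treated as total uncertainty, the average entropy of the individual members as the aleatoric part, and the difference (how much the members disagree) as the epistemic part. The sketch below assumes an ensemble whose members each output a probability vector; the function names are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def decompose_uncertainty(member_probs):
    """Split ensemble uncertainty into (total, aleatoric, epistemic).

    member_probs: list of probability vectors, one per ensemble member,
    all for the same input. This is an assumed input format.
    """
    n = len(member_probs)
    k = len(member_probs[0])
    # Average the members' predictions class by class.
    mean_probs = [sum(m[j] for m in member_probs) / n for j in range(k)]
    total = entropy(mean_probs)                            # entropy of the mean
    aleatoric = sum(entropy(m) for m in member_probs) / n  # mean of entropies
    epistemic = total - aleatoric                          # member disagreement
    return total, aleatoric, epistemic
```

When every member predicts the same 50/50 split, the uncertainty is all aleatoric; when members are individually certain but contradict each other, it is all epistemic, which is a signal that more data or a better model could help.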

❓ Frequently Asked Questions

What is uncertainty handling?

The process of recognizing ambiguity in data and models and using methods to measure, manage, and communicate that uncertainty in decisions.

What is confidence estimation?

A way to quantify how sure we are about a result, often via intervals or probability scores that reflect uncertainty.

What is a confidence interval?

A range computed from sample data by a procedure that, over repeated samples, would contain the true value a specified proportion of the time (e.g., 95%).
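
As a small illustration, the sketch below computes a confidence interval for a sample mean using the normal approximation. It assumes a reasonably large sample of independent observations; for small samples a t-based interval would usually be preferred. The function name `mean_confidence_interval` is hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def mean_confidence_interval(sample, level=0.95):
    """Normal-approximation confidence interval for the mean of `sample`."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations")
    # Two-sided critical value, e.g. about 1.96 for a 95% level.
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    centre = mean(sample)
    half_width = z * stdev(sample) / math.sqrt(n)  # z * standard error
    return centre - half_width, centre + half_width
```

Raising `level` widens the interval: more coverage is bought with less precision.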

What are common methods to quantify uncertainty in predictions?

Confidence and prediction intervals, Bayesian posterior distributions, bootstrapping, and probability calibration.
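
Of these, bootstrapping is perhaps the easiest to sketch without extra libraries. The example below is a percentile bootstrap: it resamples the data with replacement many times, recomputes the statistic each time, and reads the interval off the sorted estimates. The function name, the default of 2000 resamples, and the fixed seed are illustrative assumptions.

```python
import random
from statistics import mean


def bootstrap_interval(sample, stat=mean, n_resamples=2000, level=0.95, seed=0):
    """Percentile bootstrap interval for `stat` computed on `sample`."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(sample)
    # Recompute the statistic on resamples drawn with replacement.
    estimates = sorted(
        stat(rng.choices(sample, k=n)) for _ in range(n_resamples)
    )
    alpha = (1.0 - level) / 2.0
    lo_idx = int(alpha * (n_resamples - 1))
    hi_idx = int((1.0 - alpha) * (n_resamples - 1))
    return estimates[lo_idx], estimates[hi_idx]
```

Because `stat` is a parameter, the same routine works for a median or any other statistic that takes a list, which is the main appeal of the bootstrap when no closed-form interval is available.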

Why is calibration important in prediction models?

Calibration checks whether predicted probabilities match observed outcomes, helping ensure reliable uncertainty estimates. For example, among events predicted with 80% probability, about 80% should actually occur.
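
One widely used summary of calibration is the expected calibration error (ECE): predictions are grouped into confidence bins, and the gap between average confidence and observed accuracy in each bin is averaged, weighted by bin size. The sketch below assumes equal-width bins and a list of booleans marking which predictions were correct; the function name and the default of 10 bins are assumptions.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width-bin ECE for confidence scores in [0, 1]."""
    if len(confidences) != len(correct):
        raise ValueError("inputs must have the same length")
    total = len(confidences)
    if total == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 falls in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, hit))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, h in members if h) / len(members)
        # Weight each bin's gap by the share of predictions it holds.
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece
```

A value near zero means stated confidence tracks observed accuracy; a large value suggests the scores should be recalibrated before they are used to set thresholds.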