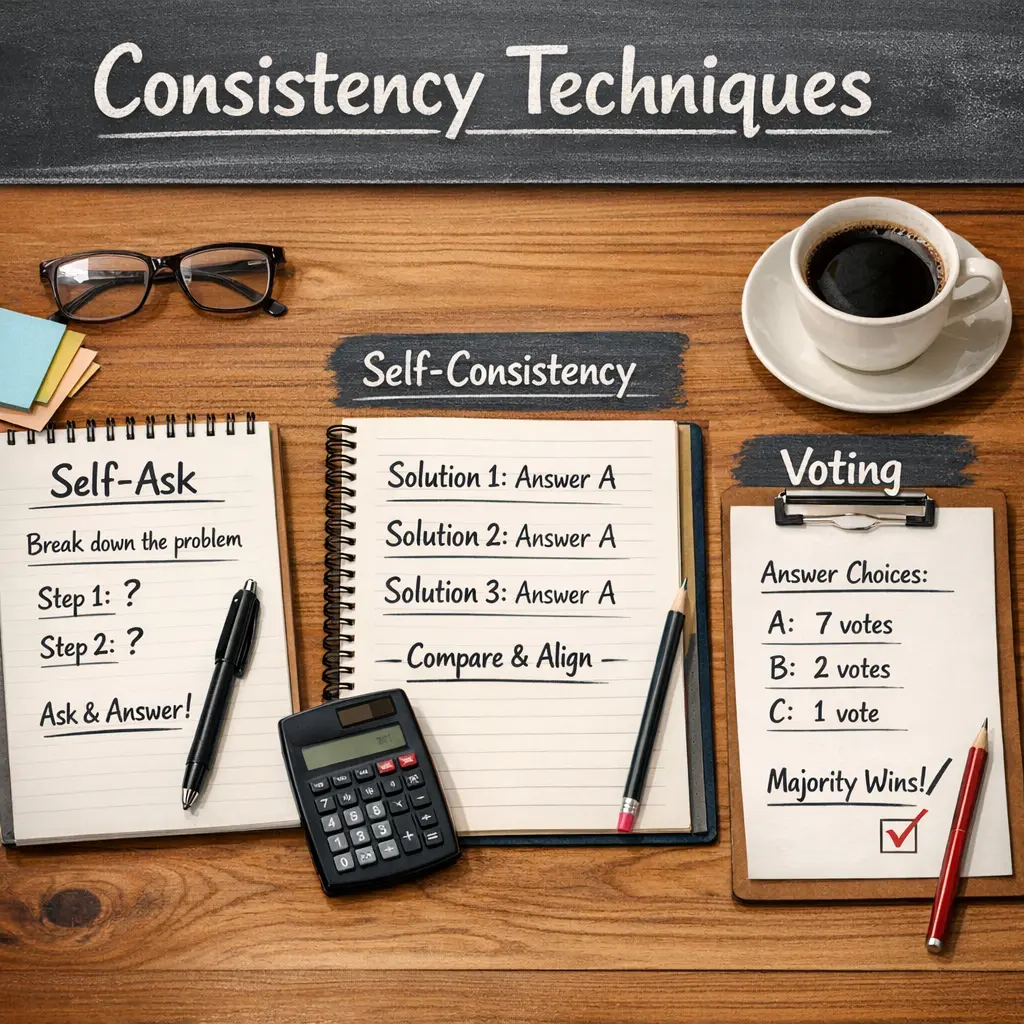

Consistency Techniques: Self-Ask, Self-Consistency, and Voting

Consistency techniques in Retrieval-Augmented Generation (RAG) aim to make generated responses more reliable. Self-Ask has the model pose and answer its own follow-up questions before committing to a final answer. Self-Consistency samples several reasoning paths for the same question and keeps the final answer they most often agree on. Voting combines responses from different model runs or retrievals and selects the answer with majority support. Together, these methods can improve accuracy and reduce run-to-run variability in RAG outputs.

💡 Key Takeaways

- Understand how Self-Ask prompts the model to pose and answer its own follow-up questions before tackling the main problem.

- Learn how Self-Consistency uses voting among multiple reasoning paths to improve the reliability of the final answer.

- Compare Self-Ask, Self-Consistency, and Voting to decide which technique fits a given task.

- Apply practical steps to implement these techniques: generate diverse reasoning branches and select the most coherent, consistent conclusion.

❓ Frequently Asked Questions

What is Self-Ask in consistency techniques?

Self-Ask is a prompting technique where the model generates and answers its own clarifying questions before solving the main task, helping to reveal assumptions and explore alternative paths.
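A minimal sketch of a Self-Ask loop may make this concrete. It assumes a hypothetical `ask_model` callable that takes a prompt string and returns text; in a RAG setup that callable might wrap retrieval plus generation. The prompt wording, the `DONE` stop token, and `fake_model` are illustrative assumptions, not part of any specific library.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, str]  # (follow-up question, intermediate answer)


def render(steps: List[Step]) -> str:
    """Format the follow-ups answered so far for inclusion in a prompt."""
    return "".join(
        f"Follow-up: {q}\nIntermediate answer: {a}\n" for q, a in steps
    )


def self_ask(
    question: str,
    ask_model: Callable[[str], str],  # hypothetical model interface
    max_steps: int = 3,
) -> Tuple[str, List[Step]]:
    """Ask follow-up questions, answer them, then give a final answer."""
    steps: List[Step] = []
    for _ in range(max_steps):
        prompt = (
            f"Question: {question}\n{render(steps)}"
            "Next follow-up question (or DONE):"
        )
        follow_up = ask_model(prompt).strip()
        if follow_up.upper() == "DONE":  # assumed stop signal
            break
        answer = ask_model(f"Answer briefly: {follow_up}").strip()
        steps.append((follow_up, answer))
    final = ask_model(
        f"Question: {question}\n{render(steps)}Final answer:"
    ).strip()
    return final, steps


def fake_model(prompt: str) -> str:
    """Deterministic stand-in for a real model, for demonstration only."""
    if prompt.startswith("Answer briefly:"):
        return "Paris"
    if prompt.endswith("Final answer:"):
        return "Paris"
    if "Intermediate answer:" in prompt:
        return "DONE"
    return "What is the capital of France?"


final, steps = self_ask("Which city hosts the French government?", fake_model)
print(final, steps)
```

Returning the list of follow-ups alongside the final answer keeps the intermediate steps inspectable, which is the main reason to use Self-Ask in the first place.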

What is Self-Consistency?

Self-Consistency creates multiple plausible reasoning paths and uses their final answers to select a consensus result, increasing reliability over a single chain-of-thought.
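The selection step can be sketched as a majority vote over sampled final answers. This is an illustration under assumptions: `sample_answer` is a hypothetical callable that runs one reasoning path (typically with a nonzero sampling temperature) and returns only its final answer, and `normalize` is a deliberately simple placeholder for answer canonicalization.

```python
from collections import Counter
from typing import Callable, Tuple


def normalize(answer: str) -> str:
    """Canonicalize an answer so trivially different strings match."""
    return answer.strip().lower()


def self_consistency(
    question: str,
    sample_answer: Callable[[str], str],  # hypothetical: one sampled path
    n_samples: int = 5,
) -> Tuple[str, float]:
    """Sample several final answers and return the most frequent one,
    together with the fraction of samples that agreed with it."""
    answers = [normalize(sample_answer(question)) for _ in range(n_samples)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n_samples


# Canned outputs stand in for five sampled reasoning paths.
canned = iter(["42", "42", "41", " 42", "40"])
answer, agreement = self_consistency(
    "What is 6 * 7?", lambda q: next(canned), n_samples=5
)
print(answer, agreement)  # prints: 42 0.6
```

The agreement fraction is a cheap signal worth keeping: a low value suggests the sampled paths disagree and the answer deserves less trust.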

How does Voting work in this context?

Voting collects several candidate answers or reasoning traces and selects the most common or highest-scoring result, reducing the impact of any single incorrect path.
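Both variants mentioned above, most common and highest scoring, can be expressed as one weighted vote. The sketch below assumes each candidate arrives as an `(answer, score)` pair; where the scores come from (a reranker, a model confidence, a retrieval score) is left open, and giving every candidate a score of 1 reduces it to a plain majority vote.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def weighted_vote(candidates: Iterable[Tuple[str, float]]) -> str:
    """Sum the scores per distinct answer and return the top-scoring one."""
    totals: Dict[str, float] = defaultdict(float)
    for answer, score in candidates:
        totals[answer] += score
    if not totals:
        raise ValueError("weighted_vote needs at least one candidate")
    return max(totals, key=totals.get)


# Two moderately scored votes for "B" outweigh one confident vote for "A".
scored = [("A", 0.9), ("B", 0.5), ("B", 0.5), ("C", 0.2)]
print(weighted_vote(scored))  # prints: B

# With uniform scores the same function is a simple majority vote.
print(weighted_vote([("A", 1.0), ("B", 1.0), ("A", 1.0)]))  # prints: A
```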

When should I apply these techniques, and can they be used together?

Use Self-Ask when a question hides intermediate steps that need to be surfaced, Self-Consistency when you want to sample multiple reasoning paths, and Voting to settle the final answer by consensus. They can be used together: Self-Ask structures each reasoning path, Self-Consistency samples several such paths, and Voting picks the final answer.
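One way the combination might look in code: run a Self-Ask style routine several times, vote on the final answers, and keep the traces that support the winner. The `run_self_ask` callable is hypothetical; it is assumed to return a `(final_answer, trace)` pair for one sampled run.

```python
from collections import Counter
from typing import Callable, List, Tuple

Trace = List[Tuple[str, str]]  # (follow-up question, intermediate answer)


def consensus_over_runs(
    question: str,
    run_self_ask: Callable[[str], Tuple[str, Trace]],  # hypothetical
    n_runs: int = 5,
) -> Tuple[str, float, List[Trace]]:
    """Vote across several Self-Ask runs and return the winning answer,
    its share of the votes, and the traces that led to it."""
    runs = [run_self_ask(question) for _ in range(n_runs)]
    keyed = [(answer.strip().lower(), trace) for answer, trace in runs]
    winner, votes = Counter(key for key, _ in keyed).most_common(1)[0]
    supporting = [trace for key, trace in keyed if key == winner]
    return winner, votes / n_runs, supporting


# Canned runs stand in for three sampled Self-Ask executions.
canned_runs = iter([
    ("Paris", [("Capital of France?", "Paris")]),
    ("Lyon", [("Largest city near the Alps?", "Lyon")]),
    ("paris ", [("Seat of the French government?", "Paris")]),
])
winner, share, traces = consensus_over_runs(
    "Which city hosts the French government?",
    lambda q: next(canned_runs),
    n_runs=3,
)
print(winner, share, len(traces))
```

Keeping the supporting traces lets a reviewer check why the consensus answer won, instead of trusting the vote count alone.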