Context Window Management & Token Optimization

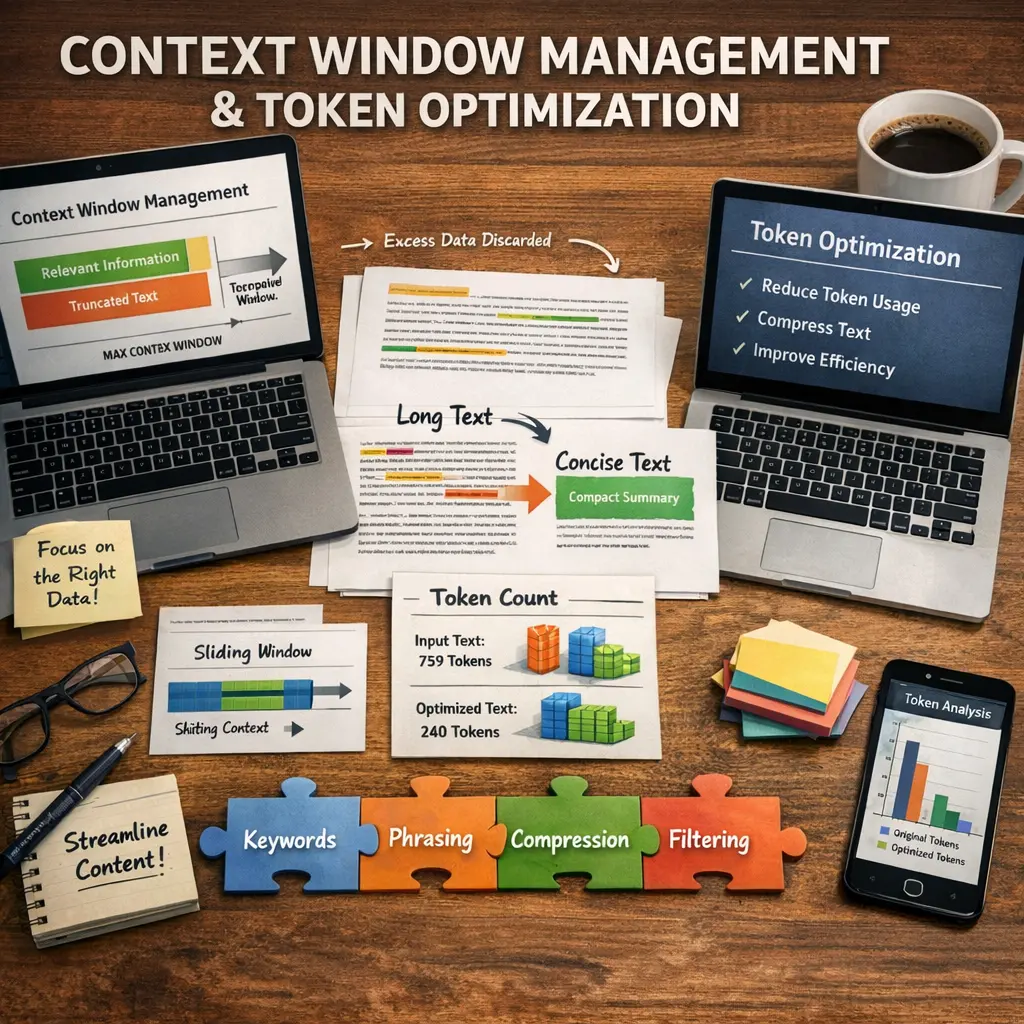

In Retrieval-Augmented Generation (RAG), context window management and token optimization address a hard limit: a language model can only read a fixed number of tokens at once (its context window). The task is to select, compress, and order the most relevant retrieved content so that it fits within that limit. Done well, the model receives concise, high-quality context, which improves the accuracy and coherence of its responses and avoids spending tokens on redundant or irrelevant material.

💡 Key Takeaways

- Understand what a context window is and how tokens fit inside it

- Learn how tokenization shapes token counts and model limits

- Explore practical techniques to fit inputs within a fixed context window (truncation, summarization, and chunking)

- Assess trade-offs between context size, latency, and answer quality for token optimization
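
Two of the techniques named above, truncation and chunking, can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it assumes a whitespace split stands in for a real tokenizer (model tokenizers produce subword tokens, so real counts will differ), and `tokenize`, `truncate`, and `chunk` are hypothetical helper names. Summarization is left out because it needs a model call.

```python
def tokenize(text: str) -> list[str]:
    # Assumption: whitespace split as a stand-in for a real subword tokenizer.
    return text.split()


def truncate(text: str, max_tokens: int) -> str:
    # Truncation: keep only the first max_tokens tokens and drop the rest.
    return " ".join(tokenize(text)[:max_tokens])


def chunk(text: str, size: int, overlap: int = 0) -> list[str]:
    # Chunking: split text into windows of `size` tokens.
    # Consecutive windows share `overlap` tokens so context is not cut cold.
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    tokens = tokenize(text)
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + size]))
        if start + size >= len(tokens):
            break  # the last window already reached the end of the text
    return chunks
```

Truncation is the cheapest option but discards everything past the cut, while overlapping chunks keep all of the text at the cost of some repeated tokens.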

❓ Frequently Asked Questions

What is Retrieval-Augmented Generation (RAG)?

A framework that combines external document retrieval with language model generation to improve factual accuracy and give the model access to up-to-date information.

Why is the context window size important in RAG?

The context window is the maximum number of tokens a model can attend to at once. It caps how much retrieved content can influence the answer, and so determines how selective token selection has to be.
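
The arithmetic behind this limit can be made concrete. The sketch below assumes, as is common, that the prompt and the generated output share the same window, so the space left for retrieved content is whatever remains after both are reserved. The function name `retrieval_budget` and the numbers in the example are illustrative, not taken from any particular model.

```python
def retrieval_budget(context_window: int, prompt_tokens: int, max_output_tokens: int) -> int:
    # Tokens left for retrieved content once the fixed prompt (instructions
    # plus the user's question) and the reserved output space are subtracted.
    remaining = context_window - prompt_tokens - max_output_tokens
    return max(remaining, 0)  # never report a negative budget


# Illustrative numbers only: an 8192-token window, a 600-token prompt,
# and 1024 tokens reserved for the answer.
budget = retrieval_budget(context_window=8192, prompt_tokens=600, max_output_tokens=1024)
print(budget)  # 6568
```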

What is token optimization in RAG?

Techniques for selecting, compressing, and organizing retrieved content so the most relevant tokens fit within the model’s context window.
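
One simple way to do the "fit within the window" step is greedy packing: walk the retrieved chunks from most to least relevant and keep each one that still fits in the token budget. This is a sketch under stated assumptions, not a definitive method: the input list is assumed to be sorted by relevance already, token cost is approximated by a whitespace word count, and `pack_chunks` is a hypothetical name.

```python
def pack_chunks(ranked_chunks: list[str], budget: int) -> list[str]:
    # ranked_chunks: assumed to be ordered from most to least relevant.
    # budget: maximum total tokens allowed for retrieved content.
    selected = []
    used = 0
    for chunk in ranked_chunks:
        cost = len(chunk.split())  # assumption: word count approximates token count
        if used + cost > budget:
            continue  # too large for the remaining space; a later, smaller chunk may fit
        selected.append(chunk)
        used += cost
    return selected
```

Skipping oversized chunks rather than stopping at the first one that does not fit uses more of the budget, though it can admit a lower-ranked chunk ahead of a higher-ranked one.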

How are relevant tokens chosen from retrieved documents?

By scoring each passage's relevance to the query, ranking passages by that score, and then summarizing or condensing the top results so that only the most salient information is kept.
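
The scoring and ranking steps can be illustrated with a deliberately simple relevance measure. The sketch below assumes lexical overlap (the fraction of query terms that appear in a passage) as a stand-in for the embedding similarity or reranking models that real systems typically use; `terms`, `relevance`, and `rank` are hypothetical names.

```python
import re


def terms(text: str) -> set[str]:
    # Lowercase the text and extract alphanumeric words as a set of terms.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(query: str, passage: str) -> float:
    # Fraction of the query's distinct terms that also occur in the passage.
    query_terms = terms(query)
    if not query_terms:
        return 0.0
    return len(query_terms & terms(passage)) / len(query_terms)


def rank(query: str, passages: list[str], top_k: int) -> list[str]:
    # Sort passages by descending relevance and keep the top_k.
    return sorted(passages, key=lambda p: relevance(query, p), reverse=True)[:top_k]
```

For the query "context window size", a passage that mentions all three words scores 1.0, one that mentions only "window" scores about 0.33, and one that shares no words scores 0.0.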