Contrastive Learning for Bi-Encoder Retrievers

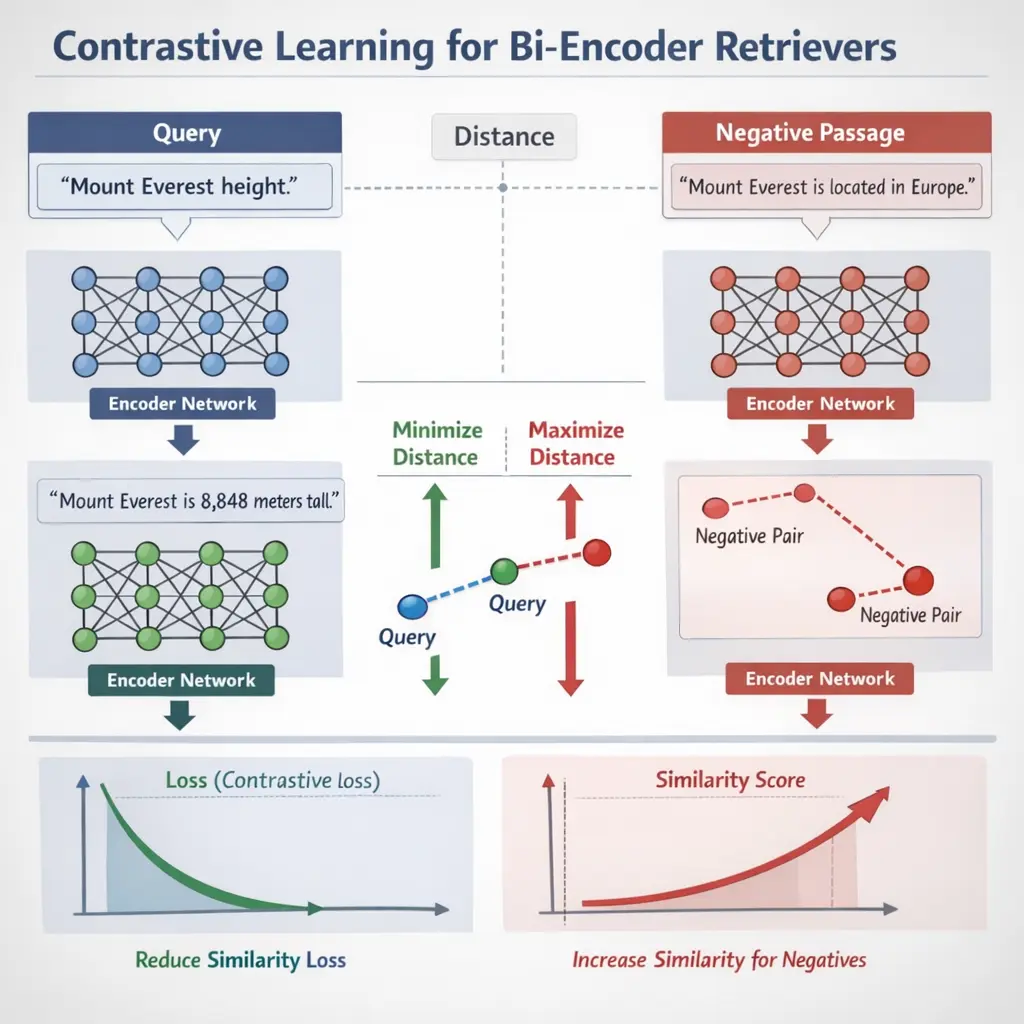

Contrastive learning for bi-encoder retrievers is a training technique used in Retrieval-Augmented Generation (RAG) to help a bi-encoder distinguish relevant from irrelevant documents. The model is trained to maximize the similarity between a query and its positive passages while minimizing its similarity to negative passages. The resulting embeddings match queries to documents more reliably, which improves retrieval accuracy and, in turn, the quality of downstream generation.

💡 Key Takeaways

- Understand what a bi-encoder retriever is and how it encodes queries and documents into a shared embedding space for fast retrieval.

- Grasp contrastive learning basics: form positive and negative pairs, then optimize a contrastive loss that pulls relevant embeddings together and pushes irrelevant ones apart.

- Learn practical training techniques for bi-encoder retrievers such as in-batch negatives, hard negatives, and data augmentation to improve robustness.

- Know how to evaluate and deploy bi-encoder retrievers with metrics like recall@k and considerations for efficiency and scalability.
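The recall@k metric mentioned in the last takeaway can be sketched in a few lines. This is a minimal illustration, assuming each query comes with a ranked list of retrieved document IDs and a set of known relevant IDs; the function names and the sample IDs are hypothetical.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("relevant_ids must be non-empty")
    top_k = set(ranked_ids[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(runs, k):
    """Average recall@k over (ranked_ids, relevant_ids) pairs, one per query."""
    return sum(recall_at_k(ranked, rel, k) for ranked, rel in runs) / len(runs)


# Hypothetical retrieval result for one query with two relevant documents.
ranked = ["d3", "d1", "d7", "d2"]
relevant = {"d1", "d2"}
print(recall_at_k(ranked, relevant, 2))  # 0.5: only d1 is in the top 2
print(recall_at_k(ranked, relevant, 4))  # 1.0: both d1 and d2 are in the top 4
```

Recall@k ignores the order within the top k, so it is usually reported for several values of k.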

❓ Frequently Asked Questions

What is a bi-encoder retriever?

A model that encodes queries and documents separately into fixed-size embeddings, enabling fast approximate nearest-neighbor search using cosine similarity or dot product.
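The scoring step can be illustrated with a small sketch. It assumes the embeddings have already been produced by some encoder (the vectors below are made-up two-dimensional values, and `top_k` is a hypothetical helper). It also uses exact brute-force search for clarity, whereas a production system would typically use an approximate nearest-neighbor index.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def top_k(query_vec, doc_vecs, k):
    """Return the IDs of the k documents most similar to the query."""
    scored = [(cosine(query_vec, vec), doc_id) for doc_id, vec in doc_vecs.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Assumed, precomputed document embeddings (toy values for illustration only).
doc_vecs = {"d1": [1.0, 0.0], "d2": [0.0, 1.0], "d3": [0.7, 0.7]}
query_vec = [0.9, 0.1]
print(top_k(query_vec, doc_vecs, 2))  # ['d1', 'd3']
```

Because documents are encoded independently of the query, `doc_vecs` can be computed once and reused for every search.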

What is contrastive learning in this setting?

A training objective that pulls together representations of matching query–document pairs (positives) and pushes apart non-matching pairs (negatives), shaping an embedding space suited for retrieval.

How are positives and negatives defined for bi-encoder contrastive learning?

Positives are relevant query–document pairs. Negatives are non-relevant documents sampled from the pool or batch; hard negative mining can be used to improve learning.
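As a rough sketch of how negatives might be assembled for one training batch, the function below treats every other query's positive as an in-batch negative and then appends any mined hard negatives. The function name, the ID-based data layout, and the `hard_negatives` mapping are assumptions made for illustration.

```python
def in_batch_candidates(pairs, hard_negatives=None):
    """Build (query_id, positive_id, negative_ids) triples for one batch.

    pairs: list of (query_id, positive_doc_id) tuples.
    hard_negatives: optional dict mapping query_id to a list of mined doc IDs.
    """
    hard_negatives = hard_negatives or {}
    examples = []
    for query_id, pos_id in pairs:
        negatives = []
        # In-batch negatives: the positives that belong to the other queries.
        for _, doc_id in pairs:
            if doc_id != pos_id and doc_id not in negatives:
                negatives.append(doc_id)
        # Hard negatives: documents mined as similar to the query but not relevant.
        for doc_id in hard_negatives.get(query_id, []):
            if doc_id != pos_id and doc_id not in negatives:
                negatives.append(doc_id)
        examples.append((query_id, pos_id, negatives))
    return examples


batch = [("q1", "d1"), ("q2", "d2"), ("q3", "d3")]
mined = {"q1": ["d9"]}  # hypothetical output of a hard negative mining step
for example in in_batch_candidates(batch, mined):
    print(example)
```

In-batch negatives are cheap because their embeddings are already computed for the batch, but they are not guaranteed to be truly irrelevant; a document that is another query's positive may also be relevant to the current query.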

Why use contrastive learning for bi-encoder retrievers?

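It yields dense embeddings that capture semantic similarity. Because document embeddings can be precomputed and indexed, retrieval at inference time is fast and scales well with data, and it avoids running an expensive cross-encoder over every query–document pair during search.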

What is a common loss used in contrastive IR training?

InfoNCE loss (known as NT-Xent in its temperature-scaled, cosine-similarity form), which maximizes the similarity of the positive pair relative to the negatives within a batch.
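A minimal, framework-free sketch of this loss with in-batch negatives follows. It assumes a square similarity matrix in which `sim[i][j]` is the similarity between query `i` and document `j`, with document `i` being the positive for query `i`. The temperature value of 0.05 is an illustrative default, not a recommendation from the source.

```python
import math


def info_nce(sim, temperature=0.05):
    """Mean InfoNCE loss over a batch.

    sim[i][j] is the similarity of query i and document j; the diagonal
    entries are the positive pairs, all other entries act as negatives.
    """
    total = 0.0
    for i, row in enumerate(sim):
        logits = [s / temperature for s in row]
        # Numerically stable log-sum-exp over all candidates for query i.
        m = max(logits)
        log_denominator = m + math.log(sum(math.exp(x - m) for x in logits))
        # Cross-entropy with the positive (index i) as the target class.
        total += log_denominator - logits[i]
    return total / len(sim)


aligned = [[0.9, 0.1], [0.2, 0.8]]   # positives score highest
shuffled = [[0.1, 0.9], [0.8, 0.2]]  # negatives score highest
print(info_nce(aligned) < info_nce(shuffled))  # True
```

The loss is a softmax cross-entropy in which each query must pick out its own positive from all documents in the batch, so a larger batch supplies more negatives per query. A real training loop would compute the same quantity with an automatic-differentiation framework so that gradients reach the encoder.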