Counterfactual and Causal Evaluation of RAG Systems

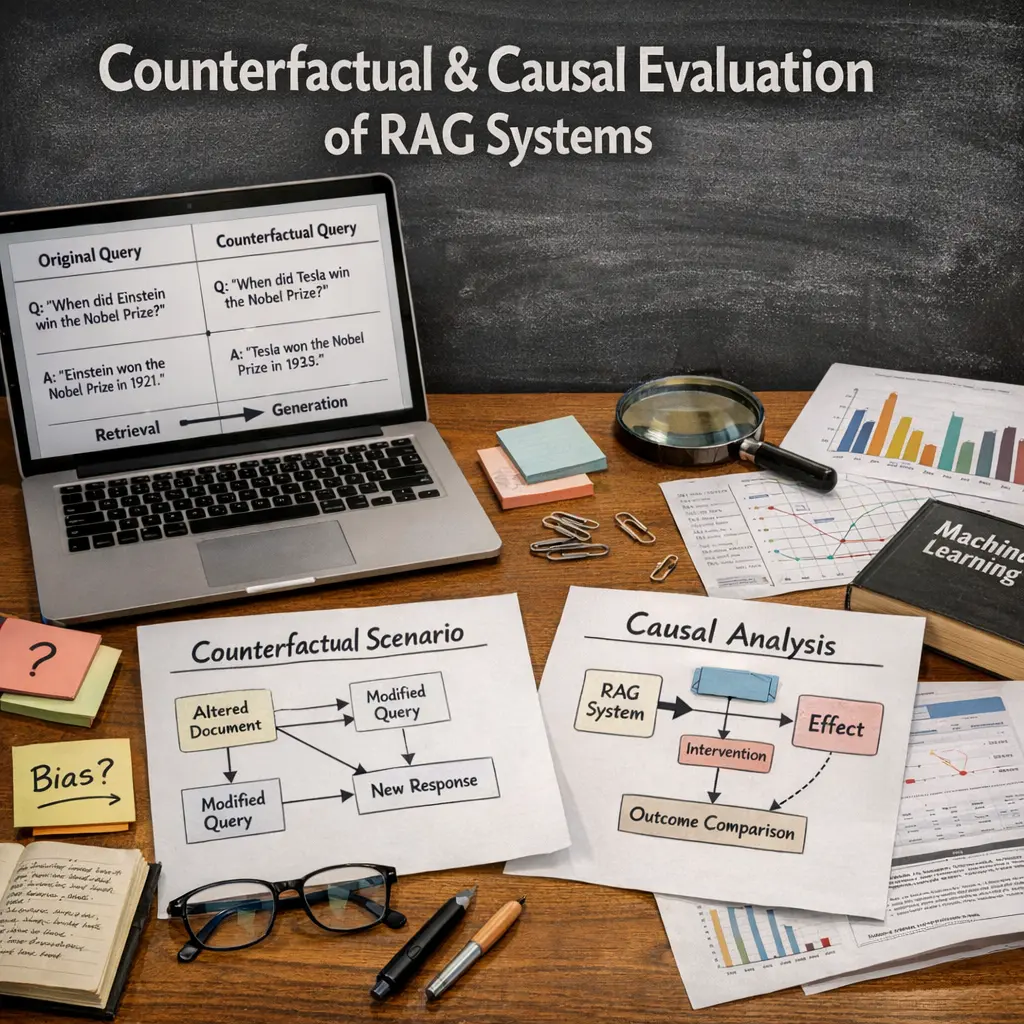

Counterfactual and causal evaluation of Retrieval-Augmented Generation (RAG) systems analyzes how changes to the query or to the retrieved documents change the generated output. Rather than reporting only aggregate performance metrics, it isolates the causal effect of individual retrievals on the final response. This shows which retrieved passages actually influence the answer, which makes the system easier to debug, optimize, and understand.

💡 Key Takeaways

- Understand what Retrieval-Augmented Generation (RAG) systems are and how their retrieval and generation components interact

- Learn the difference between counterfactual evaluation and causal evaluation in RAG systems and when to apply each

- Explore practical experimental designs to quantify the causal impact of retrieval decisions and generated content on answer quality

- Identify common metrics and pitfalls in counterfactual/causal RAG evaluation and how to interpret results for system improvement

❓ Frequently Asked Questions

What is a RAG system?

A retrieval-augmented generation system combines a retriever that fetches relevant documents with a generator that uses those documents to produce grounded answers.

What does counterfactual evaluation mean in the context of RAG systems?

Counterfactual evaluation asks how outputs would change if inputs or retrieved evidence were different, testing robustness and dependence on specific sources or queries.
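
As a rough illustration, a counterfactual check can be sketched as swapping one retrieved document for an edited version and seeing whether the answer follows the edit. Everything here is an assumption made for the example: `toy_generate` is a hypothetical stand-in for a real generator (it simply echoes the first document that mentions the last word of the query), and `counterfactual_swap` is an illustrative helper, not part of any library.

```python
from typing import Callable, List, Tuple

def toy_generate(query: str, docs: List[str]) -> str:
    """Hypothetical generator: return the first document that mentions
    the last word of the query, or a fallback if none does."""
    key = query.rstrip("?").split()[-1].lower()
    for doc in docs:
        if key in doc.lower():
            return doc
    return "I don't know."

def counterfactual_swap(
    generate: Callable[[str, List[str]], str],
    query: str,
    docs: List[str],
    index: int,
    replacement: str,
) -> Tuple[str, str, bool]:
    """Answer the query on the original evidence and on evidence where
    docs[index] is replaced; report whether the answer changed."""
    original = generate(query, docs)
    edited = docs[:index] + [replacement] + docs[index + 1:]
    counterfactual = generate(query, edited)
    return original, counterfactual, original != counterfactual

docs = ["The capital of France is Paris.", "France borders Spain."]
query = "What is the capital of France?"

# Edit the document the answer relies on: the answer should follow the edit.
orig, cf, changed = counterfactual_swap(
    toy_generate, query, docs, 0, "The capital of France is Lyon."
)

# Edit a document the answer does not rely on: the answer should stay put.
_, _, changed_other = counterfactual_swap(
    toy_generate, query, docs, 1, "Spain is sunny."
)
```

With a real generator, an answer that ignores an edit to its supposed source suggests the model is answering from parametric memory rather than from the retrieved evidence.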

What is causal evaluation in RAG systems?

Causal evaluation seeks to identify cause–effect relationships between retrieval, grounding, and generation, often via interventions or controlled experiments to see how changes in retrieved content affect answers.
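
One simple way to express such an intervention is a paired comparison: score every query once with retrieval switched on and once with it switched off, then average the per-query differences. The sketch below assumes a toy setup. `GOLD`, `INDEX`, `retrieve`, `no_retrieval`, and `toy_score` are hypothetical names invented for the example, and `toy_score` stands in for "run the generator, then grade the answer".

```python
from statistics import mean
from typing import Callable, Dict, List

# Hypothetical toy data: gold answers and a tiny per-query "index".
GOLD: Dict[str, str] = {
    "capital of France": "Paris",
    "capital of Japan": "Tokyo",
    "capital of Peru": "Lima",
}
INDEX: Dict[str, List[str]] = {
    "capital of France": ["Paris is the capital of France."],
    "capital of Japan": ["Tokyo is the capital of Japan."],
    "capital of Peru": [],  # the retriever finds nothing useful here
}

def retrieve(query: str) -> List[str]:
    """Treatment condition: use whatever the index returns."""
    return INDEX[query]

def no_retrieval(query: str) -> List[str]:
    """Control condition: the intervention removes all evidence."""
    return []

def toy_score(query: str, docs: List[str]) -> float:
    """Stand-in for generate-then-grade: 1.0 if the gold answer
    appears in the evidence, else 0.0."""
    return 1.0 if any(GOLD[query] in d for d in docs) else 0.0

def average_effect(
    score: Callable[[str, List[str]], float],
    queries: List[str],
    treatment: Callable[[str], List[str]],
    control: Callable[[str], List[str]],
) -> float:
    """Mean per-query score difference between the two conditions."""
    return mean([score(q, treatment(q)) - score(q, control(q)) for q in queries])

effect = average_effect(toy_score, list(GOLD), retrieve, no_retrieval)
```

The difference can be read causally only because the experimenter sets the condition and everything else is held fixed. With a sampling-based generator, each condition would need repeated runs or a fixed seed.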

How can I perform practical counterfactual or causal evaluation for RAG?

Define interventions (e.g., modify queries, replace or remove sources), run controlled experiments, and compare metrics like factuality, faithfulness, and answer accuracy to determine the impact of retrieved content on outputs.
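
The "remove a source" intervention can be sketched as a leave-one-out ablation: answer once with all documents, then once per document with that document removed, and record how much each metric drops. This is a minimal sketch under stated assumptions. `toy_generate` and `accuracy` are hypothetical placeholders for a real generator and real factuality, faithfulness, or accuracy scorers.

```python
from typing import Callable, Dict, List

def toy_generate(query: str, docs: List[str]) -> str:
    """Hypothetical generator: echo the first document that mentions
    the last word of the query."""
    key = query.rstrip("?").split()[-1].lower()
    return next((d for d in docs if key in d.lower()), "I don't know.")

def accuracy(answer: str, reference: str) -> float:
    """Toy metric: 1.0 if the reference string appears in the answer."""
    return 1.0 if reference in answer else 0.0

def leave_one_out(
    generate: Callable[[str, List[str]], str],
    metrics: Dict[str, Callable[[str, str], float]],
    query: str,
    docs: List[str],
    reference: str,
) -> List[Dict[str, float]]:
    """For each document, return the drop in every metric when that
    document is removed (baseline score minus ablated score)."""
    base_answer = generate(query, docs)
    baseline = {name: fn(base_answer, reference) for name, fn in metrics.items()}
    report = []
    for i in range(len(docs)):
        ablated_docs = docs[:i] + docs[i + 1:]
        answer = generate(query, ablated_docs)
        report.append(
            {name: baseline[name] - fn(answer, reference) for name, fn in metrics.items()}
        )
    return report

docs = ["The capital of France is Paris.", "France uses the euro."]
report = leave_one_out(
    toy_generate,
    {"accuracy": accuracy},
    "What is the capital of France?",
    docs,
    reference="Paris",
)
```

A large drop marks a document the answer depends on, and a drop near zero marks one that could be removed without changing the result. Further metrics can be added as extra entries in the `metrics` dictionary.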