Dense Retriever Training: Contrastive Learning and Hard Negatives

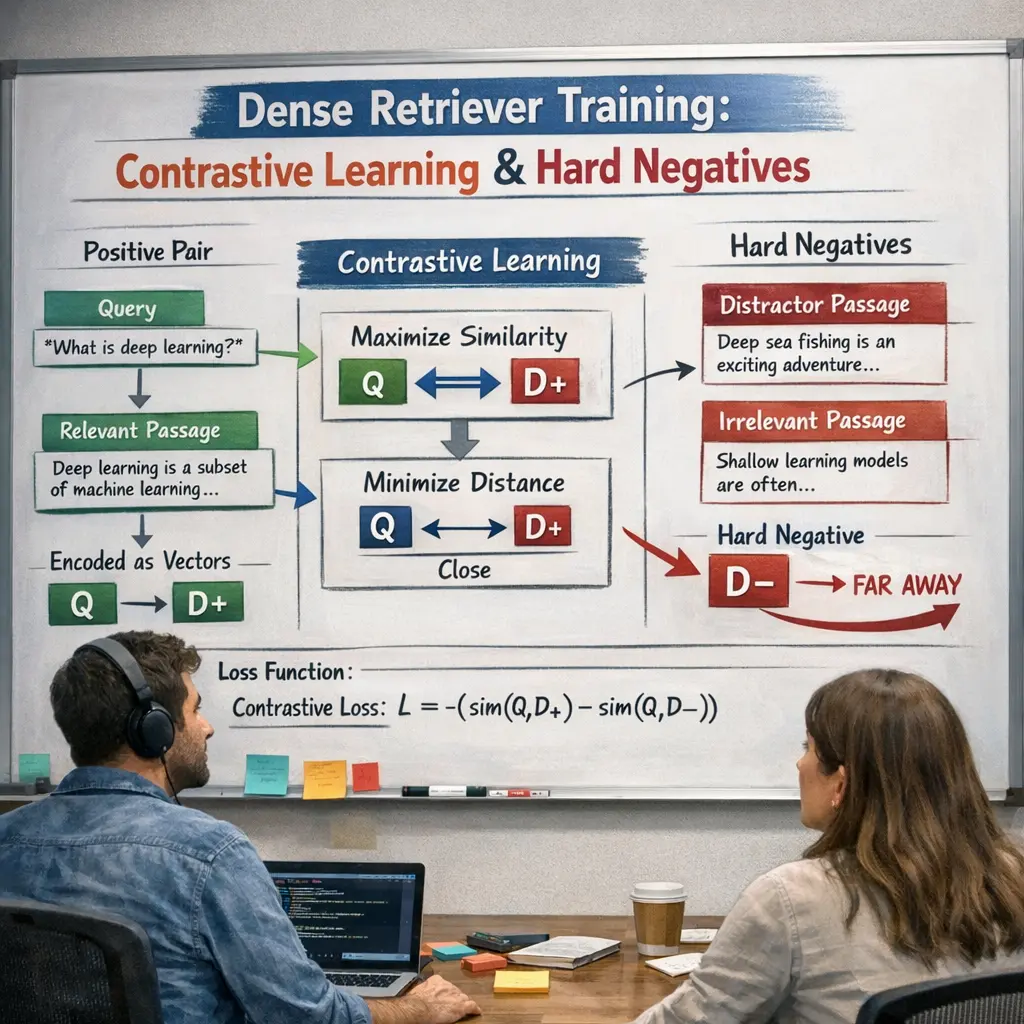

Training a dense retriever with contrastive learning teaches the model to distinguish relevant (positive) query-document pairs from irrelevant (negative) ones. Hard negatives, examples that appear similar to a relevant document but are incorrect, are especially valuable because they force the model to learn subtle distinctions. In Retrieval-Augmented Generation (RAG), this training improves the retriever’s ability to fetch accurate, contextually relevant documents, which the generator then uses to produce better-informed and more precise responses.

💡 Key Takeaways

- Understand how dense retrievers use learned embeddings to match queries with relevant documents.

- Learn the basics of contrastive learning for retrievers, including positive and negative pairs and the role of the loss.

- Grasp what hard negatives are and why focusing on them improves discrimination during training.

- Get practical tips for training with contrastive loss and hard negatives, including mining strategies, batching, and evaluating retrieval performance.

❓ Frequently Asked Questions

What is a dense retriever in information retrieval?

A dense retriever encodes queries and documents into continuous vector representations and retrieves by measuring vector similarity, rather than relying on sparse keyword matching.
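To make the idea of retrieval by vector similarity concrete, here is a minimal sketch in plain Python. The three-dimensional vectors, the document ids, and the helper names `cosine` and `retrieve` are illustrative assumptions; a real system would get its embeddings from a trained encoder and would usually search them with an approximate nearest-neighbor index.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def retrieve(query_vec, doc_vecs, k=2):
    """Return the ids of the k documents most similar to the query."""
    ranked = sorted(
        doc_vecs,
        key=lambda doc_id: cosine(query_vec, doc_vecs[doc_id]),
        reverse=True,
    )
    return ranked[:k]

# Toy embeddings (assumed values); a trained encoder would produce these.
doc_vecs = {
    "doc_a": [0.9, 0.1, 0.0],
    "doc_b": [0.0, 1.0, 0.0],
    "doc_c": [0.7, 0.3, 0.1],
}
query_vec = [1.0, 0.0, 0.0]

top = retrieve(query_vec, doc_vecs, k=2)
print(top)
```

Ranking depends only on the geometry of the embeddings, so a document can rank highly without sharing any keywords with the query.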

What does contrastive learning mean in dense retriever training?

Contrastive learning trains the model to bring the query and its relevant document representations closer while pushing apart non-relevant ones, using a contrastive loss like InfoNCE.
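As a rough illustration of this objective, the sketch below computes an InfoNCE-style loss over a small batch in plain Python. It assumes dot-product similarity, a temperature of 0.05, and that the document at index `i` is the positive for the query at index `i` while every other document in the batch serves as a negative. The function name `info_nce` and these settings are assumptions made for the example, not a specific library's API.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def info_nce(query_vecs, doc_vecs, temperature=0.05):
    """Mean InfoNCE loss with in-batch negatives.

    query_vecs[i] is paired with doc_vecs[i]; all other documents
    in the batch are treated as negatives for query i.
    """
    losses = []
    for i, q in enumerate(query_vecs):
        logits = [dot(q, d) / temperature for d in doc_vecs]
        # Numerically stable log-sum-exp over all documents in the batch.
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(x - m) for x in logits))
        # Negative log-probability of the positive document.
        losses.append(log_denom - logits[i])
    return sum(losses) / len(losses)

queries = [[1.0, 0.0], [0.0, 1.0]]
aligned_docs = [[1.0, 0.0], [0.0, 1.0]]   # each positive matches its query
shuffled_docs = [[0.0, 1.0], [1.0, 0.0]]  # each positive matches the other query

aligned = info_nce(queries, aligned_docs)
shuffled = info_nce(queries, shuffled_docs)
print(aligned < shuffled)
```

The loss is near zero when each query is closest to its own positive and grows when a negative scores higher. That gradient signal pulls positive pairs together and pushes the other pairs apart.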

What are hard negatives and why are they important?

Hard negatives are non-relevant documents that look very similar to the query or to its relevant documents, so a retriever tends to score them highly. They force the model to distinguish true positives from misleading near-matches, which improves ranking quality.

How are hard negatives typically mined for training?

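Hard negatives are usually mined by retrieving candidates for each query with a base model or index (for example, BM25 or an earlier checkpoint of the retriever) and keeping the top-scoring documents that are not labeled positive. The mined set can be refreshed periodically during training, and it is typically combined with in-batch negatives rather than replacing them.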
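The selection step can be sketched in a few lines of Python. The example assumes the base retriever has already produced one similarity score per candidate document for a single query. The function name `mine_hard_negatives`, the scores, and the document ids are hypothetical.

```python
def mine_hard_negatives(scores, positive_ids, num_negatives=2):
    """Pick the highest-scoring candidates that are not labeled positive.

    scores: dict mapping doc id -> similarity score from a base retriever
    positive_ids: set of doc ids known to be relevant to the query
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    negatives = [doc_id for doc_id in ranked if doc_id not in positive_ids]
    return negatives[:num_negatives]

# Assumed scores for one query; "doc_a" is the labeled positive.
candidate_scores = {"doc_a": 0.92, "doc_b": 0.88, "doc_c": 0.35, "doc_d": 0.81}

hard_negatives = mine_hard_negatives(
    candidate_scores, positive_ids={"doc_a"}, num_negatives=2
)
print(hard_negatives)
```

One caveat with this simple approach: a top-scoring document that is not labeled positive may still be relevant. In practice the mined set is often filtered, for example by skipping the very highest ranks or applying a score threshold.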