Document Preprocessing & Chunking Strategies

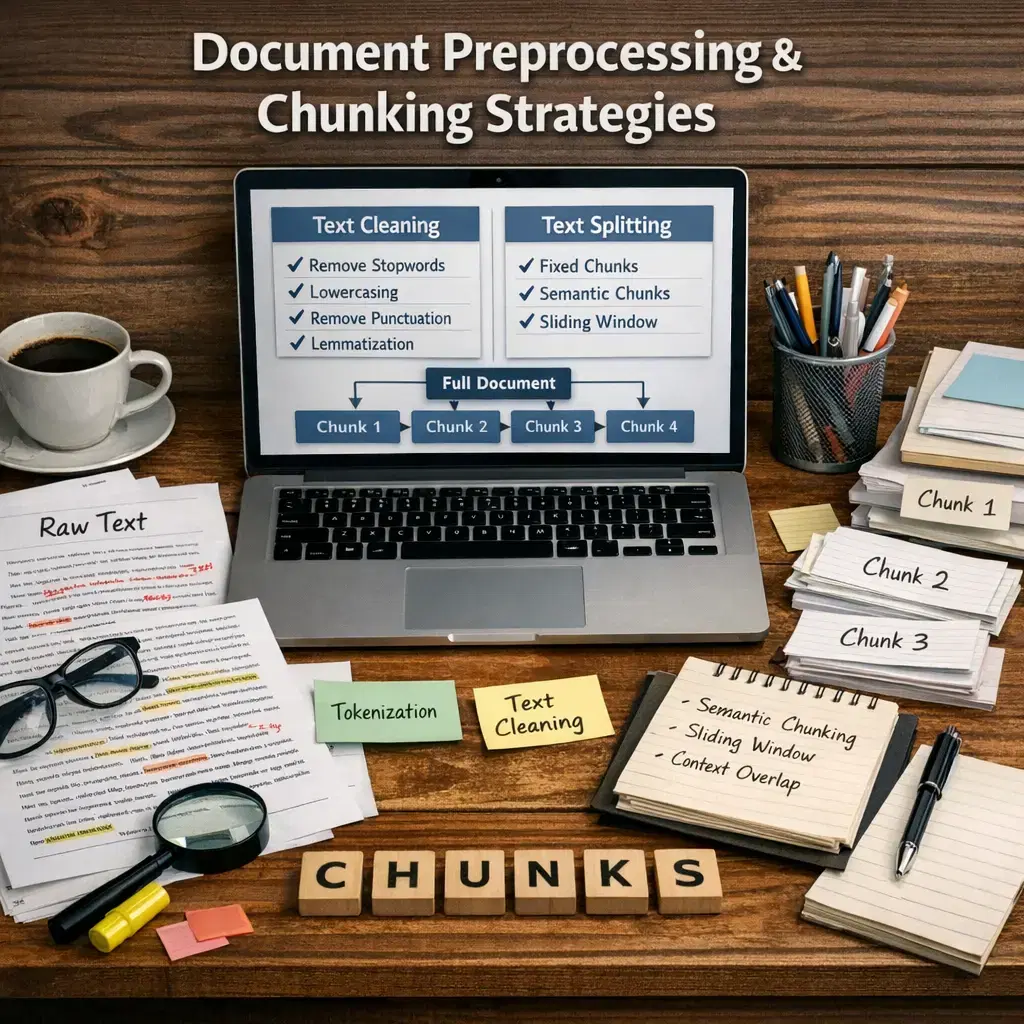

In Retrieval-Augmented Generation (RAG), document preprocessing and chunking prepare large documents and divide them into manageable segments, or "chunks," so that retrieval and generation work on clean, focused text. Preprocessing covers cleaning, formatting, and removing irrelevant content, while chunking breaks text into logical units for efficient indexing and retrieval. Together, these steps help a RAG system search, retrieve, and generate accurate, contextually relevant responses from large-scale document collections.

💡 Key Takeaways

- Understand what document preprocessing is and why it matters for NLP tasks

- Identify common preprocessing steps: cleaning, normalization, tokenization, and noise handling

- Describe chunking strategies for long documents: fixed-size windows, sentence/paragraph-based chunks, and overlapping chunks

- Choose chunk sizes and overlap settings based on downstream tasks like retrieval, QA, or summarization, balancing context and compute

❓ Frequently Asked Questions

What is document preprocessing in NLP and why is it important?

Document preprocessing in NLP means cleaning and normalizing text before modeling. It reduces noise and makes inputs more consistent, which generally helps downstream accuracy. Common steps include lowercasing, removing non-text characters, tokenization, normalization, and optional stemming or lemmatization.

What are the most common preprocessing steps for text documents?

Typical steps include tokenization, lowercasing, punctuation handling, removal or normalization of whitespace, optional stopword removal, and language-aware normalization or stemming/lemmatization.
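The steps listed above can be sketched as a small pipeline. This is a minimal illustration using only the Python standard library, not a recommended production setup: the function name `preprocess`, the `remove_stopwords` flag, and the tiny `STOPWORDS` set are all assumptions made for the example, and whitespace splitting stands in for a real tokenizer.

```python
import re
import unicodedata

# Hypothetical, deliberately tiny stopword list (illustration only).
STOPWORDS = {"a", "an", "the", "of", "and", "or", "to", "in", "is"}


def preprocess(text, remove_stopwords=False):
    """Return a list of cleaned, lowercased tokens from raw text."""
    # Normalize Unicode so visually identical characters compare equal.
    text = unicodedata.normalize("NFKC", text)
    # Lowercase for case-insensitive matching.
    text = text.lower()
    # Punctuation handling: replace anything that is not a word
    # character or whitespace with a space.
    text = re.sub(r"[^\w\s]", " ", text)
    # Collapse runs of whitespace and trim the ends.
    text = re.sub(r"\s+", " ", text).strip()
    # Naive whitespace tokenization (an assumption; real pipelines
    # usually use a language-aware or model-specific tokenizer).
    tokens = text.split(" ") if text else []
    # Optional stopword removal.
    if remove_stopwords:
        tokens = [t for t in tokens if t not in STOPWORDS]
    return tokens


print(preprocess("  Hello,   World!  "))
print(preprocess("The cat AND the hat.", remove_stopwords=True))
```

Stopword removal is left off by default because it is optional and task-dependent; whether it helps should be checked against the downstream task.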

What is chunking in document processing and why is it used?

Chunking splits long documents into smaller, manageable pieces to fit model input limits, improve processing efficiency, and preserve context by grouping related content.

What chunking strategies are commonly used (and when)?

Common strategies include: fixed-size chunks (constant token length for predictability), sliding window (overlap to maintain context across chunks), sentence-based chunks (to preserve coherence within sentences), and hierarchical or topic-based chunks (to align with document structure). Choose based on task and model limits.
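Two of these strategies can be sketched in a few lines. The code below is an illustrative sketch, not a reference implementation: `fixed_size_chunks` and `sentence_chunks` are hypothetical helper names, "tokens" are simply list items, words are counted by whitespace splitting, and sentence boundaries are found with a naive regular expression that will misfire on abbreviations such as "e.g.".

```python
import re


def fixed_size_chunks(tokens, size, overlap=0):
    """Fixed-size chunks; overlap > 0 turns this into a sliding window."""
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    step = size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        # Stop once the window has reached the end of the sequence,
        # so no trailing chunk is fully contained in the previous one.
        if start + size >= len(tokens):
            break
    return chunks


def sentence_chunks(text, max_words):
    """Group whole sentences into chunks of at most max_words words.

    A single sentence longer than max_words is kept intact as its own
    chunk rather than being split mid-sentence.
    """
    # Naive boundary rule (assumption): split after ., ! or ?
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks, current, count = [], [], 0
    for sentence in sentences:
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


print(fixed_size_chunks(list(range(10)), size=4, overlap=2))
print(sentence_chunks("One two. Three four five! Six?", max_words=5))
```

With `overlap=0` the first function yields plain fixed-size chunks; a nonzero overlap repeats the tail of each chunk at the head of the next, which is the sliding-window behaviour described above.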

How do you choose chunk size and overlap for a given task?

Choose based on the model's maximum input size and how much context the task needs. Larger chunks preserve more context but cost more compute, while overlap (the text shared between consecutive chunks; the stride is the chunk size minus the overlap) helps maintain dependencies across chunk boundaries. Test different sizes and overlaps empirically to find the best balance.
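To make the cost side of this trade-off concrete, the sketch below counts how many chunks a document produces for a given chunk size and overlap. The helper name `num_chunks` and the example numbers (a 1,000-token document, 200-token chunks) are assumptions chosen for illustration, and the formula assumes a sliding window that stops as soon as it reaches the end of the document.

```python
def num_chunks(n_tokens, size, overlap):
    """Number of sliding-window chunks needed to cover n_tokens tokens."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    if n_tokens <= 0:
        return 0
    if n_tokens <= size:
        return 1
    stride = size - overlap  # tokens the window advances per chunk
    # One chunk for the first window, plus enough strides to cover
    # the remaining tokens (integer ceiling division).
    return 1 + (n_tokens - size + stride - 1) // stride


# Hypothetical document of 1,000 tokens split into 200-token chunks.
for overlap in (0, 50, 100):
    n = num_chunks(1000, 200, overlap)
    print(f"overlap={overlap:>3}: {n} chunks, {n * 200} token slots at most")
```

In this example, going from no overlap to 50 tokens of overlap raises the chunk count from 5 to 7, so more overlap means more chunks to embed, store, and search.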