Embedding Models & Vector Representations+50

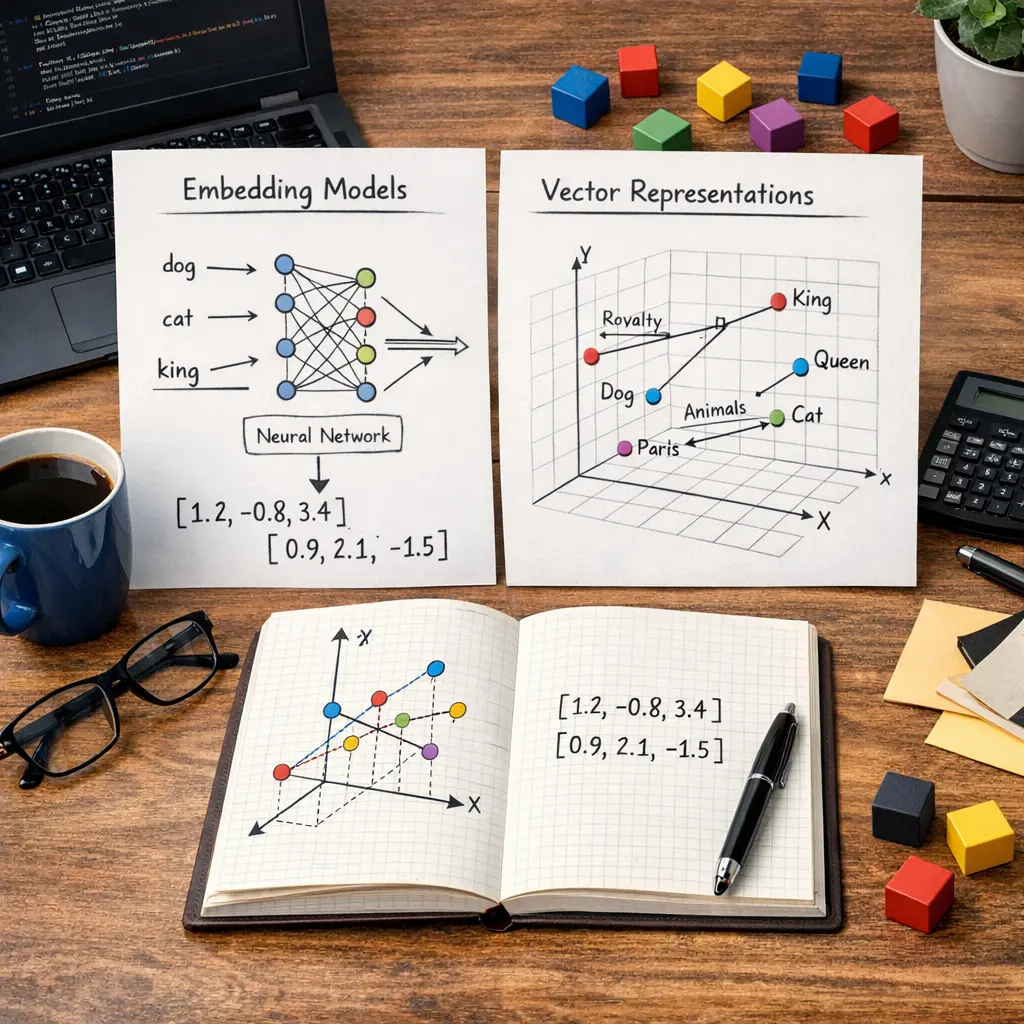

Embedding models transform text or data into numerical vectors that capture semantic meaning, enabling machines to understand relationships between concepts. In Retrieval-Augmented Generation (RAG), these vector representations are used to efficiently search large knowledge bases for relevant information. The retrieved data is then combined with generative models to produce more accurate and contextually rich responses, enhancing the performance of AI systems in tasks like question answering or information retrieval.

Embedding Models & Vector Representations+50

Embedding models transform text or data into numerical vectors that capture semantic meaning, enabling machines to understand relationships between concepts. In Retrieval-Augmented Generation (RAG), these vector representations are used to efficiently search large knowledge bases for relevant information. The retrieved data is then combined with generative models to produce more accurate and contextually rich responses, enhancing the performance of AI systems in tasks like question answering or information retrieval.

💡 Key Takeaways

- Define embedding models and vector representations and their role in NLP.

- Explain how text is transformed into numerical vectors using methods such as word embeddings and contextual embeddings.

- Compare dense versus sparse embeddings and discuss typical dimensionalities and trade-offs.

- Describe common similarity metrics (cosine similarity, Euclidean distance) used to compare vectors for tasks like search, recommendation, and clustering.

❓ Frequently Asked Questions

What is an embedding model?

An embedding model converts objects like words or images into dense numeric vectors in a continuous space, so similar items have nearby vectors.

What is a vector representation (embedding) and why use it?

A fixed-length numeric vector that encodes semantic or structural properties. It enables similarity comparisons, clustering, and feeding data into machine learning models.

How is similarity measured between embeddings?

Common metrics include cosine similarity (angle-based) and Euclidean distance. Cosine similarity is often preferred because it focuses on direction rather than magnitude.

What are common types of embedding models and their use-cases?

Static word embeddings (Word2Vec, GloVe) map words to fixed vectors; contextual embeddings (BERT, RoBERTa) provide context-sensitive vectors; sentence/document embeddings (Sentence-BERT, Universal Sentence Encoder) produce fixed-size vectors for longer text. Used for semantic search, clustering, and recommendations.