Hypothetical Document Embeddings (HyDE)

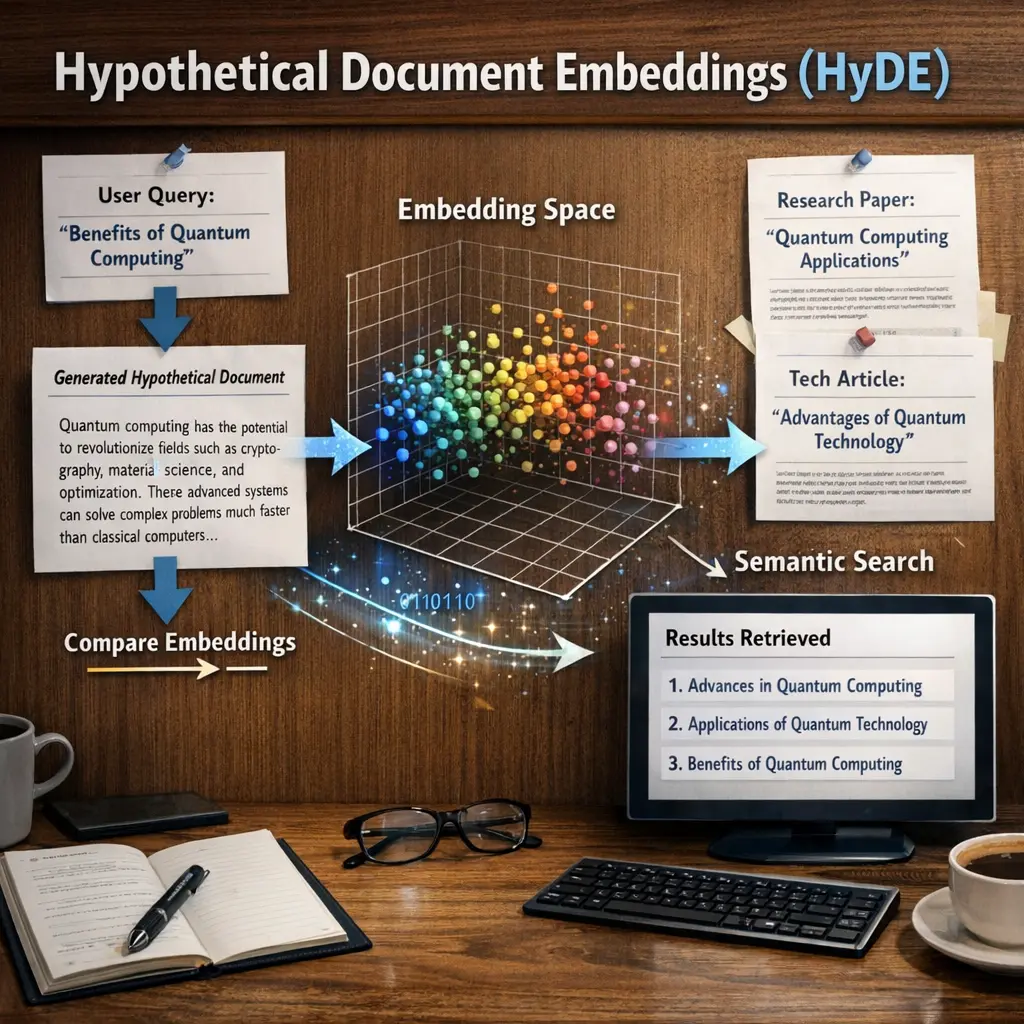

Hypothetical Document Embeddings (HyDE) is an advanced Retrieval-Augmented Generation (RAG) technique where, instead of directly embedding a user query, a language model first generates a hypothetical answer or document. This generated text is then embedded and used to search for relevant documents in a database. HyDE enhances retrieval effectiveness by bridging the semantic gap between user queries and stored documents, improving the relevance of retrieved information in complex search scenarios.

Hypothetical Document Embeddings (HyDE)

Hypothetical Document Embeddings (HyDE) is an advanced Retrieval-Augmented Generation (RAG) technique where, instead of directly embedding a user query, a language model first generates a hypothetical answer or document. This generated text is then embedded and used to search for relevant documents in a database. HyDE enhances retrieval effectiveness by bridging the semantic gap between user queries and stored documents, improving the relevance of retrieved information in complex search scenarios.

💡 Key Takeaways

- Understand the core idea of HyDE: using generated hypothetical documents to form concept embeddings.

- Learn the typical workflow: define concepts, generate hypothetical documents for each concept, and derive embeddings to represent them.

- Explore benefits: improved zero-shot or few-shot classification and easier domain adaptation through richer concept representations.

- Be aware of challenges: depends on the generator quality, potential biases in generated content, and the need for careful evaluation.

❓ Frequently Asked Questions

What is HyDE (Hypothetical Document Embeddings)?

HyDE is a retrieval technique that uses a large language model to generate a synthetic 'hypothetical' document capturing the essence of the information needed. The embedding of this document is used for retrieval.

How does HyDE differ from standard embedding-based retrieval?

Instead of embedding existing documents (or queries) directly, HyDE creates a fake or hypothetical document with the LLM and then uses its embedding to guide retrieval, often finding relevant information not present verbatim in the corpus.

What are common use cases for HyDE?

Open-domain question answering, knowledge-intensive dialogue, and retrieval-augmented generation where fast, relevant retrieval is important.

What should I watch out for with HyDE?

The model may introduce hallucinations or biases in the generated document. Effectiveness depends on prompt quality and the underlying LLM, and there can be additional computational costs.