Learning-to-Rank Objectives for RAG

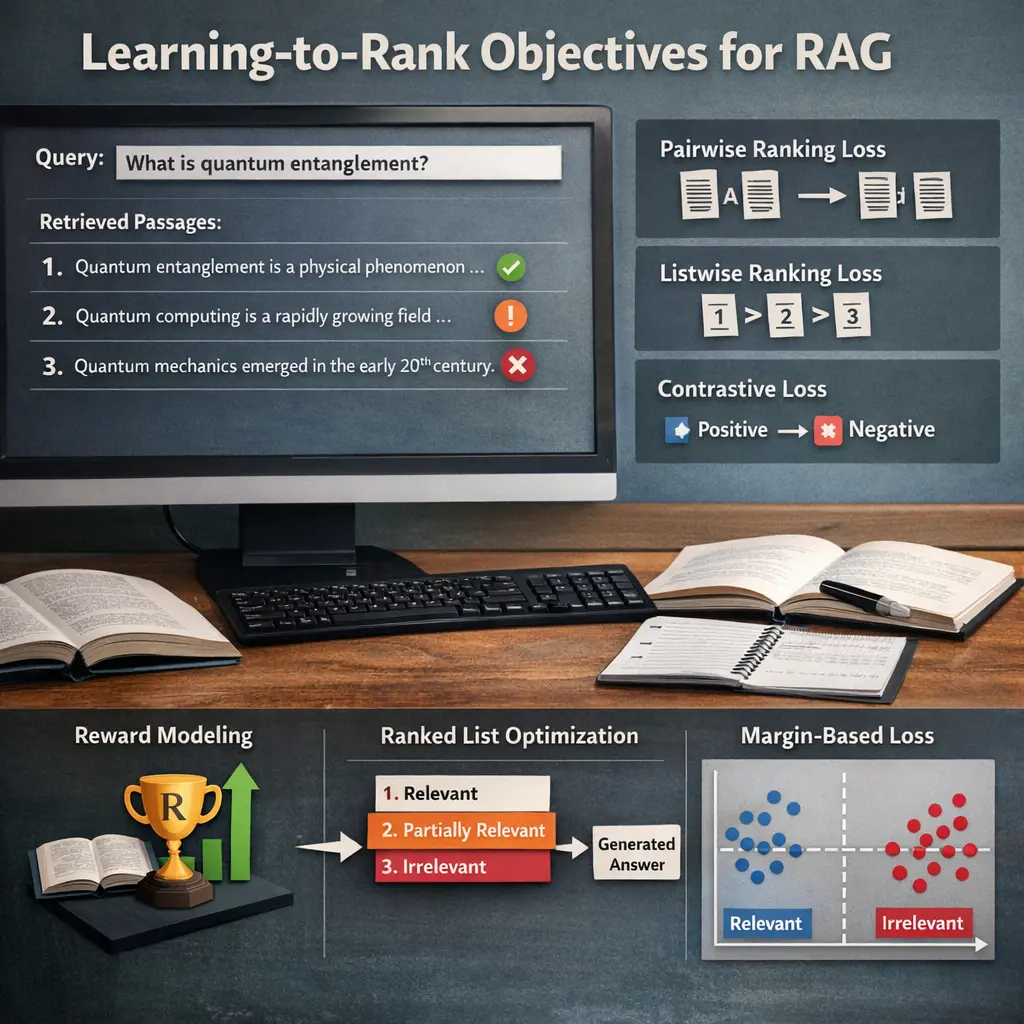

Learning-to-Rank (LTR) objectives in Retrieval-Augmented Generation (RAG) optimize how retrieved documents are prioritized before a response is generated. Instead of passing along whatever the first-stage retriever scores highest, these objectives train a model to rank the retrieved results by their usefulness for the downstream generation task. This helps improve the accuracy, relevance, and coherence of generated answers, because the generator sees the most informative and contextually appropriate documents first.

💡 Key Takeaways

- Understand how learning-to-rank objectives guide the selection of retrieved passages for RAG to improve answer quality.

- Compare common LTR formulations (pointwise, pairwise, listwise) and their implications for reranking in RAG.

- Learn how to create and use relevance signals (explicit judgments or user feedback) to train LTR models that boost factuality and relevance.

- Know how to evaluate LTR performance in RAG with metrics like nDCG, MAP, and precision@k, and how to use results to refine models.

- Grasp a practical RAG workflow: retrieve candidates, apply LTR reranker, select top docs, and generate final answer.
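The workflow in the last takeaway (retrieve candidates, rerank, select top documents, generate) can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `rerank_and_select`, `build_prompt`, and `toy_overlap_score` are hypothetical names, and the word-overlap scorer merely stands in for a trained LTR model. Retrieval and the actual generator call are assumed to happen outside this snippet.

```python
from typing import Callable, List, Tuple


def toy_overlap_score(query: str, doc: str) -> float:
    """Placeholder scorer (assumption): fraction of query words found in the doc.
    A real system would call a trained LTR reranker here."""
    q_words = set(query.lower().split())
    d_words = set(doc.lower().split())
    return len(q_words & d_words) / max(len(q_words), 1)


def rerank_and_select(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Score every retrieved candidate, sort by score, keep the top_k."""
    scored = [(doc, score_fn(query, doc)) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


def build_prompt(query: str, docs: List[str]) -> str:
    """Assemble the generator input from the selected documents."""
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(docs))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


# Candidates as they might come back from a first-stage retriever.
candidates = [
    "pairwise loss compares two documents",
    "the weather is sunny today",
    "listwise loss ranks a list of documents",
]
query = "pairwise loss documents"
top = rerank_and_select(query, candidates, toy_overlap_score, top_k=2)
prompt = build_prompt(query, [doc for doc, _ in top])
```

Keeping the scorer as a plain callable means the placeholder can later be swapped for a learned model without touching the selection or prompt-building steps.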

❓ Frequently Asked Questions

What is Learning-to-Rank (LTR) in Retrieval-Augmented Generation (RAG)?

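LTR trains a ranking model (typically a reranker applied to the retriever's candidates, though the retriever itself can also be trained this way) to order candidate documents by their relevance to the user query, so the generator receives better context for producing an answer.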

What does a learning-to-rank objective optimize in RAG?

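It optimizes the order of retrieved documents (not just their individual scores) so that more relevant items appear higher. Because ranking metrics such as nDCG or MRR are not directly differentiable, training usually optimizes a surrogate loss and uses these metrics for evaluation.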
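As a rough illustration of the two metrics named above, the sketch below computes nDCG@k and reciprocal rank for a single query. It assumes graded relevance labels listed in the order the system ranked the documents, and it uses the common exponential gain `2**rel - 1`; other gain definitions exist, so treat the function names and this choice as assumptions.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """Discounted cumulative gain over the first k positions."""
    return sum(
        (2 ** rel - 1) / math.log2(position + 2)
        for position, rel in enumerate(relevances[:k])
    )


def ndcg_at_k(relevances: Sequence[float], k: int) -> float:
    """DCG normalized by the DCG of the ideal (label-sorted) ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def reciprocal_rank(relevances: Sequence[float]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none is relevant."""
    for position, rel in enumerate(relevances):
        if rel > 0:
            return 1.0 / (position + 1)
    return 0.0


# Labels in ranked order: the most relevant document was placed second.
ranked_labels = [1, 3, 0]
score = ndcg_at_k(ranked_labels, k=3)
rr = reciprocal_rank([0, 1, 0])
```

MRR is then the mean of `reciprocal_rank` across queries, and a perfectly ordered list yields an nDCG of 1.0.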

What are common LTR objective types used with RAG?

Pointwise (predict a relevance score), Pairwise (compare two documents), and Listwise (optimize the ranking of a list of candidates); contrastive approaches are also used.

Why are LTR objectives beneficial for RAG systems?

They improve retrieval quality, reduce noise from irrelevant documents, and help the generator produce more accurate and coherent answers.