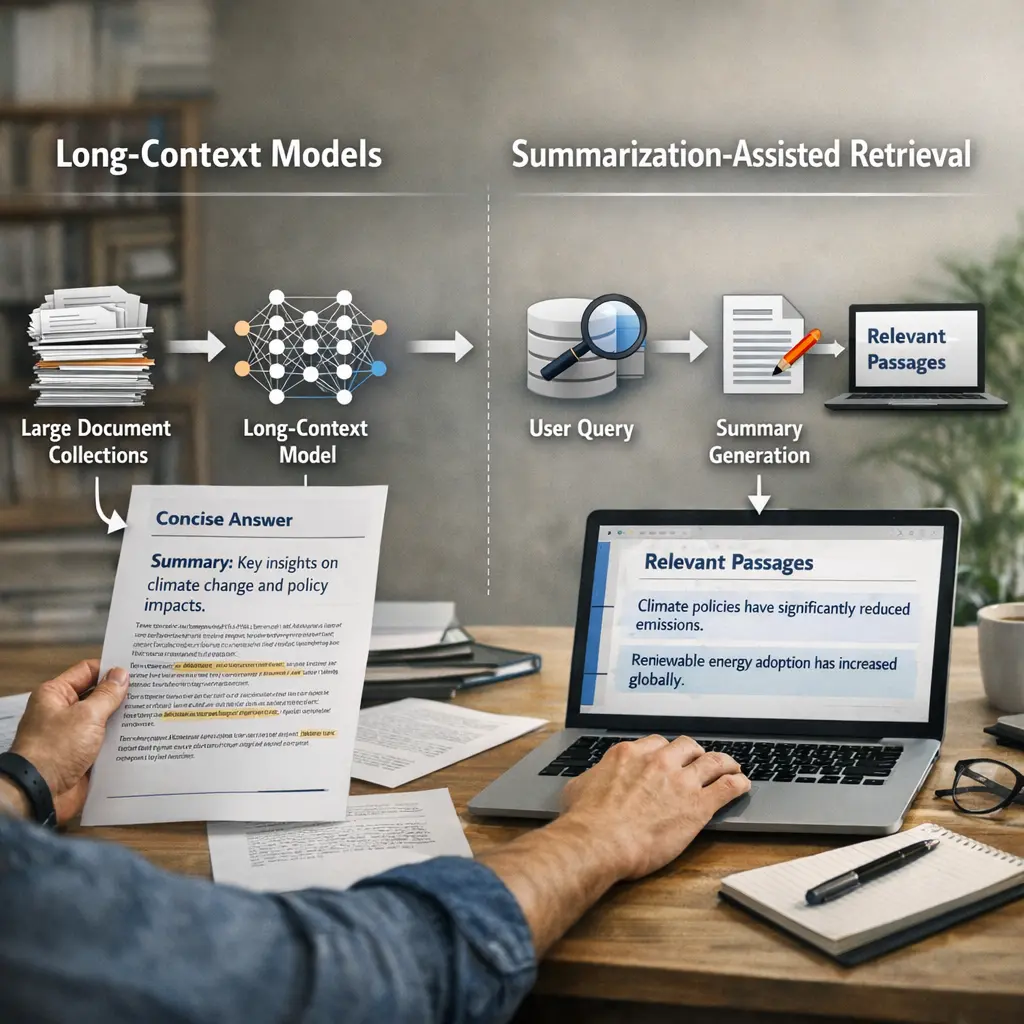

Long-Context Models and Summarization-Assisted Retrieval

Long-context models are AI systems designed to process large amounts of text in a single input. Summarization-assisted retrieval, often used within Retrieval-Augmented Generation (RAG), pairs these models with techniques that summarize documents from large data sources and extract the relevant parts. The system can then retrieve, condense, and generate answers or content by drawing on both the model's long-context understanding and summaries of the key points in retrieved documents.

💡 Key Takeaways

- Define long-context models and why they matter for handling long documents or conversations.

- Explain how summarization can speed up retrieval by condensing content before search.

- Compare summarization-assisted retrieval with standard retrieval-augmented methods and note trade-offs.

- Identify common techniques (extractive vs abstractive, query-focused summarization) and typical use cases.
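To make the distinction between generic and query-focused extractive summarization concrete, here is a minimal sketch. It assumes a naive regex sentence splitter and plain word-overlap scoring; the function names (`split_sentences`, `tokenize`, `extractive_summary`) are hypothetical and chosen for illustration only. Abstractive summarization, which generates new wording with a language model, is not covered here.

```python
import re
from collections import Counter
from typing import List, Optional


def split_sentences(text: str) -> List[str]:
    # Naive splitter (an assumption): break after ., !, or ? followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokenize(text: str) -> List[str]:
    # Lowercase alphanumeric tokens; no stemming or stop-word removal.
    return re.findall(r"[a-z0-9]+", text.lower())


def extractive_summary(text: str, k: int = 2, query: Optional[str] = None) -> List[str]:
    """Return the k highest-scoring sentences, kept in document order.

    Without a query, sentences are scored against document-wide word
    frequencies (generic summary). With a query, they are scored against
    the query terms only (query-focused summary).
    """
    sentences = split_sentences(text)
    weights = Counter(tokenize(text if query is None else query))

    def score(sentence: str) -> float:
        tokens = tokenize(sentence)
        if not tokens:
            return 0.0
        # Average term weight, so long sentences are not favored by length alone.
        return sum(weights[t] for t in tokens) / len(tokens)

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:k])]


document = (
    "Transformers use attention. "
    "The cafeteria serves soup on Fridays. "
    "Attention cost grows with input length."
)
focused = extractive_summary(document, k=1, query="soup Fridays")
print(focused)
```

Because the output is copied verbatim from the source, an extractive summary cannot introduce new claims, but it can miss information that is spread across several sentences.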

❓ Frequently Asked Questions

What are long-context models?

Long-context models are NLP models designed to process much longer input text than standard models, using extended attention windows, memory mechanisms, or hierarchical architectures. They can take in a long document or a multi-turn conversation as a whole, which improves tasks such as summarization and retrieval that depend on large contexts.

What is summarization-assisted retrieval?

Summarization-assisted retrieval is a retrieval approach that uses summaries to represent documents, enabling faster, more scalable search and ranking. By indexing summaries, or by comparing queries against these condensed representations instead of the full text, systems can locate relevant information efficiently while preserving essential content.
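The idea of searching over condensed representations can be sketched in a few lines. This is an illustrative toy, not a production design: it assumes the first sentence of each document serves as its summary and that bag-of-words cosine similarity stands in for a real ranking model. The `SummaryIndex` class and its helper functions are hypothetical names.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def lead_summary(text: str, max_sentences: int = 1) -> str:
    # Stand-in summarizer (an assumption): keep only the leading sentence(s).
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return " ".join(sentences[:max_sentences])


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SummaryIndex:
    """Indexes a short summary of each document, but returns the full text."""

    def __init__(self) -> None:
        self.docs: Dict[str, str] = {}
        self.vectors: Dict[str, Counter] = {}

    def add(self, doc_id: str, text: str) -> None:
        self.docs[doc_id] = text
        # Only the summary is vectorized, which keeps the index small.
        self.vectors[doc_id] = Counter(tokenize(lead_summary(text)))

    def search(self, query: str, top_k: int = 1) -> List[Tuple[str, str]]:
        q = Counter(tokenize(query))
        ranked = sorted(self.vectors, key=lambda d: cosine(q, self.vectors[d]), reverse=True)
        return [(d, self.docs[d]) for d in ranked[:top_k]]


index = SummaryIndex()
index.add("billing", "Invoices are issued monthly. Payment is due in 30 days. Late fees apply.")
index.add("security", "Passwords must be rotated every 90 days. Use a password manager.")
hits = index.search("when are invoices issued")
print(hits[0][0])
```

The sketch also shows the main trade-off: a query about late fees shares no terms with either summary, because that detail was dropped when the billing document was condensed. Anything the summary omits cannot be matched at search time.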

How does context length affect model performance?

A longer context allows the model to consider more information, which can improve accuracy on long documents. However, it increases computation and memory usage, and the gains can diminish as more text is added. When the context is too short, important details may be missed; summarization can help by condensing content while keeping key ideas.
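A rough back-of-the-envelope calculation shows why cost rises quickly with context length. The sketch below assumes standard dense self-attention, where every token is compared with every other token, so the number of attention scores per head and layer grows with the square of the token count. Many long-context models use optimizations that reduce this, so treat the figures as an upper-bound illustration. The function names are hypothetical.

```python
def attention_score_entries(context_tokens: int) -> int:
    # Dense self-attention (an assumption): one score per pair of tokens.
    return context_tokens * context_tokens


def relative_cost(short_context: int, long_context: int) -> float:
    # How many times more attention scores the longer context needs.
    return attention_score_entries(long_context) / attention_score_entries(short_context)


ratio = relative_cost(4_000, 32_000)
print(ratio)  # → 64.0
```

Under this assumption, an 8x longer context needs 64x as many attention scores, which is one reason condensing content before it reaches the model can be attractive.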

What are common challenges with long-context models and summarization-assisted retrieval?

Challenges include high computational and memory demands, potential hallucinations or inaccuracies in summaries, misalignment between summaries and the full content they represent, and difficulty evaluating retrieval quality across long documents.