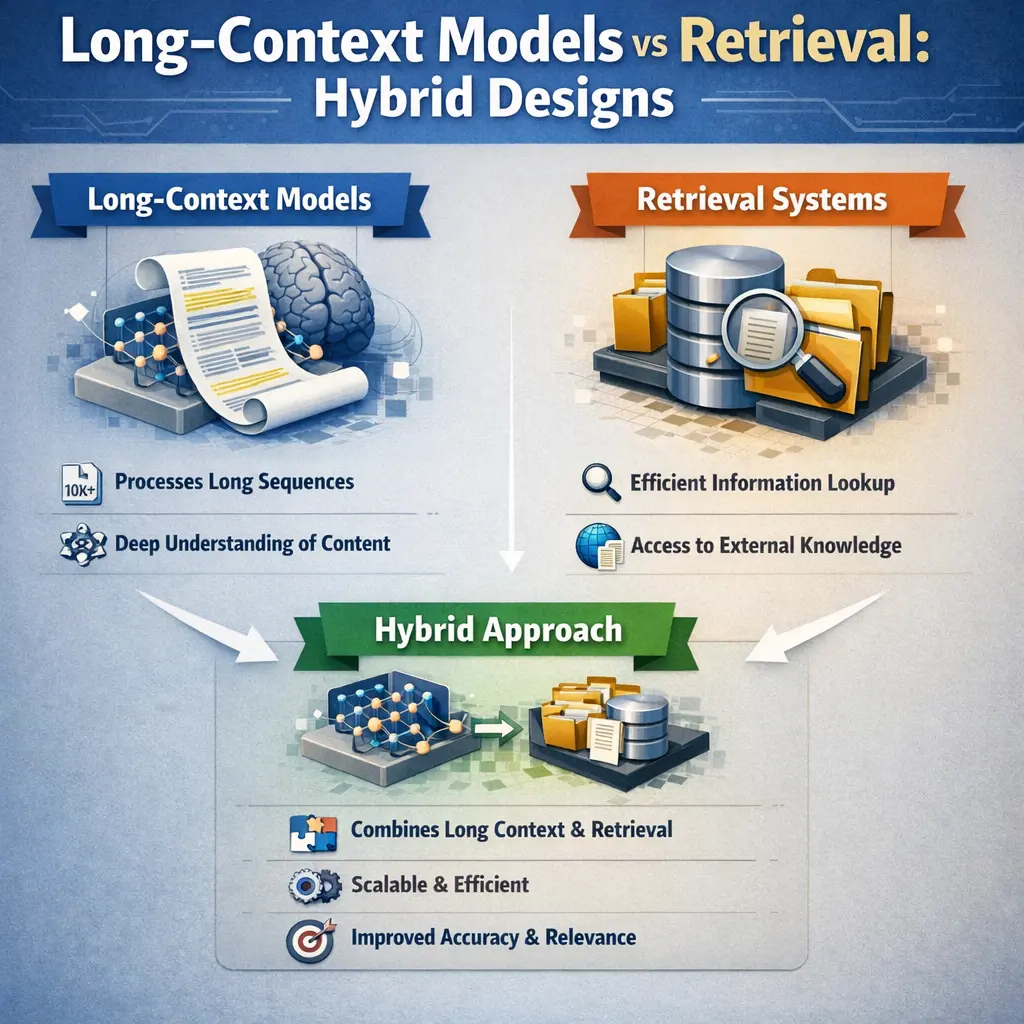

Long-Context Models vs Retrieval: Hybrid Designs

Long-context models accept very long input sequences and reason over them directly, keeping the relevant material inside the model's context window. Retrieval-augmented generation (RAG), by contrast, fetches relevant documents from an external source and adds them to the prompt. Hybrid designs combine the two: retrieval narrows a large or frequently changing collection down to the most pertinent passages, and a long-context model reasons over the query together with everything retrieved. Compared with either approach alone, this can improve accuracy and coherence on tasks that need both broad knowledge and sustained reasoning over long material.
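As a rough sketch of the hybrid flow described above, the Python below takes chunks that a retriever has already scored, packs the highest-scoring ones into a fixed token budget, and assembles the prompt a long-context model would receive. The `Chunk` type, the `build_hybrid_prompt` helper, and the word-count token estimate are hypothetical simplifications; a real system would count tokens with the target model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    text: str
    score: float  # relevance score from the retriever (higher is better)


def approx_tokens(text: str) -> int:
    """Rough token estimate: whitespace-separated words (an assumption;
    real models use subword tokenizers, so true counts will differ)."""
    return len(text.split())


def build_hybrid_prompt(query: str, chunks: list[Chunk], budget: int) -> str:
    """Greedily pack the highest-scoring retrieved chunks into the prompt
    until the approximate token budget of the long-context model is used up."""
    used = approx_tokens(query)
    selected: list[Chunk] = []
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = approx_tokens(chunk.text)
        if used + cost > budget:
            continue  # this chunk does not fit; a smaller, lower-ranked one still might
        selected.append(chunk)
        used += cost
    context = "\n\n".join(f"[{c.doc_id}] {c.text}" for c in selected)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer using the context above."


# Usage with made-up chunks and a deliberately tiny budget.
chunks = [
    Chunk("a", "Hybrid systems retrieve passages first.", 0.9),
    Chunk("b", "Unrelated text about cooking pasta at home tonight.", 0.1),
    Chunk("c", "Long-context models then read the passages.", 0.7),
]
prompt = build_hybrid_prompt("How do hybrid systems work?", chunks, budget=20)
print(prompt)
```

With a budget of 20 approximate tokens, chunks `a` and `c` fit and the low-scoring chunk `b` is left out. Greedy packing is the simplest choice; a production system might also deduplicate overlapping chunks or reserve room for the model's answer.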

💡 Key Takeaways

- Understand the difference between long-context models and retrieval-based methods for handling long text inputs.

- Learn how hybrid designs combine internal model reasoning with external retrieval to scale knowledge access.

- Identify common architectural patterns in hybrid systems (e.g., chunking, retrievers, readers, and data fusion) and their tradeoffs.

- Assess practical factors for choosing a design (latency, cost, data freshness, and domain-specific needs).
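Two of the architectural patterns listed above, chunking and fusion, are small enough to sketch directly. The code below assumes word-based chunking with a fixed overlap, and uses reciprocal rank fusion (RRF), one common way to merge ranked lists from several retrievers, as an example of fusion. The helper names are hypothetical, and `k=60` is the constant popularized by the original RRF paper rather than a tuned value.

```python
from collections import defaultdict


def chunk_words(text: str, size: int, overlap: int) -> list[str]:
    """Split text into windows of `size` words; each window shares `overlap`
    words with the previous one so facts at boundaries are not cut in half."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reaches the end of the text
    return chunks


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists of chunk IDs (e.g. from a keyword retriever and a
    dense retriever) by summing 1 / (k + rank) over every list an ID appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


chunks = chunk_words("one two three four five six seven eight nine ten", size=4, overlap=1)
print(chunks)  # ['one two three four', 'four five six seven', 'seven eight nine ten']

keyword_ranking = ["c1", "c2", "c3"]
dense_ranking = ["c3", "c1", "c4"]
fused = reciprocal_rank_fusion([keyword_ranking, dense_ranking])
print(fused)  # ['c1', 'c3', 'c2', 'c4']
```

RRF only needs ranks, not comparable scores, which is why it is a convenient way to combine retrievers whose raw scores live on different scales. Overlap trades extra index size for fewer facts split across chunk boundaries.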

❓ Frequently Asked Questions

What are long-context models?

Long-context models process and reason over very long input sequences, using techniques such as extended attention windows, memory modules, or hierarchical processing to retain information across many tokens.
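To make one of these techniques concrete, the sketch below builds the mask behind causal sliding-window (local) attention, a pattern that models such as Longformer and Mistral 7B use to keep attention cost manageable on long sequences. It is an illustration of the masking rule only, not a full attention layer; `sliding_window_mask` is a hypothetical helper name.

```python
def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """Causal sliding-window mask: query position i may attend to key
    position j only if j <= i and i - j < window. Each token attends to at
    most `window` tokens, so cost grows as O(seq_len * window) rather than
    O(seq_len ** 2); stacking layers lets information travel further back."""
    return [
        [j <= i and i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]


mask = sliding_window_mask(seq_len=6, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# x.....
# xx....
# xxx...
# .xxx..
# ..xxx.
# ...xxx
```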

What is retrieval in language models?

Retrieval uses a retriever to fetch relevant documents from a separate knowledge base (for example, a search index or a vector store) and adds them to the model's input, conditioning its output on them. This gives the model access to more information than its training data alone, including content created after training.
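A minimal retriever can be sketched with TF-IDF vectors and cosine similarity, shown here in pure Python as a stand-in for the BM25 or dense-embedding retrievers more typical in practice. The `TfidfRetriever` class and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class TfidfRetriever:
    """Sparse retriever: TF-IDF weighted term vectors ranked by cosine similarity."""

    def __init__(self, docs: dict[str, str]):
        self.doc_tokens = {doc_id: tokenize(text) for doc_id, text in docs.items()}
        n = len(docs)
        df = Counter(t for toks in self.doc_tokens.values() for t in set(toks))
        # Smoothed IDF: terms found in every document still keep a small positive weight.
        self.idf = {t: math.log((1 + n) / (1 + d)) + 1.0 for t, d in df.items()}
        self.doc_vecs = {d: self._vector(toks) for d, toks in self.doc_tokens.items()}

    def _vector(self, tokens: list[str]) -> dict[str, float]:
        counts = Counter(tokens)
        # Terms that never occur in the collection carry no weight.
        return {t: c * self.idf[t] for t, c in counts.items() if t in self.idf}

    @staticmethod
    def _cosine(a: dict[str, float], b: dict[str, float]) -> float:
        dot = sum(w * b.get(t, 0.0) for t, w in a.items())
        norm_a = math.sqrt(sum(w * w for w in a.values()))
        norm_b = math.sqrt(sum(w * w for w in b.values()))
        return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

    def retrieve(self, query: str, k: int = 2) -> list[tuple[str, float]]:
        """Return the top-k (doc_id, similarity) pairs for the query."""
        q = self._vector(tokenize(query))
        scored = [(d, self._cosine(q, vec)) for d, vec in self.doc_vecs.items()]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]


docs = {
    "pricing": "Our pricing changed in March: the pro plan now costs more.",
    "setup": "To set up the client, install the package and add your API key.",
    "history": "The company was founded to build search tools.",
}
retriever = TfidfRetriever(docs)
top = retriever.retrieve("How much does the pro plan cost now?", k=1)
print(top)
```

The retrieved text would then be placed in the model's prompt. Because the index is built from the documents at query time rather than from training data, updating `docs` is enough to change what the model can see.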

What is a hybrid design in this context?

A hybrid design combines long-context processing with retrieval: a retriever selects relevant external documents, and a long-context model reasons over the query, any context already supplied, and the retrieved documents together in a single input.

When should you use a hybrid design?

Use a hybrid design when a task requires both deep reasoning over long sources and access to up-to-date or broad external knowledge. A hybrid can improve coverage and accuracy, but it adds system complexity and extra latency from the retrieval step.
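One way to turn this guidance into code is a small routing function that picks a strategy per request. The sketch below is a hypothetical heuristic, not a standard rule: the `Strategy` names, the `reserved_tokens` headroom, and the 128,000-token context window in the usage lines are all illustrative assumptions.

```python
from enum import Enum


class Strategy(Enum):
    LONG_CONTEXT = "long_context"  # put the whole source in the prompt, no retrieval
    RAG = "rag"                    # retrieve a few short passages
    HYBRID = "hybrid"              # retrieve, then reason over many passages in a long context


def choose_strategy(source_tokens: int, context_window: int,
                    needs_external_knowledge: bool, needs_long_reasoning: bool,
                    reserved_tokens: int = 2_000) -> Strategy:
    """Hypothetical routing heuristic.

    reserved_tokens: assumed headroom for instructions and the model's answer.
    """
    fits = source_tokens + reserved_tokens <= context_window
    if fits and not needs_external_knowledge:
        # Everything the task needs already fits: skip retrieval entirely.
        return Strategy.LONG_CONTEXT
    if needs_long_reasoning:
        # Retrieval supplies missing or out-of-window material; the long
        # context lets the model reason across all of it at once.
        return Strategy.HYBRID
    # A handful of retrieved passages is enough; a short prompt saves latency and cost.
    return Strategy.RAG


print(choose_strategy(50_000, 128_000, needs_external_knowledge=False, needs_long_reasoning=True))
print(choose_strategy(50_000, 128_000, needs_external_knowledge=True, needs_long_reasoning=True))
print(choose_strategy(5_000_000, 128_000, needs_external_knowledge=False, needs_long_reasoning=False))
```

The heuristic only reaches for the more complex hybrid path when both conditions from the answer above hold in some form: the task needs sustained reasoning, and the model cannot simply be handed everything it needs in one prompt.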