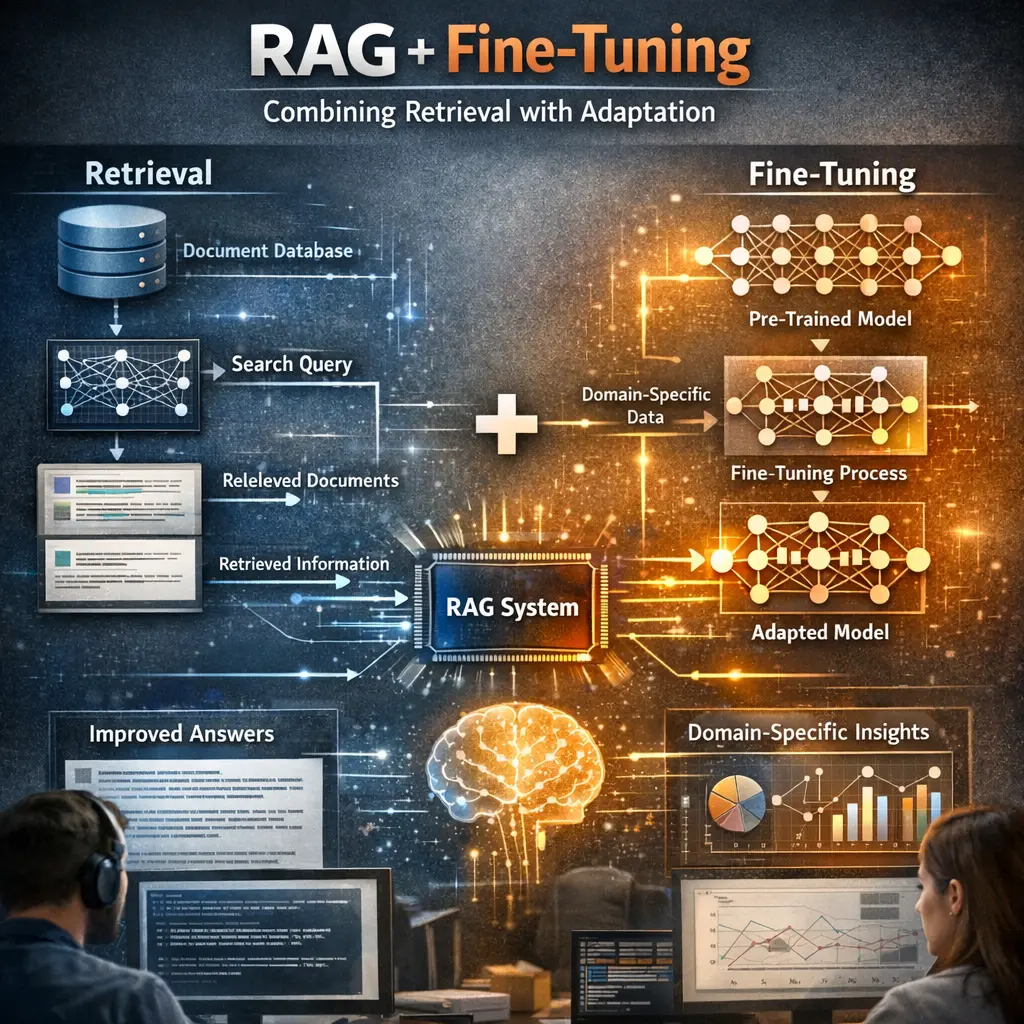

RAG + Fine-Tuning: Combining Retrieval with Adaptation

RAG + Fine-Tuning is a natural language processing technique that combines Retrieval-Augmented Generation (RAG) with model fine-tuning. The language model retrieves relevant external documents to inform its responses, and it is also adapted through fine-tuning on domain-specific data. Retrieval supplies external knowledge and fine-tuning tailors the model to the domain, which together let it generate more accurate, contextually relevant, and specialized outputs.

💡 Key Takeaways

- Understand Retrieval-Augmented Generation (RAG) and how retrieving documents can improve factual accuracy and coverage.

- Learn how to combine retrieval with fine-tuning or adapters to tailor outputs to a specific domain or knowledge base.

- Identify the core RAG components: a retriever, a generator (reader), and the mechanism that conditions generation on retrieved passages.

- Know the practical steps to build a RAG pipeline: index documents, fine-tune or adapt the model with retrieval in the loop, and evaluate with retrieval-aware metrics.
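
The "retrieval-aware metrics" in the last takeaway are not specified in this article. As one possible illustration, the sketch below computes recall@k and mean reciprocal rank (MRR), two metrics commonly used to score a retriever. The function names and the document IDs in the usage note are hypothetical.

```python
from typing import Iterable, Sequence, Set, Tuple


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def reciprocal_rank(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs: Iterable[Tuple[Sequence[str], Set[str]]]) -> float:
    """Average reciprocal rank over (retrieved, relevant) pairs, one per query."""
    runs = list(runs)
    if not runs:
        return 0.0
    return sum(reciprocal_rank(ret, rel) for ret, rel in runs) / len(runs)
```

For example, `recall_at_k(["d3", "d1", "d7"], {"d1", "d2"}, k=2)` finds one of the two relevant documents in the top two results. These metrics only score the retriever; answer quality needs a separate check.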

❓ Frequently Asked Questions

What is RAG in simple terms?

RAG stands for Retrieval-Augmented Generation. It combines a retriever that fetches relevant documents with a generator that crafts answers conditioned on those documents.
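
To make the retriever/generator split concrete, here is a minimal sketch. It assumes a toy retriever that ranks passages by word overlap with the query, and it stops at building the prompt that a generator would receive. The names `retrieve` and `build_prompt`, and the prompt wording, are hypothetical and not part of any particular library.

```python
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Toy retriever: rank documents by how many query tokens they share."""
    query_counts = Counter(tokenize(query))
    scored = []
    for doc in docs:
        doc_counts = Counter(tokenize(doc))
        overlap = sum(min(query_counts[t], doc_counts[t]) for t in query_counts)
        scored.append((overlap, doc))
    # sorted() is stable, so ties keep their original document order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(query: str, passages: List[str]) -> str:
    """Condition generation on evidence by placing passages before the question."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the passages below.\n\n"
        f"{context}\n\nQuestion: {query}\nAnswer:"
    )
```

In a real system the overlap score would be replaced by a stronger retriever, and the returned prompt would be passed to whichever language model serves as the generator.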

How does fine-tuning interact with RAG?

Fine-tuning can adapt the retriever and/or the generator on domain data (often via adapters or targeted training), improving retrieval relevance and the quality of generated answers.
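
The article does not name a specific adapter method. As one well-known example, the sketch below shows the forward pass of a LoRA-style layer in plain Python: the base weight matrix stays frozen, and only two small matrices `A` and `B` would be trained. The class name `LoRALinear` and its parameters are chosen for illustration. No training loop is included.

```python
import random
from typing import List

Matrix = List[List[float]]


def matvec(m: Matrix, v: List[float]) -> List[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Linear layer y = W x + (alpha / rank) * B (A x), with W kept frozen."""

    def __init__(self, weight: Matrix, rank: int, alpha: float = 1.0, seed: int = 0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen base weights, never updated
        self.scale = alpha / rank
        # A starts with small random values and B with zeros, so the adapter
        # contributes nothing until training updates B.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x: List[float]) -> List[float]:
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameter_count(self) -> int:
        """Only A and B are trainable: rank * d_in + d_out * rank values."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])
```

Because `B` starts at zero, the adapted layer initially reproduces the base layer exactly. For a weight matrix with `d_out` rows and `d_in` columns, the adapter trains `rank * (d_in + d_out)` values instead of `d_in * d_out`, which is why adapters are cheaper than full fine-tuning when the rank is small. The same idea can in principle be applied to the generator, the retriever's encoder, or both.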

Why combine retrieval with adaptation?

Retrieval provides evidence from sources, while adaptation tailors the model to a specific domain or style, reducing hallucinations and increasing factual accuracy.

What are typical steps to implement RAG + fine-tuning?

Set up a document store, configure a retriever (e.g., BM25 or dense retrieval), integrate a generator, prepare fine-tuning data, train end-to-end or with adapters, and then evaluate.
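
As an illustration of the "document store plus retriever" steps, here is a small in-memory BM25 index. It is a sketch, not a production retriever: the class name `BM25Index` is hypothetical, `k1=1.5` and `b=0.75` are common default values rather than settings from this article, and the IDF uses the smoothed form that stays non-negative.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25Index:
    """In-memory document store scored with Okapi BM25."""

    def __init__(self, docs: List[str], k1: float = 1.5, b: float = 0.75):
        self.docs = docs
        self.k1, self.b = k1, b
        self.term_counts = [Counter(tokenize(d)) for d in docs]
        self.doc_lengths = [sum(c.values()) for c in self.term_counts]
        self.avg_length = sum(self.doc_lengths) / len(docs) if docs else 0.0
        # Number of documents that contain each term at least once.
        self.doc_freq = Counter()
        for counts in self.term_counts:
            self.doc_freq.update(counts.keys())

    def idf(self, term: str) -> float:
        n = self.doc_freq.get(term, 0)
        return math.log((len(self.docs) - n + 0.5) / (n + 0.5) + 1.0)

    def score(self, query: str, index: int) -> float:
        if self.avg_length == 0:
            return 0.0
        counts = self.term_counts[index]
        # Length normalization: longer-than-average documents are penalized.
        norm = self.k1 * (1 - self.b + self.b * self.doc_lengths[index] / self.avg_length)
        total = 0.0
        for term in tokenize(query):
            freq = counts.get(term, 0)
            if freq:
                total += self.idf(term) * freq * (self.k1 + 1) / (freq + norm)
        return total

    def search(self, query: str, k: int = 3) -> List[Tuple[str, float]]:
        """Return the top-k (document, score) pairs, best first."""
        order = sorted(range(len(self.docs)), key=lambda i: self.score(query, i), reverse=True)
        return [(self.docs[i], self.score(query, i)) for i in order[:k]]
```

A dense retriever would replace `score` with a similarity between learned query and document embeddings. The indexing and search steps around it keep the same shape.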

What are common challenges to watch for?

Common challenges include computational cost, keeping the knowledge base up to date, data quality for fine-tuning, potential overfitting, and ensuring that retrieved evidence actually supports the final answer.
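
The last challenge, checking that the evidence supports the answer, can be approximated very roughly with a lexical heuristic. The sketch below measures what fraction of the answer's content words appear in the retrieved passages. This is an assumption-laden stand-in and not a real entailment check: the function names and the stopword list are hypothetical, and a low score only flags an answer for closer review.

```python
import re
from typing import List

# Small illustrative stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "is", "are",
             "on", "for", "with", "by"}


def content_tokens(text: str) -> List[str]:
    """Lowercased alphanumeric tokens with stopwords removed."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS]


def support_score(answer: str, passages: List[str]) -> float:
    """Fraction of the answer's content tokens that occur in any passage."""
    answer_tokens = content_tokens(answer)
    if not answer_tokens:
        return 0.0
    evidence = set()
    for passage in passages:
        evidence.update(content_tokens(passage))
    supported = sum(1 for token in answer_tokens if token in evidence)
    return supported / len(answer_tokens)
```

A score near 1.0 means the answer mostly reuses wording from the evidence, while a low score suggests the generator may have added unsupported material. Paraphrases will score low even when they are correct, so this is best treated as a cheap first filter.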